
Computer

RESURRECTION

The Bulletin of the Computer Conservation Society

ISSN 0958-7403

Number 38

Summer 2006

News Round-Up

Society Activity

CCS Web Site Information

Creating Computer Courses, by Paul Samet

Getting the Job Done, by George Felton

Role of the National Computing Centre, by David Firnberg

Creating a Sane Professional Body, by John Ivinson

Pictures of the Past

Forthcoming Events

Committee of the Society

Aims and Objectives


-101010101-

All officers and members of the Committee were unanimously re-elected to serve for another year at the Society's AGM on 4 May.

-101010101-

The principal news item announced at the AGM was that the reconstruction of the Bletchley Park Bombe is now complete. This major milestone has been reached after nearly nine years' dedicated work by John Harper and his team. John estimates that another year or so will be needed to complete the commissioning. His latest report can be found on pages 4-6.

-101010101-

CCS Chairman Roger Johnson, reviewing the year's activities at the AGM, reported that the Society held 11 seminars during the year, six in London and five in Manchester. Audiences were typically between 30 and 50 people.

-101010101-

The LEO Society will be celebrating the 60th anniversary of the pioneering Lyons Electronic Office project next year. The CCS is exploring with them the possibility of a joint meeting to commemorate the anniversary on 19 October 2007.

-101010101-

Simon Greenish has succeeded Christine Large as Director of the Bletchley Park Trust.

-101010101-

The book "Electronic Brains - Stories from the Dawn of the Computer Age" is now available in paperback at the price of £8.99, a reduction of £7 on the hardback price. It is published by Granta Books, and the ISBN is 1-86207-839-4. For a review see Resurrection issue 36, p32.

-101010101-

The Science Museum is looking for contributors to a BBC Science project. It is looking for "outgoing hands-on Technology types from a range of engineering disciplines... with a good building/fixing/wiring background". Anyone interested should contact Andrea Paterson on 020 8752 6993.

-101010101-


Dan Hayton

The figures for the last year showed that the Society has managed to stay ahead, just, of rising costs with the generous help of the BCS in producing and distributing Resurrection and with the Bombe project at Bletchley Park.

However it looks as though we may be facing increasing costs both to support existing working parties and to meet expanding interest in conservation, restoration and reconstruction.

Having taken a look at the past and future figures, I'm afraid I am going to suggest that we should ask for £15 as a voluntary "subscription".

Members have given the Society financial support and their time and expertise to enable us to be pre-eminent in the field of the practical and historical study of early computers.

Thanks to all members for their support and enthusiasm for the CCS.

Contact Dan Hayton at Daniel@newcomen.demon.co.uk.

Hamish Carmichael

Tilly Blyth has asked me to make a searchable catalogue of the photographs in Section 8 of the ICL Archive at Blythe House. I have started at the beginning of the Powers-Samas photographs, and am working through large numbers of prints of naked tabulators, the shots concentrating on minutiae of mechanical detail whose significance is nowhere explained and is not self-evident. Looking ahead, the Ferranti collection, by contrast, is already well catalogued.

Contact Hamish Carmichael at Hamishc@globalnet.co.uk.

Simon Lavington

CCS volunteers are making slow but steady progress in compiling technical information on a selection of early British computers - see www.ourcomputerheritage.org/wp. An example of recent detective work is the entry for the Elliott 403 computer which was delivered to the Weapons Research Establishment, Woomera, Australia, in August 1955, where it was used for the analysis of guided missile trials. Many thanks to David Pentecost and colleagues for the substantial collection of uploaded pages containing interesting information on the Elliott 400 series machines.

Browsing such information has recently been made easier with the addition of tabs and drop-down menus to the Pilot Study Web site, for which we thank Phil Gregg at Birkbeck College. Finally, here's a cry for help. We badly need someone to take responsibility for the English Electric section of the Web site and especially for the Deuce and KDF9 subsections. If you can help, or if you know the name of a likely contact, please please contact me.

Contact Simon Lavington at lavis@essex.ac.uk.

John Harper

Since our last report in January there has been a dramatic change at Bletchley Park. The heating came on and we were able to make very good progress, so much so that I can now report that the reconstruction of the Bombe is complete, and we have now fully entered the commissioning phase.

However, to complete the report we must make it clear that we still do not have the full five sets of drums needed to run all 60 wheel orders, although we will soon have three sets, giving us the 108 drums needed to fully populate the front of the machine. The full set is estimated to be complete towards the end of this year. We should also add that a few slightly incorrect relays are fitted and will need to be changed: those fitted are genuine BTM types but not exactly to specification. Our motor and lubrication pump are also not exactly to the original specification, but if the correct ones should ever be located they can be fitted very easily.
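The drum arithmetic above can be checked in a few lines of Python. This is a minimal sketch, and it rests on an assumption the report does not state: that each "set" is 36 drums of a single Enigma wheel type, one for each of the 36 Enigma equivalents on the front of the machine (three banks of 12). On that reading, a wheel order can only be run if drums of all three of its wheel types are to hand.

```python
from itertools import permutations

# Assumption: the Bombe front carries 36 Enigma equivalents (three banks of
# 12), each needing one drum per wheel position (left, middle, right).
SCRAMBLERS = 36
DRUMS_PER_SCRAMBLER = 3
WHEEL_TYPES = ["I", "II", "III", "IV", "V"]  # the five Enigma wheel types


def wheel_orders(types_available):
    """Every ordered choice of three different wheel types."""
    return list(permutations(types_available, 3))


drums_on_front = SCRAMBLERS * DRUMS_PER_SCRAMBLER  # 36 * 3
all_orders = wheel_orders(WHEEL_TYPES)             # 5 * 4 * 3
# Hypothetical: suppose the three finished sets are of types I, II and III.
orders_with_three_sets = wheel_orders(WHEEL_TYPES[:3])  # 3 * 2 * 1

print(drums_on_front, len(all_orders), len(orders_with_three_sets))  # 108 60 6
```

On this reading, three sets fill all 108 positions but cover only 6 of the 60 wheel orders, which is why the remaining two sets still matter.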

The breakthrough over the last few months has been the sudden and rapid progress in manufacturing and testing the sense relays and their associated resistors. The bad weather did us a favour, because it kept our team working on these in the warm, away from BP, rather than on other jobs. All 104 relays were completed and successfully tested before being fitted to shelves, and these shelves were then fitted to the machine without any significant problems.

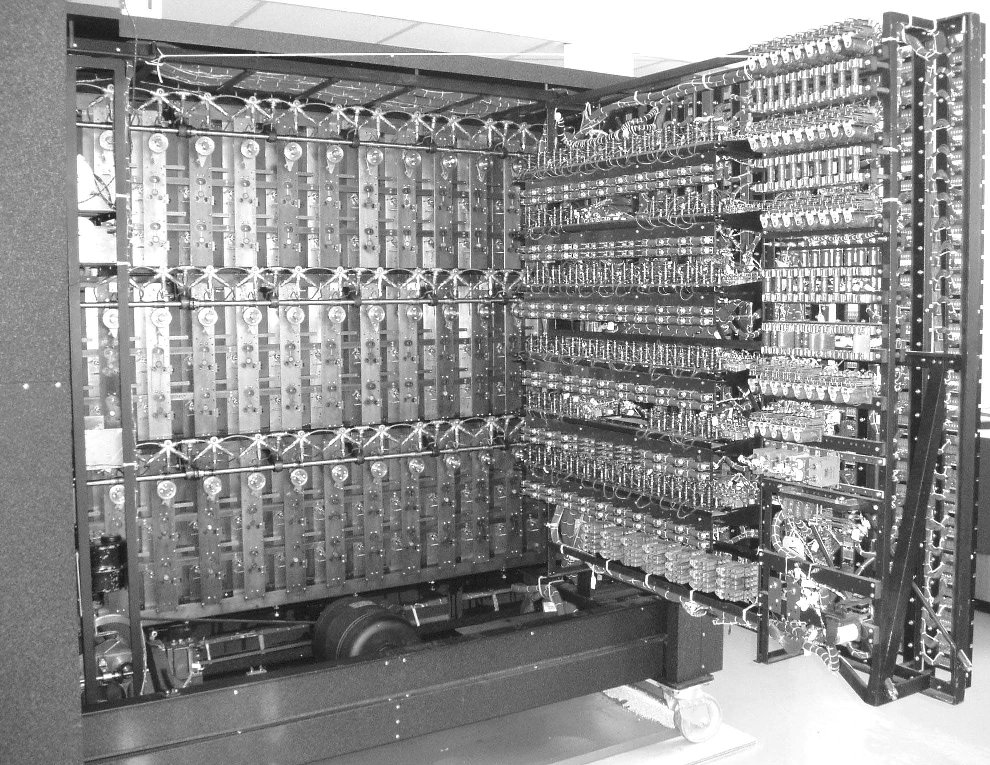

The photo above shows the rear of the machine open: the six relay shelves, the final area to be completed, can be seen fitted.

I should also add that all the menu cables have now been made and with luck a full set of plugboards that act as 'Uncle Walters' will be wired up shortly. This will allow us to run three banks of drums at the same time.

Now that we are entering the commissioning phase we feel less able to estimate timescales. However we have no reason to doubt that we will be able to run real jobs towards the end of the year. All the mechanical timing is complete and after a period of static electrical testing we will quickly move to the dynamic setting of the fast drums. We have recently been donated advanced test gear which should make this task very easy. We will also be testing the 104 sense circuits in situ using special test gear that we have cobbled together.

Our Web site is at https://www.bombe.org.uk/.

Len Hewitt & Peter Holland

Pegasus continues to run with very few problems and visitors to the museum have been able to see it operating at fortnightly intervals. The refrigeration equipment has now been serviced and the refrigerant topped up. We are currently working on the paper tape equipment: we hope to restore the Trend tape punch to working order with a replacement motor, but this does require modifications to fit it into the existing equipment. We are also trying to use a Midlectron type M22 paper tape reader/punch and we would be grateful if anyone having any information on this equipment would get in touch with us.

Contact Peter Holland at peterholland@care4free.net.


The Society has its own Web site, located at www.bcs.org.uk/sg/ccs. It contains electronic copies of all past issues of Resurrection, in both HTML and PDF formats, which can be downloaded for printing. We also have an FTP site at ftp.cs.man.ac.uk/pub/CCS-Archive, where there is other material for downloading including simulators for historic machines. Please note that this latter URL is case-sensitive.


In the beginning, everyone in computing knew all the computing there was. The trouble was, they didn't know a great deal, and what they did know was empirical. So the challenge facing the academic community was to abstract a set of universal principles from this experience. The author gives his personal view of the development of computer science education.

In 1950 the official view of the whole business was: "Computers? That's just a technical problem, isn't it? It's just a matter of electronics. That's it. Once you've solved the electronics there's nothing else to do." The fact that you might want some mechanical devices such as fast printers, card readers, tape readers, was totally ignored. "Programming? What's that? It's only a matter of writing things down. That's clerical. There is nothing in there that anyone needs to learn. This is the sort of task that a technical college can deal with if you want to be serious about it. There is certainly nothing of any academic interest."

Max Newman, head of mathematics at Manchester at the time and a participant in the development of Colossus at Bletchley, was one who said there was no research to be done in computing. The fact that Alan Turing was one of his staff at Manchester was ignored.

By 1952, people had realised that it wasn't quite as trivial as they had thought. By then people were getting PhDs, usually disguised. David Wheeler, who died recently, Stanley Gill, the former BCS President, who regrettably died about 30 years ago, and John Bennett, who I think is still alive in Australia, all got PhDs in about 1951/52, but they all got them as though they were doing mathematics.

There is a distinction between education and training. Training, as far as I'm concerned, is about techniques for solving today's problems: this is how you do it. Education is deeper, helping you to understand and to analyse, so that you can help with tomorrow's problems. Both are vital. If you don't know how to solve tomorrow's problems, knowing how to deal with today's isn't going to help you at all.

A computer science course in a respectable university today includes around 20 compulsory elements, and students have the choice of a few others. All the compulsory items are the invention and the discovery of the last 40 years. None of it was known in 1950: then computer science wasn't a subject at all.

What did we know in 1950? We knew about numerical methods. Few mathematicians were taught about it as undergraduates, but as soon as you got involved in research, say at De Havillands or the National Physical Laboratory, you got to know about numerical methods.

There was machine code programming for a very few machines. You might have learned how to program Edsac or Deuce or the Pilot Ace. You probably knew a little bit about binary arithmetic. You learned a bit about Boolean algebra, and could show how it helped the design of gates for some of the simple electronic operations. There was theory of Turing machines, which had been around since 1936-37. The number of people who knew all this with any degree of competence was very few - I think the fingers of one hand would be more than adequate to count them.
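As a small illustration of how Boolean algebra maps onto gates, here is a one-bit full adder written purely in terms of AND, OR and exclusive-OR, chained into a ripple-carry adder. It is a textbook circuit shown as a sketch; it is not taken from the design of any of the machines named above.

```python
def and_gate(a, b):
    return a & b


def or_gate(a, b):
    return a | b


def xor_gate(a, b):
    return a ^ b


def full_adder(a, b, carry_in):
    """Sum bit = a XOR b XOR carry_in; carry out = a.b + carry_in.(a XOR b)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(carry_in, partial))
    return sum_bit, carry_out


def add_binary(x, y, width=8):
    """Add two unsigned integers one bit at a time, least significant first."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result  # any carry out of the top bit is discarded


print(add_binary(0b00101101, 0b00010111))  # 45 + 23 = 68
```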

I was exposed to some of this as a postgraduate student in about 1951. I still have the notes, though I don't think I understood a word of what the man said. We had no commercial experience at all. Some of us had heard about Leo, but we didn't actually know anything about it.

There were very few computers then. That was the time when three machines were thought to be enough for the whole country. A few years earlier IBM decided not to join the computer market, because the total world market would be for about 10 computers, of which they couldn't possibly sell more than five. Three for the UK was perhaps an overestimate - in England we could have had just one, in London, and the Scots would probably have wanted one as well. As there was already one being built in Cambridge, and another in Manchester, and NPL was building one, and Andrew Booth was building one, the country was over-supplied!

A digital wristwatch today has got more computing power than all those machines put together, and it costs about £10. I don't think anyone ever actually counted the cost of those early computers.

Many of the machines had serial storage, which was hard to work with. It led to concepts such as 'optimum programming'. 'Optimum' sounds as though it's something good, but it wasn't fun to do.
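The cost of serial storage, and the point of "optimum programming", can be shown with a toy model. This is a sketch under loudly stated assumptions: one circulating line of 32 word positions, time counted in word-times, one word-time to read an instruction, and invented execution times. Placing each instruction in the slot that will be passing the read head just as the previous one finishes removes almost all the waiting.

```python
WORDS_PER_LINE = 32  # assumed number of word positions in one delay line


def run_time(positions, exec_times, n=WORDS_PER_LINE):
    """Word-times needed to obey instructions stored at the given positions."""
    t = 0
    for pos, ex in zip(positions, exec_times):
        wait = (pos - t) % n  # idle until the wanted word comes round
        t += wait + 1 + ex    # wait, read the instruction, then obey it
    return t


def optimum_positions(exec_times, n=WORDS_PER_LINE):
    """Put each instruction in the slot passing the read head when it is needed."""
    positions, t = [], 0
    for ex in exec_times:
        positions.append(t % n)
        t += 1 + ex
    return positions


exec_times = [2, 1, 3, 2, 4, 1]        # hypothetical execution times
naive = list(range(len(exec_times)))   # instructions in consecutive slots
best = optimum_positions(exec_times)   # [0, 3, 5, 9, 12, 17]
print(run_time(naive, exec_times), run_time(best, exec_times))  # 167 19
```

A real optimiser also had to avoid slots already occupied by other instructions or data, which is where much of the tedium lay.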

There was backing store, though the first few machines I worked on didn't have any. A machine with a thousand words of store was a big machine! Pilot Ace had about 384 words; Deuce had 402; Edsac had just over 500. They were very, very small amounts of store. Where there was backing store, the problem was that a lot of your time was taken up with moving stuff from the backing store to where you could use it. You spent all your time with the mechanics of the problem rather than actually doing the arithmetic.

As far as programming was concerned, there were subroutine libraries. There was no recursion. You had to incorporate everything yourself, by copying bits of tape or cards. Assemblers came a few years later. There was no real notation (flow diagrams weren't much of a notation). There wasn't anything that you could work through and say: this is how to get things right.

The hardware details would obscure the algorithm. On a machine like Deuce you could only add in two places, so to perform calculations you had to move numbers to those places. If you were going to multiply two numbers, they again had to be in particular places. If you were going to do logical operations they had to be in particular places. You spent all your time shuffling data rather than actually doing something with it.

There were no high level languages. There were no consistent program testing techniques. I think we all developed some, such as putting chads back into a card so that they would stop at the right place. (Voters still do this in Florida, don't they!)

There was nearly a total absence of tools. One of my friends says that when he was learning Edsac in 1953 they did have some post-mortem routines; he doesn't say whether these were for the programmer or the program! You could stop the machine and move to single-shot operation. On a machine which worked at about a thousand instructions per second this was feasible. If the machine worked at a million instructions per second you didn't get very far in the program.

So, in summary, there was a total lack of notation, a general lack of experience, and very limited hardware: no floating-point arithmetic, tiny amounts of store, and initially no backing store. Something like the Elliott 803, which came later than the period I'm talking about, was fantastic: it had 8000 words of store at one level! You could then add backing store (at an astronomical price) on magnetic film.

Let's have a look at computing as a subject. We're talking about the mid-fifties, when people started setting up departments in universities. I quote one of my friends, on the occasion of the fortieth anniversary celebrations of the setting up of the computing laboratory in Newcastle: "Of course, at that time we all knew all the computing there was." Which we probably did, and we all knew each other as well. The trouble was we didn't actually know a great deal. There wasn't a great deal to know.

Because there were more people around, all writing programs, there was a growing body of experience. But nobody had had a chance to stand back and analyse this, and distil it into principles. There was no abstraction. But there was this growing body of experience - what I call the critical mass effect. You had enough people to talk to.

What do I mean by abstraction? The problem is that humans aren't very good at dealing with detail. In that sense a product such as an assembler was a good step forward. You gave names to variables rather than specifying where they were, and you let the machine decide where to put them. If you wanted to change things, well and good: provided you could spell the name consistently you would always get the same thing. When you moved bits of programs around, because you wanted to insert some extra instructions, you had to amend the addresses in jumps. Someone at Cambridge - David Wheeler, I suspect - had the idea of relative addressing: specifying a location by how far away it is from the current instruction. That allowed you to move a body of code without having to change it.
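Both ideas in this paragraph, symbolic names resolved by the machine and relative addressing, can be sketched with a toy two-pass assembler. The instruction names and format are hypothetical and belong to no real machine. With absolute addressing the assembled code changes when the load address changes; with relative addressing it does not.

```python
def assemble(program, base, relative=False):
    """Toy two-pass assembler for a list of (label, op, operand) triples."""
    # Pass 1: give every label an address, counting from the load address.
    symbols = {}
    for offset, (label, _op, _operand) in enumerate(program):
        if label:
            symbols[label] = base + offset

    # Pass 2: replace symbolic operands by numbers.
    code = []
    for offset, (_label, op, operand) in enumerate(program):
        if operand in symbols:
            target = symbols[operand]
            if relative:
                target -= base + offset  # distance from this instruction
            code.append((op, target))
        else:
            code.append((op, operand))  # literal operand, left unchanged
    return code


program = [
    ("loop", "ADD", "total"),
    (None, "SUB", "one"),
    (None, "JUMP_IF_POSITIVE", "loop"),
    (None, "STOP", None),
    ("total", "DATA", 0),
    ("one", "DATA", 1),
]

print(assemble(program, 100)[2])                 # ('JUMP_IF_POSITIVE', 100)
print(assemble(program, 200)[2])                 # ('JUMP_IF_POSITIVE', 200)
print(assemble(program, 100, relative=True)[2])  # ('JUMP_IF_POSITIVE', -2)
```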

People then developed the ideas of lists, stacks, and pointers. Pointers are very useful: you can move (or apparently move) data around by just changing the pointers - it's over here, or over there. You didn't have to move the data, you just said where it was.
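Here is a minimal sketch of moving data "by just changing the pointers". Records sit in fixed slots of a pretend store (the slot numbers are arbitrary) and each carries the slot number of the next record; moving a record to the front of the list alters two pointers and copies no data.

```python
def walk(store, head):
    """Follow the pointers from `head`, collecting each record's data."""
    items, slot = [], head
    while slot is not None:
        data, slot = store[slot]
        items.append(data)
    return items


def move_to_front(store, head, slot):
    """Relink the record in `slot` at the head of the list; return the new head."""
    if slot == head:
        return head
    prev = head
    while store[prev][1] != slot:  # find the record that points at `slot`
        prev = store[prev][1]
    store[prev][1] = store[slot][1]  # unlink it...
    store[slot][1] = head            # ...and point it at the old head
    return slot


# slot number -> [data, slot number of the next record, or None at the end]
store = {10: ["Edsac", 12], 11: ["Pegasus", None], 12: ["Deuce", 11]}
head = 10
print(walk(store, head))  # ['Edsac', 'Deuce', 'Pegasus']
head = move_to_front(store, head, 11)
print(walk(store, head))  # ['Pegasus', 'Edsac', 'Deuce']
```

The records never leave their slots; only the second element of two entries changes.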

I shall provide two other examples of abstraction. Strachey cleverly noticed that what people liked to write was effectively three-address code. There were two variables, a and b, some function linking them, and the result of the function, c, which went to some other place. Machines like Mosaic were constructed on this basis.

Strachey noticed that very frequently one of a and b was the same as c, and also that one of a and b went over a fairly wide range of addresses while the other one didn't. You would go through a whole list of things when you were going to add them up. This observation led to multi-accumulator machines such as Pegasus.
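Strachey's observation can be made concrete by summing a short list in both styles on a toy interpreter. The order names, the eight accumulators and the field widths (13 bits for a store address, 3 bits to name an accumulator) are assumptions for illustration, not the formats of Mosaic or Pegasus. In three-address form the running total's address is written out twice in every order; in accumulator form the total lives in an accumulator that needs only a short field to name it.

```python
ADDRESS_BITS = 13     # assumed width of a store-address field
ACCUMULATOR_BITS = 3  # enough to name one of eight accumulators


def run(program, memory, accumulators=8):
    """Obey a list of orders of the two hypothetical kinds."""
    acc = [0] * accumulators
    for order in program:
        op = order[0]
        if op == "ADD3":     # memory[c] = memory[a] + memory[b]
            _, a, b, c = order
            memory[c] = memory[a] + memory[b]
        elif op == "ADD":    # acc[x] += memory[n]
            _, n, x = order
            acc[x] += memory[n]
        elif op == "STORE":  # memory[n] = acc[x]
            _, n, x = order
            memory[n] = acc[x]
        else:
            raise ValueError(f"unknown order {op!r}")
    return memory


def address_field_bits(program):
    """Bits spent on operand fields in the whole program."""
    return sum(3 * ADDRESS_BITS if order[0] == "ADD3"
               else ADDRESS_BITS + ACCUMULATOR_BITS
               for order in program)


data = [3, 1, 4, 1, 5] + [0] * 11  # the list to be summed is in 0..4
three_address = [("ADD3", 0, 1, 10)] + [("ADD3", 10, i, 10) for i in range(2, 5)]
accumulator = [("ADD", i, 1) for i in range(5)] + [("STORE", 10, 1)]

print(run(three_address, data.copy())[10], run(accumulator, data.copy())[10])  # 14 14
print(address_field_bits(three_address), address_field_bits(accumulator))     # 156 96
```

The accumulator version obeys more orders, but each is much shorter, and only the operand that sweeps through the list needs a full store address.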

The other classical example comes from Dijkstra's famous article "GOTO statement considered harmful". He analysed why people made mistakes in programs, and concluded it was because a language like Fortran allowed a great deal of indiscipline. You could jump into the middle of a loop or a block of program without being sure that all the necessary conditions were fully satisfied. This line of thought led to structured programming.

The first computer science courses were in 1948, when Manchester University electronics students were getting lectures and exams on digital techniques even before the first machine was working. That was Tom Kilburn talking about what he was doing in the research lab each week. It must have been a very exciting time.

Cambridge had by 1953 a diploma course in numerical analysis and computing. There was a lot of numerical analysis and there wasn't a great deal of computing. London was running a diploma course in 1956, based at Northampton Poly. It was given by people like Jim Wilkinson, Ted Newman, Frank Olver and others. I remember going on that course.

In 1957-58 there was a fantastic change. The University Grants Committee agreed that about half a dozen computers should be installed in universities from Scotland downwards, mostly in places that already had an engineering faculty. So Southampton got one although it was one of the smallest universities at the time.

The Committee paid for some academic staff, which was important. They weren't actually appointed to do research or teaching, but to run computing services, which involved providing assistance and advice to the mainstream academic staff. They were appointed as academic staff to ensure that they would have enough knowledge of the problems that physicists, chemists and others had.

In 1958 another important change was the arrival of things like autocodes and matrix schemes, so you were getting away from having to do things in machine code.

By 1960 several universities were running postgraduate diploma courses, and we were getting around to convincing colleagues in other departments that we could teach their undergraduates. We taught them a bit about numerical analysis and then slipped in a bit about programming.

Universities are not necessarily places of enlightenment. Read "The Two and a Half Pillars of Wisdom" by Alexander McCall Smith. It's a brilliant book. The professors of philology that he writes about - every one of us who works in universities knows exactly which members of our senior common rooms are like them!

The trouble with setting up a course is this. A university would have an agreed target for the number of students. So if you were going to have a new course, who was going to give up some student numbers? Where would the money come from? Who would give up space?

At a university, status is remarkably important. For instance, at Newcastle we were to sell computer time to local industry. Ewan Page was only a Reader, not a Professor, but he had a bigger room than some Professors, and with a better carpet, because the local industrialists would require the sort of surroundings that they were used to in their offices. I was on the floor above; I just had linoleum. You had to make sure that the status was right.

By 1965 London University had introduced what was called a 'course unit system', and this allowed you to slip in single courses (a course in numerical methods or in programming or whatever) without changing a degree course. Imperial and Queen Mary Colleges made use of that capability.

The first actual BSc computer science courses started in 1964 in North Staffordshire and in 1965 at Manchester. The North Staffordshire course was a four-year course, with one year out in industry, so its first graduates came out in 1968.

By 1970, several universities were running courses in various computing subjects, and the postgraduate diplomas had almost all become MScs. Cambridge was an exception; that was still a diploma course.

The Polytechnics didn't have the same internal jealousies, so it was easier to introduce courses. The Council for National Academic Awards did a lot of work there.

The BCS courses didn't come in until after 1970. Having a BCS was very helpful; there were Branches all over the country, and a lot of them were based around a university. For instance we at Newcastle had in Pegasus the first and largest computer in the North East, and we set up local branches. By talking to local people from the Coal Board and the NHS and so on, we helped them and they helped us.

In 1961 I was partly involved with the School Mathematics Project. They had a big conference to launch it in Southampton - 160 schoolteachers - and I taught Pegasus autocode to all of them. They then returned to their schools and introduced computing to their curricula.

Editor's note: This is an edited transcript of the talk given by the author to the Society at the Building the Profession seminar at the Science Museum on 24 March 2005. The Editor acknowledges with gratitude the work of Hamish Carmichael in creating the transcript.

Professor Samet can be contacted at paul.samet@btinternet.com.


One of the UK's leading software pioneers tells his personal story of the early days of computing.

As a schoolboy, I often used to visit the Science Museum in London before the war and became enthused by their displays of calculating machines. I first came across computing in 1941, during the War, when there were computers attached to the Chain Home (CH) and Chain Home Low (CHL) ground radar stations. These were built in the late 1930s to guard against air attacks.

The CH stations had huge tower masts, which sent out pulses over a wide area and then listened for the echoes coming back from aircraft. They had directional antennas to sense the direction of the echo, and they measured the range by timing the delay between the pulse going out and the echo coming back. The operator had a cathode ray tube display: the spot travelled from left to right across the tube and was deflected upwards by the echo. He marked the echo by moving a pointer across the screen, and its position was read digitally.
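The range measurement rests on simple arithmetic: the pulse travels out and back at the speed of light, so the range is half the delay multiplied by that speed. The sketch below also converts the pointer's position on the trace into a delay, assuming a linear sweep of known duration; the 2,000-microsecond sweep is a hypothetical figure, not a CH parameter.

```python
SPEED_OF_LIGHT = 299_792_458  # metres per second; radio waves in air travel at nearly this


def echo_range_km(delay_us):
    """Range in km from the round-trip echo delay in microseconds."""
    return SPEED_OF_LIGHT * delay_us * 1e-6 / 2 / 1000  # halve: out and back


def pointer_to_range_km(fraction_across, sweep_us=2000):
    """Range for a pointer set a given fraction of the way across a linear trace."""
    return echo_range_km(fraction_across * sweep_us)  # sweep_us is hypothetical


print(round(echo_range_km(1000), 1))       # 149.9 (echo after 1 ms)
print(round(pointer_to_range_km(0.5), 1))  # 149.9 (halfway along a 2 ms sweep)
```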

On CH stations the operator turned a goniometer loop between fixed loops to locate the minimum signal. The CHL stations used shorter wavelengths and narrower beams so he could get an accurate direction by turning the antenna itself for the maximum signal. The bearing was then read by a digital attachment to the goniometer or the antenna shaft.

You pressed a button to start up a Type Q Calculator, built out of Post Office type 3000 electromechanical relays. This converted the range and bearing into a standard Ordnance Survey grid reference, displayed for the operator to read into his telephone to a WAAF in Fighter Command Headquarters in Stanmore, where the Group Captain would order up a flight of aircraft to attack the intruders. This system made a huge contribution to winning the war. Computing definitely came into it at a very early stage.
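The Type Q Calculator did its work with relays, and its internal method is not described here, but the geometry it had to embody is a polar-to-rectangular conversion. A minimal sketch on a flat grid in metres, ignoring the curvature of the earth and the aircraft's height; the station's coordinates are hypothetical, and a real grid reference would then be written in the grid's own lettered format.

```python
import math


def to_grid(station_e, station_n, range_m, bearing_deg):
    """Convert range and bearing (clockwise from grid north) to easting, northing."""
    theta = math.radians(bearing_deg)
    easting = station_e + range_m * math.sin(theta)
    northing = station_n + range_m * math.cos(theta)
    return round(easting), round(northing)


STATION = (600_000, 150_000)  # hypothetical station position in metres

print(to_grid(*STATION, 100_000, 90))  # (700000, 150000): due east
print(to_grid(*STATION, 50_000, 45))   # (635355, 185355): north-east
```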

The radar itself was very interesting, particularly to me because my hobby was building radios - I was a radio 'ham' in 1938. When I went up to Cambridge in 1940 I had one year of mathematics before they called me up into the RAF, who discovered my electronics background and put me in to cope with airborne radar technology.

In 1946 I went back to Cambridge and attended a course that was run by Maurice Wilkes on numerical analysis and the computing that was around in those days. They were designing and building Edsac which worked about six months after the Manchester machine. I was in and out of the maths lab all the time, finding out what they were doing and how they were doing it. It was interesting to me because I knew from my radar experience the electronic circuits that they were using, and this was a fascinating application of them.

I moved into computing soon after I joined Elliott Brothers in Borehamwood in 1951, along with my new wife Ruth. I joined Hugh Ross to build an electronic analogue computer. I soon moved over to the digital side, where Ruth was already working, and a small digital computer (Nicholas) was being built. There were other computers that did hush-hush jobs that none of us were supposed to know anything about.

The small computer was called Nicholas because it had a memory made of nickel delay lines. You sent a pulse of current through a coil around one end of the wire, and the magnetostrictive effect made the wire in the coil shrink by a tiny amount, and sent a compression wave down the wire. After about three milliseconds it got to the other end, where another coil (and a permanent magnet) detected the sound pulse, amplified and clocked it, and fed it back in at the beginning. A single length of wire held 1024 binary digits in the form of pulses or gaps, which were read as 32 words of 32 bits each. Thirty two of these wires were coiled into open loops to make up the 1024-word computer memory.
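The figures given for Nicholas fit together as follows. This sketch takes the "about three milliseconds" as exact and assumes that consecutive addresses fill one wire before moving to the next; the real address layout may well have differed.

```python
WIRES = 32            # nickel delay lines in the store
WORDS_PER_WIRE = 32
BITS_PER_WORD = 32
CIRCULATION_S = 3e-3  # "about three milliseconds", taken as exact here


def locate(address):
    """Map a word address 0..1023 to (wire, position within that wire)."""
    if not 0 <= address < WIRES * WORDS_PER_WIRE:
        raise ValueError("address outside the 1024-word store")
    return divmod(address, WORDS_PER_WIRE)  # assumed layout


bits_per_wire = WORDS_PER_WIRE * BITS_PER_WORD       # 1024
bit_period_us = CIRCULATION_S / bits_per_wire * 1e6  # about 2.93 microseconds
word_time_us = bit_period_us * BITS_PER_WORD         # about 94 microseconds
average_wait_ms = CIRCULATION_S / 2 * 1e3            # half a revolution, about 1.5 ms

print(locate(100))  # (3, 4)
```

At roughly 94 microseconds per word-time, waiting an average of half a revolution for a word costs about sixteen word-times.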

In 1952 Ruth wrote the first 'initial orders' or assembler for Nicholas, made up of about 400 commands. It helped users to write programs and had preset parameters, which allowed blocks of code to be moved. David Wheeler's Edsac Initial Orders were influential.
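Preset parameters let a library routine be written once, with addresses counted from its own first order, and then placed anywhere in the store. Below is a minimal sketch of that general idea; the marking scheme and order names are hypothetical, and this is not the encoding used in Ruth's initial orders for Nicholas or in Wheeler's for Edsac.

```python
def load(routine, store, start):
    """Copy a routine into `store` from `start`, adjusting marked addresses.

    Each order is (operation, address, relocatable). Relocatable addresses are
    counted from the routine's first order; the loader adds the preset
    parameter (here simply the load address) to them as it reads them in.
    """
    for i, (op, address, relocatable) in enumerate(routine):
        store[start + i] = (op, address + start if relocatable else address)
    return start + len(routine)  # first free location for the next routine


routine = [
    ("LOAD", 3, True),   # the constant held in this routine's fourth word
    ("ADD", 50, False),  # a fixed location outside the routine
    ("JUMP", 0, True),   # back to the routine's first order
    ("DATA", 7, False),
]

store = {}
next_free = load(routine, store, 100)
load(routine, store, next_free)  # a second copy placed straight after the first
print(store[100], store[104])    # ('LOAD', 103) ('LOAD', 107)
```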

Nicholas was built in a wooden frame. It didn't have floating point, or a multiplier, but we managed to simulate those by program. Its logic was designed by Charles Owen with only 80 gates using 'packaged' technology, which had been invented by him and Bill Elliott. It meant that if you wanted to have some particular function in the hardware you made it up by interconnecting a few pre-designed packages that were largely gates - AND-ing and OR-ing and things of that kind. Nicholas was very successful as a test-bed and for providing a computing service for several years. (In 1953 Ruth and I named our eldest son Nicholas.)
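Simulating a missing multiplier by program normally means shift-and-add: examine the multiplier one bit at a time and add in a shifted copy of the multiplicand wherever that bit is 1. This is a minimal sketch for unsigned operands; the 32-bit word length matches the description of the store above, but the routine is an illustration, not Nicholas code.

```python
def multiply(a, b, word_bits=32):
    """Unsigned shift-and-add multiplication using only add, shift and test."""
    if not (0 <= a < 1 << word_bits and 0 <= b < 1 << word_bits):
        raise ValueError("operands must fit in one unsigned word")
    product = 0
    for _ in range(word_bits):  # one step per bit of the multiplier
        if b & 1:               # lowest remaining multiplier bit set?
            product += a        # ...then add the shifted multiplicand
        a <<= 1
        b >>= 1
    return product              # may need two words to hold


print(multiply(1234, 5678))  # 7006652
```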

In the early days many computers were designed by engineers, who sometimes tended not to look after the programmers in a very friendly way. The first page of the programming manual for the Manchester machine displayed the international teleprinter code, and you had to memorise it before you could write a single command. The Cambridge people had improved on that with an assembly process in the Initial Orders, and they had fairly straightforward commands. You could understand very easily what they were, the addresses were written in decimal rather than binary, and you had preset parameters and things like that. We roughly imitated that on Nicholas, built a couple of years later. We had better hardware. Edsac had mercury delay lines, which were fantastically expensive: nickel delay lines were much cheaper, and we had twice the memory that Edsac had.

Nicholas was relatively easy to program, and we developed things for it including floating-point routines. It was very important to do things in floating point; otherwise you had to scale everything to avoid overflow, which was a very unfortunate thing to happen. The result was that we built a library of routines such as floating-point, and later an interpreter for floating-point. The following year we also had a matrix interpretive scheme so that we could do operations on matrices, though only modest-size ones, because the store was only 1024 words.
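Here is a minimal sketch of what a floating-point routine does, assuming a 24-bit integer mantissa and a separate binary exponent; both choices are illustrative, not the Nicholas or Pegasus formats. Each number is held as mantissa × 2**exponent and renormalised after every operation, so the programmer no longer has to choose scale factors to keep intermediate results from overflowing.

```python
import math

MANTISSA_BITS = 24  # assumed precision


def normalise(m, e):
    """Shift m so 2**(MANTISSA_BITS-1) <= |m| < 2**MANTISSA_BITS; truncates."""
    if m == 0:
        return 0, 0
    sign, m = (-1 if m < 0 else 1), abs(m)
    while m >= 1 << MANTISSA_BITS:
        m >>= 1  # drop the lowest bit
        e += 1
    while m < 1 << (MANTISSA_BITS - 1):
        m <<= 1
        e -= 1
    return sign * m, e


def from_float(v):
    m, e = math.frexp(v)  # v == m * 2**e with 0.5 <= |m| < 1
    return normalise(int(m * (1 << MANTISSA_BITS)), e - MANTISSA_BITS)


def to_float(x):
    return math.ldexp(*x)


def fmul(x, y):
    return normalise(x[0] * y[0], x[1] + y[1])


def fadd(x, y):
    e = min(x[1], y[1])
    # Align exactly by shifting the operand with the larger exponent left.
    return normalise((x[0] << (x[1] - e)) + (y[0] << (y[1] - e)), e)


big, small = from_float(3.0e6), from_float(2.5e-6)
print(to_float(fmul(big, small)))                          # close to 7.5
print(to_float(fadd(from_float(1.5), from_float(-0.25))))  # 1.25
```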

I remember Peter Hunt visiting Elliott Brothers from de Havillands in 1953. In July of that year I wrote a Programming Manual, which included an outline of the subroutine library. I also showed Peter Hunt how to program Nicholas. He programmed and executed the flutter calculations for the Comet 1, the world's first jet airliner. He used the floating-point matrix scheme, limited to 10-by-10 size matrices. (Later I flew to Australia in a Comet 1, before they discovered the metal-fatigue problem that led to the crashes.)
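A matrix interpretive scheme of this kind read a list of coded orders, each naming a matrix operation and its operands, and called the appropriate routine for each. This is a minimal sketch: the order names are hypothetical, Python lists stand in for the store, and the 10-by-10 limit quoted above is simply enforced as a check.

```python
MAX_SIZE = 10  # the 10-by-10 limit mentioned in the text


def check(matrix):
    if len(matrix) > MAX_SIZE or any(len(row) > MAX_SIZE for row in matrix):
        raise ValueError("matrix larger than the scheme allows")
    return matrix


def interpret(orders, matrices):
    """Obey orders of the form (operation, operand names..., result name)."""
    for op, *names in orders:
        if op == "ADD":
            a, b, dest = names
            value = [[x + y for x, y in zip(ra, rb)]
                     for ra, rb in zip(matrices[a], matrices[b])]
        elif op == "MULTIPLY":
            a, b, dest = names
            columns = list(zip(*matrices[b]))
            value = [[sum(x * y for x, y in zip(row, col)) for col in columns]
                     for row in matrices[a]]
        elif op == "TRANSPOSE":
            a, dest = names
            value = [list(col) for col in zip(*matrices[a])]
        else:
            raise ValueError(f"unknown order {op!r}")
        matrices[dest] = check(value)
    return matrices


m = interpret(
    [("MULTIPLY", "A", "B", "C"), ("ADD", "A", "C", "D"), ("TRANSPOSE", "D", "E")],
    {"A": [[1, 2], [3, 4]], "B": [[0, 1], [1, 0]]},
)
print(m["C"], m["D"], m["E"])  # [[2, 1], [4, 3]] [[3, 3], [7, 7]] [[3, 7], [3, 7]]
```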

In 1954 a lot of Elliott people moved to Ferranti, where Bernard Swann was building up a loose organisation for sales and programming. The atmosphere was very good, and I was taken on to help out with Pegasus, particularly the programming. I knew Christopher Strachey very well, and Pegasus was his creation for the NRDC. He had huge talents, particularly in programming.

The engineering side in Ferranti included Bill Elliott and Charles Owen. About 300 packaged circuits made up all the Pegasus logic. There were only about five different types of logic package so you could apply mass-production techniques to make them cheaply, and we used them also to build other computers.

We wrote floating-point routines which were working when the first Pegasus ran, in 1955, and we could manage about 50-by-50 size matrices by 1957. There were lots of these facilities and over the years a comprehensive library of routines was built up for and by the owners of the 40 Pegasus machines.

We also had to train programmers. After three years with Elliott Brothers I had joined Ferranti in 21 Portland Place (a very grand building with a wonderful fireplace which had to be boxed in to protect it from the earthy hands of computerites), and we ran programming courses there. Peter Hunt had joined me, and we both delivered the first programming course on Pegasus. We ran a large number of courses for staff and customers.

We had of course to write the programming manual. The original manual had a pretty front cover and then masses of typescript, and was dated September 1955. Peter Hunt and I wrote alternate chapters.

In 1958, when I was writing the ultimate Pegasus Programming Manual, I had programmed the calculation of π to high precision by two independent methods, to provide a check. When I had reached and checked 6000 places I was asked by Bernard Swann to attend a conference on Mathematics in Industry organised by John Hammersley of Oxford for maths teachers in public schools. There wasn't much time left, so I took only one of the calculations forward to 10,000 decimals. I attended as one of the lecturers, and I caused a stir by showing this latest result of the calculation - Hammersley grabbed it from me, and published it as a multi-page footnote in the proceedings of the conference.

When I got back to Portland Place I continued with the check calculation, and it differed! The two methods produced different answers after about 8000 places - probably a hardware error. So, regrettably, Hammersley's footnote was incorrect after that point.
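The double-check is easy to reproduce in outline with integer arithmetic. The text does not say which two methods were used, so this sketch assumes two well-known arctangent formulas: Machin's, π = 16 arctan(1/5) - 4 arctan(1/239), and Hutton's, π = 8 arctan(1/3) + 4 arctan(1/7). Each series is summed in scaled integers with a few guard digits, and comparing the digit strings shows where two runs first part company.

```python
GUARD = 10  # extra digits to absorb rounding in the integer divisions


def arctan_inv(x, scale):
    """arctan(1/x) * scale, from 1/x - 1/(3x^3) + 1/(5x^5) - ..."""
    total = term = scale // x
    x_squared, n, sign = x * x, 1, 1
    while term:
        term //= x_squared
        n += 2
        sign = -sign
        total += sign * (term // n)
    return total


def pi_machin(digits):
    scale = 10 ** (digits + GUARD)
    return (16 * arctan_inv(5, scale) - 4 * arctan_inv(239, scale)) // 10 ** GUARD


def pi_hutton(digits):
    scale = 10 ** (digits + GUARD)
    return (8 * arctan_inv(3, scale) + 4 * arctan_inv(7, scale)) // 10 ** GUARD


def first_difference(a, b):
    """Index of the first differing digit, or None if the strings agree."""
    for i, (da, db) in enumerate(zip(str(a), str(b))):
        if da != db:
            return i
    return None


a, b = pi_machin(1000), pi_hutton(1000)
print(str(a)[:12])  # 314159265358
# Ignore the last few digits, which can legitimately differ through truncation.
print(first_difference(str(a)[:-5], str(b)[:-5]))  # None: the two runs agree
```

A hardware fault during either run would show up as a non-None index, which is essentially what the check calculation revealed at about 8000 places.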

Bernard Swann also asked me to attend the International Congress of Mathematicians in Edinburgh, for which I managed to compute on Pegasus the value of π to 10,018 correct decimal places - a world record. The previous record was produced on a big IBM machine in Paris, which got to 3000 places. So I got three times as far on a much smaller computer.

IBM got to hear of this, and continued their calculation in Paris to 16,000 places, six months after I'd achieved 10,000. Nowadays the value of π is known to billions of decimal places. I hung up my print-out at the Edinburgh conference, and a Frenchman wrote on it "Je conteste ce chiffre"! So I wrote a note in reply (also in French) asking him to contact me, but he never did.

I also calculated lots of logarithms, because I was able to use the same program: by changing only one instruction I could make it calculate logarithms instead of π. So I calculated the logarithms of all the primes up to 109 to 1000 decimal places. These calculations took months, but the Portland Place Pegasus ran them while I was writing the official Pegasus Programming Manual.

Pegasus was a pretty useful machine. We sold 40 of them altogether, and as time went on lots of programs became available, so we had a library of subroutines, and many people in the company and on the customer side produced papers. We had a wonderful matrix interpretive scheme, documented in April 1955, that was used a lot for flutter calculations.

That was really what computing was about. We didn't care so much about organising ourselves as about getting jobs done.

Editor's note: This is an edited transcript of the talk given by the author to the Society at the Building the Profession seminar at the Science Museum on 24 March 2005. The Editor acknowledges with gratitude the work of Hamish Carmichael in creating the transcript.

George Felton can be contacted at george@feltonhome.com.

North West Group contact details: Chairman Tom Hinchliffe, Tel: 01663 765040.

Role of the National Computing Centre

David Firnberg

The National Computing Centre was a Government creation with fairly vague objectives, which succeeded in playing a seminal role in the move towards professionalism in the UK computing community. Here a former Director of the Centre describes how the NCC set about its business.

The NCC is a membership organisation, but its members are establishments, not individuals as in the BCS. Each establishment is represented by an individual, but the registered body is the establishment. The membership spans the whole spectrum of computing: users, vendors, service companies, academics, Government and anybody else. Any organisation that has any link to computers can join the NCC.

I want to make a clear distinction between 'professionals' - the people who are professional - and 'professionalism' - the activity of being professional about what one does. I was a bit perplexed about how to define 'profession' until I found this definition in the Oxford Dictionary - the big one - under sub-heading 6a: a vocation in which a professed knowledge of some learning or science is used in its application to the affairs of others.

This is very important because the NCC was always most interested, not in the actual science and technology of computing, but in its use by others. It was concerned with professionalism, rather than professionals. Professionals were the realm of the BCS, and professionalism was very much the realm of the NCC. (I'm not quite sure how that fits in with the current accord which the two have just signed.)

I want to go through aspects of professionalism as seen by the NCC. The NCC was concerned with creating a professional infrastructure within which computing could be applied, with professional skills and their development, with meeting shared professional challenges, and with creating some sort of user voice. At times the NCC itself was viewed as the user voice, but in fact there was no effective user voice, so the NCC had to create one.

Taking the infrastructure first, a problem we perceived in the early days was that there was no professional way to record what you were doing. An early achievement of the NCC was to create documentation standards, and these were used widely throughout the industry.

The next subject (though not in a temporal sense) is interoperability: whenever we polled users about their biggest problem, the answer was a lack of common standards, as is still the case today. So we did a lot of work on standards, and were very much in the forefront of developing OSI (Open Systems Interconnection).

For example, we set up a Conformance Testing Laboratory with testing tools to see whether a product conformed to open systems standards. The findings were applied in Japan, the United States and other places as well as the UK. We were instrumental in setting up a Government committee, the Intercept Committee, which was chaired by a Minister (John Butcher, originally). This was a field where we were very active.

The third area on which I would like to comment is the need for data protection - the protection of individuals' privacy. The NCC was extremely active in this field, as was the BCS for that matter. The Deputy Director of the NCC at the time, Eric Howe, became the first Data Protection Registrar. We did a lot of work there.

Then there was the problem of software security. If users invested a lot of money in software provided by a service company, and the service company went bust, then they would lose any support for their software. We set up an escrow scheme to keep the source code of registered software. If the supplier went out of business, one could apply for the source code and maintain it oneself.

All of that was concerned with creating a professional infrastructure within which computing could be applied to the needs of users.

There was a lot of activity in the academic world developing the skills needed for programming and for the computer science behind the development of computers. We, however, were concerned all the time with the application. We saw that there was a need for systems analysis skills, and set up the NCC Certificate in Systems Analysis and Design. The latest figure I have shows that 30,000 people worldwide hold the NCC Certificate.

At the bottom end of the scale we saw a particular need to give unemployed school-leavers the chance to become computer-literate to a degree, so that they could start out on a basic career in computer support, computer operation and so forth. This led to the Threshold Scheme, a remarkable scheme.

It was very basic computer training, with no required academic standard for entry. But we noted down the academic qualifications of the people who went on the course, and subsequently matched them against their achievements in their careers after they had completed it.

It's interesting that there was no correlation at all between ability in mathematics and achievement on the course, but there was a high correlation between ability in English language and achievement. This was because applying the technology is all about constructing a sentence, constructing a complete thing, rather than getting sums right.

Some 25,000 young people went through the UK Threshold Scheme before Government funding stopped. Then we took it overseas, and something like half a million people worldwide have now been through this course. So it has been a real success.

At the other extreme, we looked at directors, and introduced a Director Appreciation Course to give them a better understanding of computing.

We were aware that users continually faced new challenges. We had the ideal structure for bringing users together to discuss common problems, and for assisting them by bringing in academics and people from the vendor industry. With decimalisation, for example, users could adopt a standard approach from the NCC, a professionally tested and proven approach. We did the same with changes in VAT and, much later, with the "Year 2000" problem.

We introduced what we called the STARTS Programme when real-time computing came into vogue. This was a set of Software Tools for Application to large Real-Time Systems, coupled with the opportunity for users working in the field to get together, develop their own tools and work with tools that were provided for that purpose.

Another initiative was the Impact Programme which provided business perspectives, again allowing users to get together. All of this was to do with helping people who were applying computing to apply a professional approach to solving these challenges.

Finally, it seemed to us that while we didn't regard ourselves as the user voice, there was a need for a user voice, so we created an organisation called the NCUF, the National Computer Users Forum, which I believe no longer exists.

It was an interesting challenge, and I think I may have made a tactical error here. IBM and ICL and one or two of the other big boys wanted this organisation. I said: "All right, we can have a users' association, but it must represent all users." So in the end we had an organisation made up of 20 to 25 different registered bodies, which to some extent reduced its power to act, though it made it much more representative and much more authoritative.

The NCC itself was regarded as a user voice. For example, we represented users on the National Committee for Computer Networks. This was when 'ICT', with the 'C' standing for Communications, was just coming in. I remember that one of the findings of the NCCN was that "there should be a limited experiment in a privatised network". After that Mercury came into being, and we all know what's happened since then.

So that is the story of the NCC helping to introduce professionalism into the application of computing throughout the community.

Editor's note: This is an edited transcript of the talk given by the author to the Society at the Building the Profession seminar at the Science Museum on 24 March 2005. The Editor acknowledges with gratitude the work of Hamish Carmichael in creating the transcript.

Editorial contact details: Readers wishing to contact the Editor may do so by email to wk@nenticknap.fsnet.co.uk.

Creating a Sane Professional Body

John Ivinson

The steady progress towards the establishment of computing as a profession was accelerated by the organisation of the Datafair conferences, and culminated in the attainment of the Royal Charter. The author provides an insight into the thinking that went into these initiatives.

My father, John Reginald Ivinson, worked at Vickers Engineering circa 1951 on a Powers Samas EMP Mark B. Some regard that as being some sort of calculator rather than a proper computer.

I remember my father telling me that the two major customers they had were the National Coal Board which used it for miners' payroll, and British Shoe Corporation which bought several to do stock control. They had introduced the concept of putting a punched card into each shoe box, so when the pair of shoes was sold they just returned the punched cards at the end of the day or the end of the week to update their stock positions.

My father, when I asked him how many EMP Bs they sold, said 300-400, but I've no way of knowing whether that is an accurate figure.

My professional career started in 1967, when I left the University of Keele having read American History and Geography, which gave me a very good background for going straight into data processing! One of the reasons why I have the BCS membership number 19460 is that some bright spark had seen the writing on the wall regarding the cut-off date of 31 January 1968: in late 1967, when I'd been with IBM for only about three months, a memo came round to all the staff saying that it was in their interest, and IBM's, to join the British Computer Society.

One of my earliest recollections is of receiving information about Datafair in the post, probably in the Bulletin. Datafair was an idea dreamed up by Ken Smith, who worked for IBM (I met him on a number of occasions) and was very active inside the Society. He had started his career as a field engineer under my father at Vickers Armstrong. After two or three years he was seduced by IBM, which had come into this country and gone wrong, and was effectively doubling the salary of everybody it could find who knew anything about computers. My father was very irritated that he had to start from scratch in building up a field engineering force to service the machines they had been putting out.

Datafair congresses were run by the Society from 1967 to 1977. I was involved with the one in 1975, which was held at the Novotel in Hammersmith, and I remember that the man in charge was Air Commodore Malcolm Jolly. Up until then they had been extremely successful, but I'm led to believe that the last one was not so successful.

This caused a problem for the Society's development, because our policy until then had been that Datafair underpinned the Society's finances, so subscriptions had been allowed to remain at a very low level. When the good times finished, that policy suddenly came home to roost. To sustain the Society at the level it was used to, subscriptions had to go up, and this caused a relatively large number of people to resign because they thought the increase was iniquitous.

In terms of professional development, the Datafair series always seemed to me to represent a very clear-cut policy of developing the profession by involving the manufacturing side and the nascent software houses, and getting the members together for a full programme of presentations from all parts of the industry or profession. It was a major contributor to the development of professionalism in the mid seventies, up to its demise in 1977.

There's a little bit of anecdotal history here, because a number of people have asked me why we went for a Charter round about 1981, and the answer is quite simple. About 1975 Maurice Ashill decided to retire. Council was then charged with setting up a working party to find a suitable replacement. We placed an advert in the Sunday Times, which was the traditional method, and we had nominated a salary range - I can't remember just what it was, but it was certainly in the tens of thousands - and we solicited applications. I sat on the working party; it was about my second year on the BCS Council.

We had about 70 applicants. The working party assessed them all and whittled them down to about a dozen. Then we reached deadlock. We couldn't find someone who we thought was capable of replacing Maurice within the salary scale that we were looking at.

So we went back to Council and said that we were sorry, we couldn't come to a reasonable decision; would Council like to advise us. The decision was delegated to Sandy Douglas, who was then I think the immediate past-President.

Sandy went through the papers and said: "I've identified the bloke that we want, and it's Derek Harding". Somebody said "Hang on a minute! He's outside our salary range: he's earning more than we can afford to pay." Sandy said "Yes, we're going to pay him what he's currently earning. Blow your old range. Let's get on with it." So that's how we got Derek Harding as our new Secretary-General.

Derek came in, and took over the running of the Society on a very efficient basis. He put in place a number of processes and procedures which still exist today. I got to know him quite well, because I was living in London, and was in and out of the Society regularly.

One day he took me out to lunch. This was about 1980 or 1981. We had a very pleasant lunch, round the corner from the offices, and I said: "You seem to be bored". He asked: "What gives you that impression?" I replied: "You know, you've got this place running pretty well, everything's ticking over quite nicely. I suspect you need a new challenge in your employment." He asked "What have you got in mind?" I said: "In your CV you said that you'd taken the Institute of Physics to becoming a chartered body by petitioning the Privy Council, and so you obviously understand that process." He said: "Yes, it was very interesting". So I said: "Well, why not do it for us? You've got the experience. You've got the track record. Why don't we set that up?" He said: "Well, that's an idea."

We then went back to the F and GP Committee and said: "How about it?" I remember David Butler, who was then VP (Technical), said: "I'll support the proposal, provided John chairs the working party."

So I got lumbered in that graceful fashion with chairing the working party, which eventually, with the help of John Southall and Derek Harding and two or three others, drafted the petition. We worked with Christopher Walford, who was very experienced in this particular area and had lots of connections with the Clerks of the Privy Council. Walford later became Lord Mayor of London (in 1994), which tells you the sort of status he had.

We petitioned in 1983 and the Charter was granted in 1984. In my mind that marked the real start of the BCS as a professional body. There was an existing Code of Conduct, but the Charter formally enshrined the whole process of Conduct and Disciplinary Procedures, and we had a much more draconian approach. Until a society of our sort starts kicking people out, it hasn't come of age; that is the mark of a sane professional body. There needs to be a corpus of knowledge against which people can be examined and tested and held accountable.

The next move that occurred in the Society's history was our application for membership of the Engineering Council. I personally regard that as being a distraction. Not being an engineer by background, and not being particularly interested in trying to impress Italians and Germans by calling ourselves CEng, I didn't really understand why we went hell for leather for it, but I think the answer lies in that particular point in time.

Sir John Fairclough, who was then our President, had an interest in promoting our membership: indeed a year later he became Chairman of the Engineering Council. He asked me a very intriguing question. He said: "Why is the Society applying to become a member of the Engineering Council?" I said: "I really don't know. I personally believe that there's another way forward for us." He said: "What's interesting is that if you examine the European structure, you'll find that all the other Engineering Councils in Europe have got a professional computing body inside their Engineering Council. The UK's is the only one that doesn't." And he put forward the proposal that in fact the UK Engineering Council needed us more than the BCS needed the Engineering Council.

What was the alternative? I thought we needed further steps towards a regulated profession, involving recognition of a body of knowledge, a code of conduct and a disciplinary process. I was not arguing that everybody in the computer industry should be a member of the regulated profession, but as far as I was concerned parts of our profession had to get to the point where members could be guillotined or hanged, drawn and quartered for a misdemeanour, and it had to be done in public. Until that occurs I don't believe that we will have achieved our majority.

Editor's note: This is an edited transcript of the talk given by the author to the Society at the Building the Profession seminar at the Science Museum on 24 March 2005. The Editor acknowledges with gratitude the work of Hamish Carmichael in creating the transcript. John Ivinson can be contacted at john@johnivinson.co.uk.

Pictures of the Past

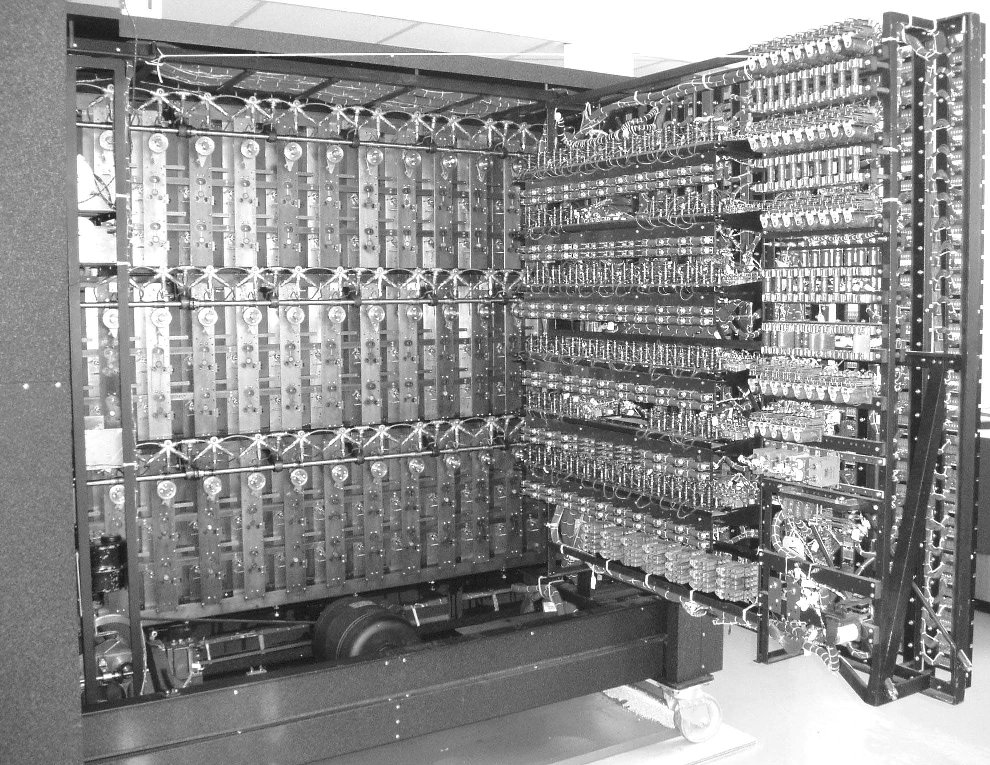

One of the rarer early British computers, the AEI 1010, is the subject of this issue's "Pictures of the Past" feature. The company did not sell many systems, and was eventually taken over by GEC in the mid sixties. These are two images of the AEI 1010 installation at 33 Grosvenor Square in central London, taken, he thinks in 1961, by reader Jeff Kearey, now head of Performance Improvement at Achilles Group. The top photograph shows the Ampex tape units, and the bottom one the AEI 1010 processor cabinet.

Forthcoming Events

Every Tuesday at 1200 and 1400: demonstrations of the replica Small-Scale Experimental Machine at Manchester Museum of Science and Industry.

Weekday afternoons and every weekend: guided tours and exhibitions at Bletchley Park, price £10.00, or £8.00 for children and concessions (prices are raised on some weekends when there are additional attractions). Exhibition of wartime code-breaking equipment and procedures, including the replica Colossus, plus 60-minute tours of the wartime buildings.

Details are subject to change. The programme of future seminars had not been finalised as we went to press: details will be circulated when the arrangements have been completed. Members wishing to attend any meeting are advised to check in the Diary section of the BCS Web site, or in the Events Diary columns of Computing and Computer Weekly, where accurate final details will be published nearer the time. London meetings take place in the Director's Suite of the Science Museum, starting at 1430. North West Group meetings take place in the Conference room at the Manchester Museum of Science and Industry, starting usually at 1730; tea is served from 1700.

Queries about London meetings should be addressed to David Anderson on 0239284 6668, and about Manchester meetings to William Gunn on 01663 764997.

Committee of the Society

[The printed version carries contact details of committee members]

Chairman Dr Roger Johnson FBCS

Vice-Chairman Tony Sale Hon FBCS

Secretary and Chairman, DEC Working Party Kevin Murrell

Email: kevin@ps8.co.uk

Treasurer Dan Hayton

Science Museum representative Tilly Blyth

Museum of Science & Industry in Manchester representative Jenny Wetton

National Archives representative David Glover

Computer Museum at Bletchley Park representative Michelle Moore

Chairman, Elliott 803 Working Party John Sinclair

Chairman, Elliott 401 Working Party Arthur Rowles

Chairman, Pegasus Working Party Len Hewitt MBCS

Chairman, Bombe Rebuild Project John Harper CEng, MIEE, MBCS

Chairman, Software Conservation Dr Dave Holdsworth CEng Hon FBCS

Digital Archivist & Chairman, Our Computer Heritage Working Party Professor Simon Lavington FBCS FIEE CEng

Editor, Resurrection Nicholas Enticknap

Archivist Hamish Carmichael FBCS

Meetings Secretary and Web Site Editor Dr David Anderson

Chairman, North West Group Tom Hinchliffe

Peter Barnes FBCS

Chris Burton CEng FIEE FBCS

Dr Martin Campbell-Kelly

George Davis CEng Hon FBCS

Peter Holland

Eric Jukes

Ernest Morris FBCS

Dr Doron Swade CEng FBCS

Readers who have general queries to put to the Society should address them to the Secretary: contact details are given elsewhere.

Members who move house should notify Kevin Murrell of their new address to ensure that they continue to receive copies of Resurrection. Those who are also members of the BCS should note that the CCS membership list is separate from the BCS list and so needs to be maintained separately.

Aims and Objectives

The Computer Conservation Society (CCS) is a co-operative venture between the British Computer Society, the Science Museum of London and the Museum of Science and Industry in Manchester.

The CCS was constituted in September 1989 as a Specialist Group of the British Computer Society (BCS). It is thus covered by the Royal Charter and charitable status of the BCS.

The aims of the CCS are to

Membership is open to anyone interested in computer conservation and the history of computing.

The CCS is funded and supported by voluntary subscriptions from members, a grant from the BCS, fees from corporate membership, donations, and by the free use of Science Museum facilities. Some charges may be made for publications and attendance at seminars and conferences.

There are a number of active Working Parties on specific computer restorations and early computer technologies and software. Younger people are especially encouraged to take part in order to achieve skills transfer.
