
Computer

RESURRECTION

The Journal of the Computer Conservation Society

ISSN 0958-7403

Number 77

Spring 2017

Contents

| Society Activity | |

| News Round-up | |

| The Evolution of Enterprise Computing | Geoff Sharman |

| Reflections on the Hoot | Simon Lavington |

| In Search of the Lost Bits | Dik Leatherdale |

| The Science Museum’s Winton Gallery | Kevin Murrell |

| Letter to the Editor: PLASYD | John Buckle |

| 50 Years Ago .... From the Pages of Computer Weekly | Brian Aldous |

| Forthcoming Events | |

| Committee of the Society | |

| Aims and Objectives |

Society Activity

|

Software — David Holdsworth

Kidsgrove Algol

I think a very brief recap will help. We have listings of all but one of the 13 overlays (called bricks in EE documentation) of the Kidsgrove Algol Compiler, and a listing of an end user program at the point after the library routines have been incorporated. We also have a hand-written version of the missing brick. We do not have the POST library system within which the compiler operated in real life. Our aim is to make it work by re-engineering the missing bits. We have got to the point where we can compile and run some carefully chosen Algol60 programs, even Donald Knuth’s man-or-boy. There is a little demonstration at: kdf9.settle.dtdns.net/KDF9/kalgol/DavidHo/demo.zip.

INRIA

I have recently sent off an email to INRIA, drawing their attention to my embryo thoughts on archiving source text for which the original character set predates the wide acceptance of ASCII/ISO eight-bit character sets. It can be seen at: www.settle.dtdns.net/CCS/sourcetext.htm.

Atlas Emulator

Dik Leatherdale reports that after a long period of uncertainty the mechanics of the fixed point arithmetic functions which resided in the floating point accumulator have been thought through and (re)-implemented. He has access to a small selection of software items which utilise these functions and, so far, they all deliver the expected result. As a consequence, however, doubts have arisen over the floating point functions. One step forward... |

|

Analytical Engine — Doron Swade

The main activities focus on the original manuscript sources of the Babbage technical archive held by the Science Museum. Since the 19th century there have been several generations of reference systems, and with the digitisation of the archive in 2011 by the Science Museum, a further layer was added. The reference system now used by the Science Museum will be the de facto standard for future scholarship. What we are doing is reviewing image by image the entire archive holdings (some 7,000 manuscript folios) including all surviving drawings and notations for the Analytical Engine,

Not all of this is straightforward and in some instances unscrambling layered inconsistent reference systems dating back to Babbage’s time has proved to be nightmarishly difficult. Several visits to the physical archive at Wroughton have been made. More will be required. The outcome of this process will be a searchable database, annotated and cross-referenced, that identifies all known sources, and records the status of each source including issues of provenance and anomalous reference history, with a record of links and citations to other material in the archive and elsewhere. Tim Robinson in the US is going through all known material sheet by sheet to construct the searchable database, with estimated completion by the end of 2017. In London I am going through 20 volumes of Babbage’s ‘Scribbling Books’ page by page, transcribing AE-relevant material, to produce a searchable quick index, estimated for completion by July 2017. We are in close collaboration with the Science Museum archivists, and our findings are routinely supplied to them in support of their ongoing work to open-source this material. The main outcome of the Analytical Engine project will be a survey-knowledge of all known material relevant to the AE design, consistent indexing, and a powerful software retrieval and reference tool. While this activity is going on it must be said that pursuing the implications of critical new discoveries has proved, on occasions, irresistible. |

|

Argus 700/Bloodhound — Peter Harry

The Argus 700, its power supplies and I/O rack continue to run without any faults occurring since my last report. Unfortunately the same cannot be said for the monitors used in the Bloodhound simulator as we have now experienced our third failure in the four monitors in use. While two monitor faults have been repaired, the latest failure, indicated by no EHT on the CRT, could be the most serious. It will be a week before we will have time to localise the fault on the monitor but it is very possible the EHT line/flyback transformer is the culprit. If it is, we have found no spares for the Murata branded transformer anywhere. We do have a spare monitor but to lose a monitor will mean we have no replacement in the future.

In the past we have recognised the vulnerability we have due to the age of the monitors (20” Mitsubishi C3920s) but replacing them with a current or standard monitor is not straightforward due to the unusual line frequencies etc. the monitor is designed for. We have also considered developing a form of translator which will convert the Ferranti graphics command set, possibly to OpenGL. Such a translator would enable the use of alternative, bog standard, graphics cards and monitors, and is something we will revisit. The monitors in question can be viewed on our YouTube video at: www.youtube.com/watch?v=5Xl1By8vh3k.

The ability to maintain and repair the Argus 700 along with the rest of the simulator is of paramount importance to our team and to that end work is underway on a PeriBus simulator. PeriBus is the I/O bus for the Argus 700 and has two forms, parallel and serial. It is the PeriBus in its serial form that connects the Argus 700 processor to its external I/O. Some background: The Argus 700 is a process control computer and designed to handle large numbers of inputs and outputs, both digital and analogue. 
To handle the large number of I/O connections the previous Argus 500 processor had individual twisted pair wiring to each and every input and output resulting in several very large multi pin connectors. To replace such an impractical system for I/O the PeriBus was developed for the Argus 700. The serial PeriBus has six parallel lines (a contradiction), four lines for addressing and data, one for setting input or output and the sixth for timing. Our PeriBus simulator is a test set that will allow the manual setting of addressing and data to exercise and test I/O cards and also the serial to parallel PeriBus converter cards. It is planned that the manual setting of the address and data logic will be replaced with scenarios run from an Arduino. We have already seen the benefit in the PeriBus simulator in its current stage of development as it has enabled us to repair a serial to parallel converter card. |
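The six-line arrangement described above (four lines for addressing and data, one for direction, one for timing) can be pictured with a small sketch of the kind of scenario a test set might step through. This is only an illustration under stated assumptions: the source does not give the real PeriBus frame format, so the address and data widths, the nibble order, the clock behaviour and all names (`LineState`, `to_nibbles`, `build_scenario`) are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LineState:
    """Levels on the six lines during one step (hypothetical layout)."""
    nibble: int      # the four address/data lines, as a value 0..15
    is_output: bool  # direction line: True = processor writes to the I/O card
    clock: int       # timing line, alternating 0/1 from step to step


def to_nibbles(value: int, bits: int) -> List[int]:
    """Split value into 4-bit groups, most significant group first (assumed order)."""
    if bits % 4 != 0:
        raise ValueError("bits must be a multiple of 4")
    if not 0 <= value < (1 << bits):
        raise ValueError("value does not fit in the given width")
    return [(value >> shift) & 0xF for shift in range(bits - 4, -1, -4)]


def build_scenario(address: int, data: int, is_output: bool,
                   addr_bits: int = 8, data_bits: int = 16) -> List[LineState]:
    """Return the sequence of line states for one transfer.

    The 8-bit address and 16-bit data widths are placeholders, not
    figures taken from Argus 700 documentation.
    """
    nibbles = to_nibbles(address, addr_bits) + to_nibbles(data, data_bits)
    return [LineState(n, is_output, i % 2) for i, n in enumerate(nibbles)]
```

A scenario built this way is just a list of states, so it could be stepped through by hand on switches or replayed from a microcontroller without changing the description of the transfer itself.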

|

Harwell Dekatron/WITCH — Delwyn Holroyd

The machine suffered a power supply failure last month which caused a few days’ downtime. The fault turned out to be a heater-cathode short in one of the main series regulator valves in the positive half of the supply. Having adjusted all the voltages we took the opportunity to do a full MOT and adjust all the transfer and carry unit thresholds. Store 10 developed an intermittent problem supplying B pulses to the eighth digit column, which was cleared by cleaning the relevant relay contacts. We discovered that some store locations are not working reliably when certain shifts are selected, such as would happen when orders are being read. A new version of the store test program has been developed to explicitly test for this. The operator training booklet has been revised and a program of refresher training put in place for all operators. In addition a number of new operators have been trained up. |

|

ICT/ICL 1900 — Delwyn Holroyd, Brian Spoor, Bill Gallagher

We are still progressing on multiple fronts, juggling different sub-projects. Since the last update we have continued with MAXIMOP (Brian) and PLASYD (Bill), additionally restarting work on the 1830 General Purpose Visual Display Unit after finding five Engineers’ Test Programs on one of the Galdor tapes.

MAXIMOP

A lot more testing is still required using the new issue tape, as well as further documentation.

PLASYD

PLASYD has been recovered and we now know how to run it both under GEORGE3 and E6RM exec. Thanks to the efforts of Bob Hopgood and John Buckle we also have two original manuals scanned and even re-created as web-documents for this most useful language. We are still missing the matching subroutine library SUBGROUPS-PL, but have created a skeleton version using SUBGROUPS-RS.

BCPL

BCPL has been shown to work, but is as yet awaiting investigation. Delwyn has obtained a manual, but without much information on how to operate the compiler. We have a G3 macro, but its parameters are a bit opaque.

ICT 1830 General Purpose Visual Display Unit

With the addition of some #NCRx programs (as well as 3 demonstration programs from another source, investigated some time ago), we now have eight programs available for testing an emulation of this interesting Vector Graphic device under GEORGE 3. Currently the output side is working fairly well, but inputs to it are causing problems, not an unusual occurrence at this stage. Despite its capabilities, there is precious little known about it. Any information welcome. What we have deduced/found and think we know is:-

|

|

Elliott 903 — Terry Froggatt

An Elliott 903 has a built-in 18-bit 8192-word store, and the 903 at TNMoC has an additional 8192-word store in a separate cabinet with its own power supply. A rectifier failed in this power supply in late 2014, which was duly replaced, but the extra store has not worked properly since then. It was suggested that the rectifier failure could have damaged the store circuits before the fuse blew, although this is unlikely especially as the power supply does also have electronic overload protection.

The lack of the extra store is not a major issue. In the late 1960s we developed the Jaguar flight program with just an 8192-word 920B and 920M, and none of the routine demonstrations given at TNMoC nowadays need it. Having the extra store does enable Algol to be run in load-and-go mode, rather than having to load Translator then overwrite it with the Interpreter, but Algol still has to be overwritten to run any Editor (such as Bowdler).

Nevertheless, at some point in the last two years I’ve tried to locate the fault, by temporarily replacing all of the extra store circuit boards with boards from my own 903, but the fault was still present. I wrote a new test program, which suggested that all of the store cores were OK, but when different words were written to each store location, roughly 1200 tests out of 8192 were failing. The nature of the failures was consistent but the exact number of failures varied slightly from run to run.

When I made a recent trip to TNMoC I decided it was time to take the whole extra store unit home to test it on my 903. I took not just the circuit boards but the rack which holds them, and the three thick cables that run between the CPU cabinet and extra store cabinet. (Thanks to Delwyn and others for helping get the cables out from under the cabinets). Of course when I connected everything to my 903 it all worked. 
So I made another trip to TNMoC, not just to collect the extra store’s power supply, but also to collect most of the circuit boards from the CPU, temporarily leaving some of my own circuit boards there. Again, when I connected everything at home, it all worked, and continued to do so even if I adjusted all of the voltages on both power supplies up and down by a volt. But I did notice that one A-FA board from TNMoC did not belong to their 903. It was an older card from their scrap 903 chassis (which lacks the backplane wiring to support any extra store).

On my fourth trip to TNMoC (a mere 51 miles as the crow flies from Hampshire, but always taking over 95 minutes due to the London-centric nature of our motorways), I returned everything to TNMoC. I reconnected the extra store rack, initially leaving my boards in the CPU, and everything worked, but the fault came back as soon as I refitted the older A-FA board, and the fault symptoms varied if I moved that board to a different bit position.

So there never was a problem with the extra store or with its repaired power supply! The fault was in the older A-FA board (which lacks some of the pull-up resistors present on later A-FA boards), which had been fitted to the 903 as far back as April 2014, but nobody noticed that the extra store was not working until its power supply failed some months later. The TNMoC 903 and its extra store are now working, albeit with one of my A-FA boards.

The odd thing is that the older A-FA board was perfectly capable of working on my 903 which has three extra stores, and the newer A-FA board (which was swapped out of TNMoC’s 903 in April 2014 as faulty) is working on my 903 right now. But the flying season is approaching, so I have no immediate plans to find out exactly why these boards perform differently in different 903s. To be continued in Resurrection 78 [ed]. |

|

ICL 2966 — Delwyn Holroyd

Terminals continue to give trouble. The intermittent problem on the 7501 scan board has remained elusive and the terminal is currently running with the board from the left hand SCP. One 7181 has developed an intermittent problem where the jiggle scan collapses. These units have a third scan coil that is used to move the beam rapidly up and down by one character height as each line is scanned horizontally. |

|

Our Computer Heritage — Simon Lavington & Rod Brown

A collection of technical manuals and rare photos of the Ferranti Mark I* installed at Armstrong Siddeley’s Ansty site has been located. Subject to the owner’s approval, it is intended to catalogue this collection and arrange for it to be deposited in a permanent archive. Tony Conway has begun work to expand and complete the Modular One technical write-up in the Minicomputer section. Iann Barron has offered his help. |

|

IBM Group — Peter Short |


|

John Heath, inventor of the swinging arm disc head technology, visited the museum in December and donated his first two prototype swinging arms and some slides. Swinging arm technology was not generally adopted in IBM; other laboratories clung on to the old push-pull. However it became the most licensed IBM patent of all time, and John estimates that to date something like 19 billion hard discs have been produced using his invention.

John also helped the museum to identify an artefact that has puzzled us for a while: a small plastic box full of small magnetic strips, labelled ‘Zenith’ and ‘First Hursley Storage Project’. There was also a matching cartridge containing more strips. It turns out that John was hired specifically to work on this project! In the mid-1950s the CIA asked IBM to produce a system for the mass storage of printed documents. The IBM 1350 was developed using small pieces of Kalvar photographic film, called chips, stored in small boxes called cells, fitted to boxes in a carousel. Due to some unpleasant characteristics of Kalvar, IBM redeveloped the system as the IBM 1360, in the hope of commercialising it using standard photo film technology. A full description of these two systems is in Wikipedia at https://en.wikipedia.org/wiki/IBM_1360. Meanwhile IBM decided to seek a cheaper magnetic storage solution to produce higher densities and faster operation using the same format. This was Zenith, the low cost version. Development never came to fruition, as other forms of magnetic media were becoming available.

When the Greenock plant was opened in 1954, a time capsule, a lead box containing newspapers and other artefacts, was buried in a wall in reception. This has now been retrieved as the site is finally being abandoned, and has been received in Hursley for safekeeping. |

|

Manchester Baby/SSEM — Chris Burton

Nothing much to report regarding the “Baby” computer itself. It typically works when switched on, four times per week, though occasional spells of reluctance might take a few sessions of expert attention to locate problems like a loose valve, out of tolerance resistor or displaced control knob. However, it is very pleasing that the volunteer team has grown to about 20 people. They are formally enrolled by the Museum of Science and Industry and undertake a fairly structured training programme starting as an explainer who faces and interacts with the public, with opportunities to move on to operator, maintainer and repairer. Demonstration sessions are held on Sunday, Tuesday, Wednesday and Thursday every week and the groups are to be congratulated on their enthusiasm when presenting the story of the “Baby” to individuals, families and parties. Typically 40 to 80 persons are interacted with on each working day. |

|

Turing Bombe — John Harper

The planned new exhibition has got off to a slow start, with most action being to establish the conditions and workplace for making a dummy Bombe more presentable and motorised. We have started a new research project to try to find out in much more detail about the various versions of the BTM Bombes and their attachments. This has to start with inputting parts schedules; from these we identify the relevant drawings and then study them to get some idea of the machine details. We hope to draw up these drawings using AutoCad and then produce assembled units. If resources allow we might then be able to create 3D or ‘walk through’ images, but perhaps this aim is rather ambitious! As a spinoff from this we are producing a ‘used on’ database so that we will be able to identify where every part is used. This database is not small: it is over 5000 entries long and will have over a dozen columns of information. |
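The idea behind a ‘used on’ database is an inverted index: parts schedules list the parts in each assembly, and the database answers the reverse question of which assemblies a given part appears in. The following is a minimal sketch of that inversion, not the team's actual tool; the function names and the assembly and part numbers in the usage example are invented for illustration, and the real database has many more columns than the two used here.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple


def build_used_on(schedule_rows: Iterable[Tuple[str, str]]) -> Dict[str, Set[str]]:
    """Invert (assembly, part) rows from parts schedules into part -> assemblies."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for assembly, part in schedule_rows:
        index[part].add(assembly)
    return dict(index)


def used_on(index: Dict[str, Set[str]], part: str) -> List[str]:
    """Return the assemblies that use a part, sorted; empty list if unknown."""
    return sorted(index.get(part, set()))
```

For example, with the made-up rows `[("ASSY-1", "P-100"), ("ASSY-2", "P-100"), ("ASSY-1", "P-200")]`, looking up `"P-100"` returns both assemblies, while a part that appears in no schedule returns an empty list.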

CCS Website Information

The Society has its own website, which is located at www.computerconservationsociety.org. It contains news items, details of forthcoming events, and also electronic copies of all past issues of Resurrection, in both HTML and PDF formats, which can be downloaded for printing. Emulators for historic machines, together with associated software and related documents, can be found at www.computerconservationsociety.org/software/software-index.htm, all of which may be downloaded. |

News Round-Up

Huge congratulations to Margaret Sale who has recently been awarded the Prime Minister’s “Point of Light” award for her tireless work for Bletchley Park and latterly, TNMoC. Margaret, with her late and much missed husband Tony, was a driving force in saving Bletchley Park for the nation. She continues to serve as a trustee of TNMoC and is an inspiration to everybody there.

In 2002, not long after ICL was fully absorbed into Fujitsu, the ICL name was cast into outer darkness never to be seen again. Or so we all thought. But now, in a small way, the ICL brand is back. A sentimental soul by the name of Kevin Jones has set up a computer repair company, International Computer Logistics, and has secured the rights to use the name with a near-lookalike logo and web address (www.icluk.co.uk). And where, I hear you ask, might this phoenix be located?

In Kidsgrove, of course! Where else?

In February, the Blackpool building where lived the original ERNIE (ERNIE of the Premium Bonds, not to be confused with a certain Teddington milkman) was demolished (see www.bbc.co.uk/news/uk-england-lancashire-39090489). For the last several years ERNIE himself was on display in the Computer Gallery of the London Science Museum. He has now been retired again, no doubt to the Museum’s “care home” at Wroughton in Wiltshire. We wish him well.

In Resurrection 75 we noted, with some puzzlement, that the Victoria and Albert Museum had borrowed the Ferranti Pegasus from Manchester’s MSI rather than the one from the Science Museum just across Exhibition Road. That temporary display is now over but was replaced by another in which an Apple 1 featured. Once again, not the Science Museum’s Apple 1 but another, sourced elsewhere. We remain mystified.

A part of the Julius Totalisator in which the Society once had an interest is now on display in the London Science Museum. So it is appropriate that Brian Conlon should write from Australia directing our attention to his splendid website tinyurl.com/zxkqcfk devoted to the Totalisator. Readers may be particularly interested in a late model based around DEC PDP-8s.

Javier Albinarrate reports from Buenos Aires that they have now sufficiently completed their Ferranti Mercury working replica at the Museo de Informática de la República Argentina to be able to hold an open day attended by some 1,500 visitors. I/O remains to be completed but the end result is seriously impressive – re-creation on a heroic scale. See tinyurl.com/lxs79b3 and pictures at tinyurl.com/kfk2v8f. We are lost in admiration!

Finally, news reaches us of the passing of Hugh Devonald late of Elliotts, Ferranti and ICL. One of nature’s true gentlemen and a consummate engineer. Rest in peace Hugh. We’ll remember you. |

The Evolution of Enterprise Computing

Geoff Sharman

What’s the biggest use of computers today? Page retrieval via the World Wide Web? No. Google searches (Google claims 3.5 billion searches a day worldwide)? Social media? Big Data analytics? No. It’s something much more mundane, but also more fundamental: business transactions running on enterprise systems. If you doubt this, ask yourself whether you did any of the following today: buy something in a supermarket; use a cash machine, debit card, credit card, or contactless payment card; use an app for internet or mobile shopping, banking, etc.; make a telephone call; trade shares; buy a lottery ticket; travel by public transport; watch catch-up or pay-for TV; use electricity, gas or water? Of course you did!

All of these activities generate online transactions which are processed through traditional and not-so-traditional enterprise systems for Online Transaction Processing (OLTP). The worldwide daily volume of these transactions had already topped 20 billion by the mid-1990s; today it’s well in excess of 100 billion. That’s two to three orders of magnitude greater than the number of Google searches per day, and equivalent to 14 transactions per day for every man, woman and child on the planet. All this is simply a reflection of the fact that trade is the dominant human activity: one US bank reports that the financial value of transactions handled by its mainframe-based OLTP system is over $10 trillion per day. That picture is repeated across government, the financial services industry, other major industries, and web based giants such as Amazon, eBay and PayPal.

From the consumer’s point of view, commerce has become much easier but the records of our daily transactions are held in systems right across the globe, constituting the digital footprints through which our lives can potentially be tracked day by day and hour by hour. 
Yet it still comes as a surprise to many IT professionals that OLTP is the major use of computer systems. To understand why, it helps to take a historical perspective. The first use of writing, on 6,000-year-old Sumerian clay tablets, turned out to be for accounting records rather than literary works. The first business use of computers in 1951, by the J. Lyons company in the UK, was also for accounting, and things didn’t change until the US government got involved. In the early 1960s, the US Air Force unveiled its SAGE (Semi-Automatic Ground Environment) air defence system, a very large system combining computers and networks for the first time. American Airlines adapted some of this technology for its SABRE airline reservation system, launched in 1965.

That year also saw other key developments in computing: IBM’s first deliveries of System/360 – the first series of upward compatible computers – and the formulation of Moore’s Law. Which of these had the greatest influence on subsequent events?

IBM quickly moved to become the largest computer company of the era. The core of its success was the widespread adoption of IBM software, such as BATS (Basic Additional Teleprocessing Support) implemented for UK banks, together with database management systems and communications software based on SNA (Systems Network Architecture) as the basis for enterprise systems. Within a decade, these systems were installed in large enterprises across the globe. Many of those systems have been continuously enhanced and remain in use to the present day, forming a large part of the digital infrastructure on which we all now depend.

Gordon Moore, a founder of Intel, announced his famous “law”: a prediction that the number of transistors per unit area of silicon would double approximately every two years for the foreseeable future. 
Over the next 40 years, this exponential increase in processor power at constant cost changed everything: cost/instruction, market size, device form factors and network bandwidth, opening up the era of pervasive computing. Few computer companies of that era survive to the present day.

How is it, then, that OLTP survives and thrives? To answer this question, we first need to understand what it is. The term “OnLine TeleProcessing” was initially used to denote the use of terminals connected to a central computer via telephone lines, and only later adapted to encompass the meaning of handling business transactions with reliability and integrity. These terminals were used by employees of airlines, travel companies, utility companies, and banks to capture customer transactions at source and process them immediately, rather than filing for later action. Consumers usually had no access to these terminals, although banks were the first to offer consumer terminals, e.g. the Lloyds Bank Cashpoint (IBM 2780) which was introduced in 1972.

Typical networks of the time were small (~50 terminals), but a key problem was handling concurrent activities efficiently; network lines had low bandwidth (~1024 bits/sec) and could not be shared by different applications; processor hardware was slow (~1 MIPS); while accessing data (typically held on magnetic tape) would have been even slower (seconds/record). Hardware advances including higher speed leased lines, direct access disc storage, and the continued improvement in processor speed were important enablers for these systems, but even they could not match the market demand nor keep pace with its rate of growth. That required a radical re-think of the way software was designed.

The key problem was that the operating systems, data management systems and programming languages of the day had all been designed for batch processing, where a single application program might run for hours. 
Scheduling a batch job (process) could take millions of instructions, i.e. many seconds. Most operating systems could only handle a few concurrent jobs; data management mainly provided support for sequential files; while programming languages did not support network operations. None of this technology fitted an environment where each terminal user needed a response within a couple of seconds. It quickly became clear that new software was needed for the management of indexed files and data bases, which allowed direct access to a specific record or sets of records within a data file in milliseconds, for data communication, which enabled receiving and sending messages and control of telephone lines, and especially for application execution which enabled rapid scheduling of short application segments. These segments are initiated by a message from a terminal and create a response message in real time; the operating software became known as an OLTP Monitor. Some well-known monitors first released in the late 1960s and early 1970s have been continuously enhanced ever since and one of these, CICS (Customer Information Control System), has been described as “the most successful software product of all time”. It’s been developed at IBM’s Hursley Lab in the UK since 1974. At the heart of OLTP is a programming model where resources such as applications, memory, processes, threads, files, databases, communications channels, etc. are owned by the monitor rather than by individual applications. On receipt of a request message, the monitor initiates an application segment and provides concurrent access to these resources. It frees resources when a response message is sent, so the application has no memory of actions it may have performed or, in other words, is “stateless”. More complex applications (known as “pseudo-conversations”) can be created by retaining some state data in a scratchpad area. 
The next segment retrieves this data from the scratchpad area, processes the incoming message and issues another response message. This model is very different from conversational application models, which retain large amounts of state between user interactions, and it’s this feature which enables it to scale to thousands or millions of transactions per second.

Subsequent decades saw intense competition for this OLTP business from vendors of mainframe compatible systems, specialist “non-stop” systems, mid-range and Unix systems, packaged application systems, and PC-based distributed systems using local area networks. The most far-reaching impact, however, was occasioned by the introduction of the World Wide Web as a service running on the internet in the early 1990s. The WWW used a stateless activity model in which messages triggered the retrieval of a web page and generated a response message. It became immediately apparent to many observers that this was, in effect, a read-only version of OLTP and it wasn’t long before creative programmers found a way to run user applications from a web server. The original WWW pioneers, by contrast, knew nothing about enterprise applications.

Opinions varied on what impact the WWW would have on existing OLTP systems. Some commentators thought it would provide the low cost global network which these systems needed, while others thought it would spark a wave of new products which might compete with those systems. In the event, both have happened. Rapid innovation has seen the creation of new web-based programming models and languages such as Java, PHP (used as the basis for Facebook), and Node.js (a variant of JavaScript), each of which implements aspects of the OLTP programming model. At the same time, established OLTP monitors have incorporated support for Internet protocols and new programming languages, and now sit behind many popular web sites for internet banking, shopping, travel reservations, and so on. 
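The programming model described above (resources owned by the monitor, short stateless segments triggered by a message, and a scratchpad carrying state between the steps of a pseudo-conversation) can be illustrated with a toy sketch. This is not CICS or any real monitor's API: the `Monitor` class, its `register` and `handle` methods, and the `ask_amount` and `debit` segments are all hypothetical names chosen for the example, and real monitors also manage threads, files, recovery and much else that is omitted here.

```python
from typing import Callable, Dict, Tuple

# A segment receives the incoming message and the terminal's scratchpad, and
# returns (response message, name of the follow-on segment or "" to finish).
Scratchpad = Dict[str, str]
Segment = Callable[[str, Scratchpad], Tuple[str, str]]


class Monitor:
    """Toy monitor: it owns the segments and the per-terminal scratchpads."""

    def __init__(self) -> None:
        self._segments: Dict[str, Segment] = {}
        self._pending: Dict[str, Tuple[str, Scratchpad]] = {}

    def register(self, name: str, segment: Segment) -> None:
        self._segments[name] = segment

    def handle(self, terminal: str, message: str, entry: str = "start") -> str:
        # Resume a pending pseudo-conversation, or start afresh at the entry segment.
        name, pad = self._pending.pop(terminal, (entry, {}))
        response, follow_on = self._segments[name](message, pad)
        if follow_on:
            # Only the small scratchpad survives between messages.
            self._pending[terminal] = (follow_on, pad)
        return response


def ask_amount(message: str, pad: Scratchpad) -> Tuple[str, str]:
    pad["account"] = message  # remember the account number for the next step
    return "Amount?", "debit"


def debit(message: str, pad: Scratchpad) -> Tuple[str, str]:
    return f"Debit {message} from account {pad['account']}", ""


monitor = Monitor()
monitor.register("start", ask_amount)
monitor.register("debit", debit)
```

Each call to `handle` runs one segment to completion and returns, so messages from different terminals can be interleaved freely: nothing is held on behalf of a terminal between messages except its scratchpad entry.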
One mainframe-based travel reservation service processes a billion transactions/day on its own. There’s no doubt that OLTP, in its many forms, powers the world economy and will continue to do so. To take only one example, sensors connected to the WWW for the Internet of Things will require OLTP applications to support them and are likely to drive the global transaction rate to trillions per day.

www.bcs.org/content/conWebDoc/54889 gives more background on this article. The article is based on the January 2017 CCS lecture in London and has previously been published in IT Now. It is reproduced here by kind permission of the editor.

Geoff Sharman spent 35 years in the software industry. He was responsible for the strategic direction of IBM’s billion dollar CICS software business. He can be contacted at geoffrey.sharman@btinternet.com. |

|

Appeal for Help |

|

Raf Dua (raf@microplanning.com.au) is writing a history of the ICT/ICL PERT programs 1962-9. He asks whether anybody from the ICT 1300 PERT team is around and could make contact with him. Any documentation such as the manual would also be much appreciated. |

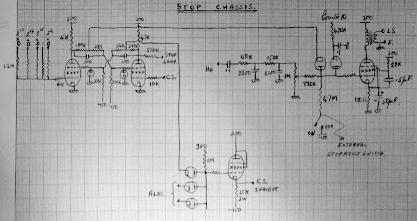

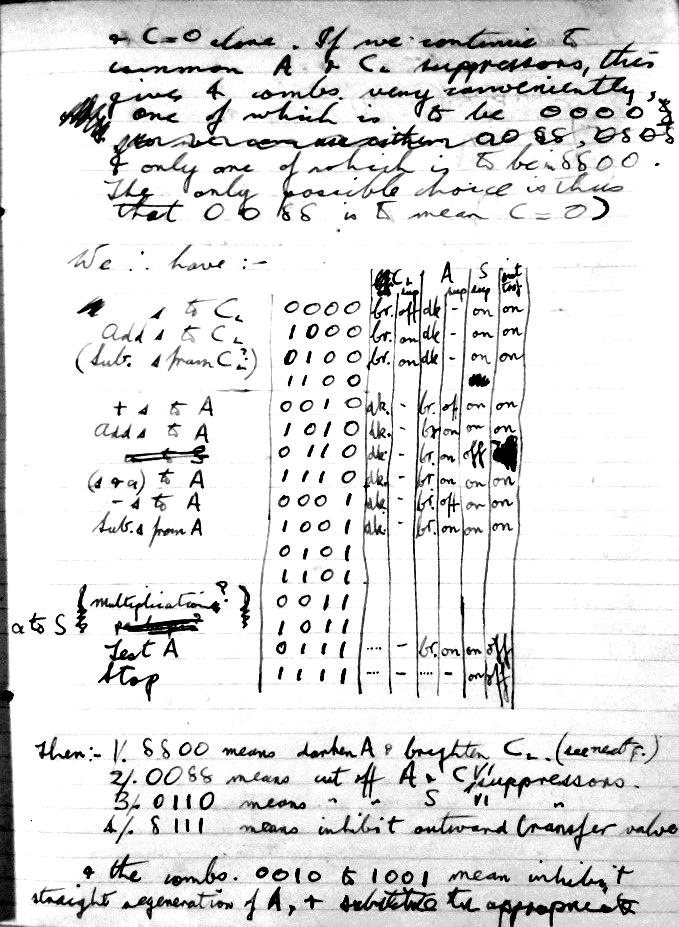

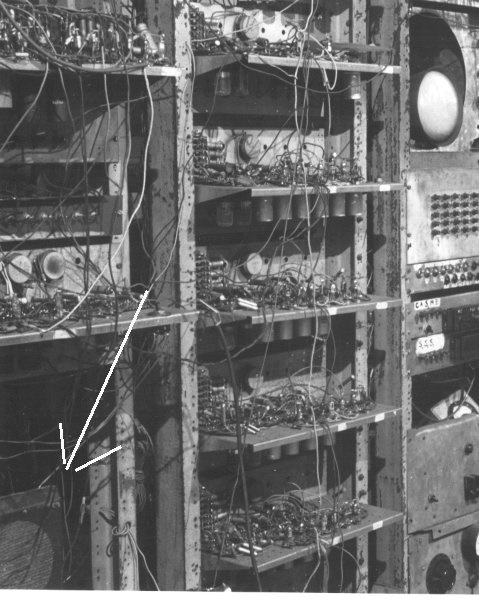

Reflections on the Hoot

Simon Lavington

Following David Link’s Resurrection 76 article on the generation of music in the Ferranti Mark I and Jack Copeland’s “controversial” appearance on the BBC Today programme, Simon Lavington has been inspired to dig deeper to investigate the facility which enabled Strachey’s feat to take place.

1. The history of the HOOT instruction

The idea of some sort of audible signal being generated by a digital computer has a long history. In section 6.8.5 of the first report on John von Neumann’s Princeton computer, dated June 1946, we read:

“There is one further order that the control needs to execute. There should be some means by which the computer can signal to the operator when a computation has been concluded, or when the computation has reached a previously determined point. Hence an order is needed which will tell the computer to stop and to flash a light or ring a bell.”

An alarm instruction was also proposed a little later by Alan Turing for his ACE computer at the National Physical Laboratory. Much, much earlier, Charles Babbage planned to make his Analytical Engine ring a bell if an arithmetical error was encountered.

At Manchester University, the need for an audible signal arose in the summer of 1948. The early factoring programs run on the Manchester Baby computer were deliberately chosen to have long run times (up to an hour) in order to test the stability of the novel CRT storage system. One can imagine Tom Kilburn and others losing patience with having to watch the flickering display on the machine’s monitoring screen until the program successfully terminated in a STOP instruction – at which point the screen stopped flickering and a small neon lamp lit up. The solution was to make the Baby’s STOP instruction cause a loudspeaker to emit a fixed-frequency buzz. It is not clear exactly when a loudspeaker was attached to the Baby machine but it is thought likely to have been during June or July 1948.
The surviving Lab notebook kept by Geoff Tootill is silent on the matter but notes kept by Dai Edwards from mid-September onwards offer a clue. Dai’s first task as a research student was to learn about the Baby computer and to produce neat circuit diagrams and descriptions of the equipment. Figure 1 is undated but, by observing the dates on adjacent diagrams, it seems reasonable to assume that Dai drew this diagram in November 1948. On the left of Figure 1 we may deduce that the circuit refers to an extended version of Baby that assigned four, not three, function-bits in the instruction. These are shown as bits 2^13 to 2^16. Alternative tones are shown for STOP: HA which is the action waveform, and Counter10 which is the slowest scan counter stage. Chris Burton, who led the team which built the Baby working replica, makes the following comment on Figure 1. “I believe the action is supposed to be that the section fed by HA is a filter to provide a tone of about 700Hz at the volume control. The two diodes are an AND gate to permit the tone out when the STOP flip flop sets, and to modulate the tone with C10. This will make the sound a series of 1.4 second on, 1.4 second off of the tone. In June 1948, there would not have been the long counter chain leading to C10. Consequently, I believe the second diode with C10 on it was added later as a refinement as they expanded the address range”. The first-occurring entry in Tootill’s notebook for a four-bit op code is dated 20th July 1948 and it can be seen from Figure 2 that the code for STOP at that point in the Baby’s development was 1111. This chimes with the top left of Figure 1 and the four input signals shown there. The loudspeaker is shown as the transformer-coupled LS in the top right-hand corner of Figure 1. It seems reasonable to assume that a loudspeaker was being driven by Baby’s STOP instruction from at least July 1948.
The loudspeaker is certainly evident in a photograph taken by Alec Robinson on 15th December 1948 – see Figure 3. The enhancements to Baby led in due course to a five-bit op code. The first definite suggestion for a five-bit op code in Tootill’s notebook occurs on 23rd October 1948, though it is clear that, from the outset, Williams and Kilburn intended the Baby’s instruction repertoire and address range to be expanded. Judging from the layout of the Baby’s 40-button ‘typewriter’ input panel, there was always an intention to expand to 40-bit words. The 5-bit Teleprinter code fits conveniently into 40-bit words, and indeed into the 20-bit half-words eventually chosen for instructions. By the end of 1948 the five-bit op code was being represented by Teleprinter characters. It was not until late 1949 that input/output using Teleprinter equipment was actually attached to the Mark I computer. Before that time, input was by 40-button ‘typewriter’ and output was by inspecting the monitor screen.

At this stage in its development, the Manchester Mark I, sometimes called MADM, did not have an explicit HOOT instruction. The loudspeaker was only capable of emitting a tone when the STOP instruction was encountered and a programmer could not alter this tone. Various typewritten listings of Mark I’s instruction repertoire survive; these help us to chart the development stages. None of the early surviving listings, respectively dated 8th November 1948, 5th December 1948, June 1949 and September 1949, mention a HOOT instruction. The first mention is in a listing dated 28th February 1950, when the Teleprinter code for HOOT is given as K. This code is also used for MADM’s HOOT instruction as described by Turing in his retrospective account of what he calls the Pilot Machine (or Manchester Computer Mark I). This is on page 87 of Turing’s Programmers’ Handbook, which was issued in about March 1951 for the Ferranti Mark I computer. An early user of the University Mark I was Audrey Bates, a Manchester mathematics graduate and Alan Turing’s first research student. Curiously, the HOOT instruction is given the Teleprinter code E in Audrey Bates’ M.Sc. thesis, submitted October 1950, and code K is assigned to one of several versions of the STOP instruction. This suggests that, in Audrey Bates’ time, the loudspeaker may have been activated under two circumstances: (a) when pulsed transiently by each HOOT instruction; (b) when set to emit a constant tone by a STOP instruction. When describing the Pilot Machine Turing assigns code E to “ TIMEWASTER”, which is probably another name for a dummy instruction. We may deduce that the Mark I was the subject of minor engineering changes throughout its life. The Manchester Mark I, MADM, was closed down in the late summer/autumn of 1950. There is no record of the HOOT instruction being used to generate music up to that point. 
The first recorded mention of explicitly-generated music at Manchester occurred in 1951, by which time the Ferranti Mark I was in operation in the Computing Machine Laboratory at Manchester University. The programmer was Christopher Strachey and it is interesting to discover exactly when Strachey produced melodies via the computer’s HOOT instruction.

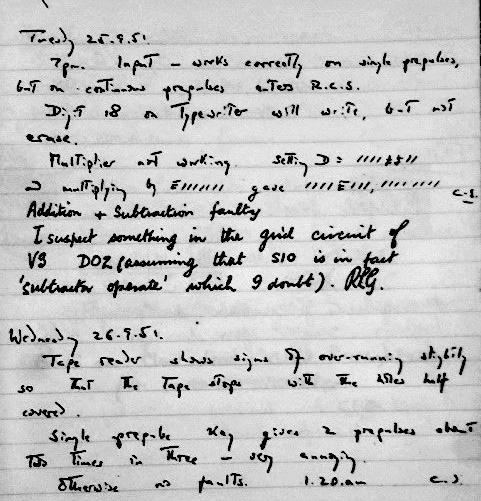

2. The likely date of Strachey’s first visit to the Ferranti Mark I

Looking through the surviving Ferranti Mark I users’ Log Books, it seems that Christopher Strachey first used the computer at Manchester University on 25th September 1951. A photo of the relevant log book page is shown in Figure 4. By way of explanation, Christopher Strachey signed on at 7:00pm at the start of an all-night session during which he would have been the only user in the machine room with no cover from a maintenance engineer. Figure 4 shows that Strachey’s session was certainly not free of hardware faults, though some of them were possibly intermittent. Judging from the log book entries from other users involved in similar all-night sessions in 1951 and 1952, it seems possible that Strachey pressed on with program development until a solid hardware fault (in the multiplier?) became insurmountable. It is not clear when Strachey quit. It is possible that the next user, Dick Grimsdale (RLG), logged on early in the morning. Dick was a research student who was developing engineering test programs and it seems likely that Dick would have tried to diagnose the multiplier fault prior to the involvement of the maintenance engineers. It is not clear who used the computer on Wednesday after the fault had been fixed. Tony Brooker has said that internal Manchester University users did not always sign the log book but external visitors such as Strachey were always asked to sign on and sign off. It therefore seems that Strachey next used the computer on Wednesday evening, 26th September, working through until 01:20am the next day. It is possible that the first full ‘performance’ of the Manchester computer’s rendering of God Save the King took place early in the morning of 27th September 1951. Certainly, it had been heard and admired by many in the Computing Machine Laboratory by the start of October.
It seems likely that Strachey’s music routines were copied and modified by the Ferranti Mark I’s maintenance engineers and other programmers, to use as party tricks when demonstrating the computer to visitors. It was during one such visit that Strachey’s music was recorded by BBC sound engineers.

3. A possible date of the BBC’s Children’s Hour visit

No mention can be found in the Ferranti Mark I’s main log book of the BBC’s visit. This is perhaps because it was a special session supervised by the maintenance engineers, as described below. Roy Duffy was the second computer maintenance engineer to be recruited at Manchester, taking up his duties in November 1951. From conversations with Roy in the 1970s, it seems that the engineers had their own separate Mark I log books for a while, one of which was preserved by Roy. He drew my attention to an entry dated 7th December 1951 saying: Demo to BBC Children’s Hour. No other details were given. It is indeed likely that the BBC recording of computer music from the Ferranti Mark I was made on 7th December 1951 because, in his book Faster Than Thought published in 1953, Vivian Bowden says: “In December 1951 the BBC broadcast the machine’s performance of Jingle Bells on a One Horse Open Sleigh and Good King Wenceslas.... On the whole it seems probable that this technique will have no other applications than the amusement of the programmers and their friends at Christmas time”.

Probably the only surviving audio recording of music generated by the Ferranti Mark I is on a 30cm, 78rpm acetate disc now in the custody of Chris Burton. It contains three melodies: The National Anthem, Baa Baa Black Sheep and In the Mood. In his article in Resurrection 12 (1995) Chris Burton ascribes a date of 7th September 1951 to this disc. Might this be a mis-transcription of 7th December? The original owner of the disc, who was present when it was cut by the BBC, was the Ferranti engineer Frank Cooper.
In his 1994 audio commentary to the disc, Frank explains that his disc was a one-off special, cut just after the main BBC recording session and at a time when the computer was “getting a bit sick and didn’t want to play for very long”. Although Frank could not remember the date of the BBC’s visit, some of his comments are very revealing about the computing background in the autumn of 1951. He says: “It’s many years since I’ve played this disc and I really can’t remember much about it. But following Chris Strachey’s efforts at producing music (in inverted commas) from the machine, everybody got interested. Engineers started writing music programs, programmers were writing music programs and eventually word got around that this marvellous computer, or the electronic brain as it tended to be called by the press in those days – everybody wanted to hear this. And one of the groups that came along at the time was a recording crew from BBC Children’s Hour. The leader of the team was one of the Aunties, I think Auntie Muriel, but there again after 40 odd years I may very well be wrong. Well, in those days the machine wasn’t all that reliable but we managed to get it working for the necessary four or five minutes for the BBC to make a recording of various tunes which had been written for the computer and they were all very happy”. Frank requested a copy of the BBC’s recording but this was refused. Instead, Auntie Muriel replied: “I’ll see if Fred in the recording van has a scrap disc. We might be able to record something for you if you can make the machine go again”. They just managed to make a short recording. In conclusion, Frank Cooper’s surviving disc may or may not contain the same melodies chosen by BBC Children’s Hour and may or may not contain melodies generated by Christopher Strachey’s original program. What it undoubtedly does contain is important examples of very early computer-generated melodies dating from late 1951. 
No evidence has been found of any melodies being generated by any Manchester computer before September 1951 and the BBC has been unable to trace its own recording of Ferranti Mark I music. In this sense, Frank Cooper’s disc is unique. [It is not usual for articles in Resurrection to contain detailed references to source documents. However, such is the strength of feeling generated by the current music debate that it is thought useful to provide pointers to the source material used in the above article. Accordingly, a full reference list can be found at www.computerconservationsociety.org/resurrection/res77_aux.htm][ed.] The original documents that have been consulted in the writing of the above article are available for consultation in the National Archive for the History of Computing, the British Library and the Library of the Worshipful Company of Information Technologists. Professor Lavington is a leading member of the Computer Conservation Society with several books to his credit on the history of British Computing – including a comprehensive history of Elliott computers. He can be contacted at lavis@essex.ac.uk. Image acknowledgements: Figure 1: Worshipful Company of Information Technologists. Figures 2 & 3: National Archive for the History of Computing. Figure 4: School of Computer Science, University of Manchester |

A New Idea for Resurrection

Reader Jim Munroe has made the interesting suggestion of an occasional column in Resurrection entitled “Xxxx Xxxx – a Life in Computing” to be contributed by early computer engineers, programmers and such. Something short of a life history but relating writers’ own experience of their pioneering contact with the world of computers. Shall we give it a go? Perhaps 600-900 words apiece? The editor awaits... Go to www.computerconservationsociety.org/document_exchange.htm for technical advice on submission of articles for Resurrection.

In Search of the Lost Bits

Dik Leatherdale

Having struggled over many years attempting to create an emulator for Atlas 1, I have found that surviving documentation, though often highly detailed, does leave occasional gaps where something which was “obvious” to the writer is left unsaid. Clearly this creates a certain degree of difficulty and whereas logical reasoning can often fill the gap sometimes it is more difficult. An example of such a gap concerns the implementation of the repertoire of Atlas Floating point instructions.

The Ferranti Atlas was, for its time, an incredibly complex computer. A repertoire of no less than 100 hardware instructions and a further 262 additional pseudo-hardware instructions (actually built-in subroutines) could be put to use by the assembler programmer and by compiler writers though, as the late Geoff Rohl once remarked, rather few of this gargantuan set of functions were ever generated by the Atlas compilers. Of the 100 genuine hardware instructions some 45 were concerned with manipulation of the floating-point accumulator and it is this subset of instructions which provides some difficulty for an emulator.

For floating-point purposes the Atlas big-endian, 48-bit word was divided up into an eight-bit exponent (bits 0-7) and a 40-bit mantissa (bits 8-47 with the binary point between bits 8 and 9). The exponent was an octal exponent so the number represented was mantissa × 8^exponent. For the purpose of this note we will ignore this subtlety and assume that the mantissa is a mere 7 bits (to prevent the reader from expiring from sheer tedium) and that the exponent is binary – it makes no difference to the issues with which we are dealing here. The number 3 (for example) might be represented by either 0.110000 × 2^2 or equally by 0.000011 × 2^6. The former is, in Atlas parlance, regarded as a “standardised” number, the latter as “unstandardised”. To add the standardised numbers 3 and 34, we first bring them together and compare the exponents. If unequal, the number with the lower exponent is “destandardised” until such time as the exponents are equal. Then and only then can the mantissas be added. Finally the result is standardised (which isn’t needed here). Thus –
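Under the simplifying assumptions of this note (a sign bit followed by six fraction bits, and a binary exponent), the sum of the standardised numbers 3 and 34 can be set out as below. The layout is a reconstruction for illustration, with mantissas written in binary.

```latex
\begin{align*}
3  &= 0.110000_2 \times 2^{2} \\
34 &= 0.100010_2 \times 2^{6} \\
\text{destandardise 3 (shift right 4 places):}\quad
3  &= 0.000011_2 \times 2^{6} \\
\text{add mantissas:}\quad
0.100010_2 + 0.000011_2 &= 0.100101_2 \\
\text{result:}\quad
0.100101_2 \times 2^{6} &= 37
\end{align*}
```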

And the standardised number decimal 37 emerges as you would expect. All this will be well-known to readers and provides no particular difficulty. But now let’s consider what happens when the same two numbers are added but with the 34 in unstandardised form –
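With six fraction bits, the only unstandardised form of 34 that loses nothing is 0.010001 × 2^7. Assuming that bits shifted off the right-hand end of the mantissa are simply discarded (an assumption of this sketch, not something the Atlas documentation states), the sum works out as follows.

```latex
\begin{align*}
34 &= 0.010001_2 \times 2^{7} \quad \text{(unstandardised)} \\
3  &= 0.110000_2 \times 2^{2} \\
\text{destandardise 3 (shift right 5 places, one bit lost):}\quad
3  &\rightarrow 0.000001_2 \times 2^{7} = 2 \\
\text{add mantissas:}\quad
0.010001_2 + 0.000001_2 &= 0.010010_2 \\
\text{standardise:}\quad
0.010010_2 \times 2^{7} &= 0.100100_2 \times 2^{6} = 36
\end{align*}
```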

We see here that although the same process has been followed we get a different answer : 36. We’re dealing with small integers here so we must regard this answer as “wrong”. But reverting to the real world with a 40-bit mantissa, we might more charitably call this a “loss of accuracy”. The difficulty can be overcome by adding an initial step to make sure that both the numbers are standardised at the start of the process. When I implemented the floating-point functions in the Atlas emulator, more years ago than I care to recall, I assumed that the operands had to be standardised first. I considered that an avoidable loss of accuracy would not have been thought acceptable by the designers. More recently I have come to doubt the wisdom of this choice. Atlas was intended to be (and was) a world-beating computer the speed of which had not been previously achieved. It would have taken some little time to confirm that both numbers were standardised at the point of entry and even more to deal with the matter if they weren’t. Given that in the nature of things most, even if not all operands would have been standardised anyway, could this slowing of the machine be justified? Especially if, in most cases, it didn’t make any material difference to the end result. After all the programmer had operations available to make sure the operands were standardised if it mattered. So what does the published documentation tell us? The description of the 320 (Add) instruction reads – Clear L; add the contents of S to the contents of Am; standardise the result.... You will observe that, although we are told it applies standardisation at the end, it doesn’t tell us to standardise at the start. It is silent on the matter. It hints, but it isn’t quite conclusive. My present feeling is that a fairly trivial change may be called for to achieve an authentic emulation. The difficulty is in deciding what is authentic. But no doubt somebody out there can shed light on the matter. 
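The two candidate behaviours can be compared in a few lines of Python. This is a minimal sketch of the simplified model used in this note, not of the Atlas hardware: it assumes positive numbers only, six fraction bits, a binary exponent, and that bits shifted off the right are discarded; it ignores the L register entirely. The names `standardise`, `add`, `value` and the `standardise_first` flag are invented for the illustration.

```python
FRACTION_BITS = 6  # simplified mantissa from this note: sign bit + 6 fraction bits


def standardise(mantissa, exponent):
    """Shift the mantissa left until its top fraction bit is set."""
    if mantissa == 0:
        return 0, 0
    top_bit = 1 << (FRACTION_BITS - 1)
    while not mantissa & top_bit:
        mantissa <<= 1
        exponent -= 1
    return mantissa, exponent


def add(a, b, standardise_first):
    """Add two positive (mantissa, exponent) pairs.

    Bits shifted off the right-hand end are discarded (assumed truncation).
    """
    if standardise_first:
        a, b = standardise(*a), standardise(*b)
    (ma, ea), (mb, eb) = a, b
    # Destandardise the operand with the lower exponent.
    if ea < eb:
        ma >>= eb - ea
        ea = eb
    else:
        mb >>= ea - eb
    total = ma + mb
    if total >> FRACTION_BITS:  # mantissa overflow: shift right one place
        total >>= 1
        ea += 1
    return standardise(total, ea)


def value(number):
    """Convert a (mantissa, exponent) pair to an ordinary Python number."""
    mantissa, exponent = number
    return mantissa * 2 ** exponent / 2 ** FRACTION_BITS


three = (0b110000, 2)                 # 0.110000 x 2^2
thirty_four_std = (0b100010, 6)       # 0.100010 x 2^6
thirty_four_unstd = (0b010001, 7)     # 0.010001 x 2^7

print(value(add(three, thirty_four_std, standardise_first=False)))    # 37.0
print(value(add(three, thirty_four_unstd, standardise_first=False)))  # 36.0
print(value(add(three, thirty_four_unstd, standardise_first=True)))   # 37.0
```

Switching `standardise_first` is the "fairly trivial change" in question: the extra pass recovers the lost bit in the third case, at the cost of work that is wasted whenever the operands are already standardised.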
It has also occurred to me that builders of replica hardware must face the same difficulty from time to time. But the problem is far from new. It was, if I recall, the late Terry Muldoon of the IBM Hursley Museum who once remarked that Hursley, having produced the first member of the 360 range (model 40 since you ask) had it easier than their colleagues in other IBM laboratories, because everybody else had to conform to what Hursley had produced, whatever that was. But at least the other labs had a point of reference available. Atlas people will recognise that I have grossly oversimplified the nature of the machine. But I have done so in order to distil the issues to a sensible core. Please forgive me. Dik Leatherdale has undue influence in the offices of Resurrection otherwise this article might not have got past the editor. He can be contacted at dik@leatherdale.net. |


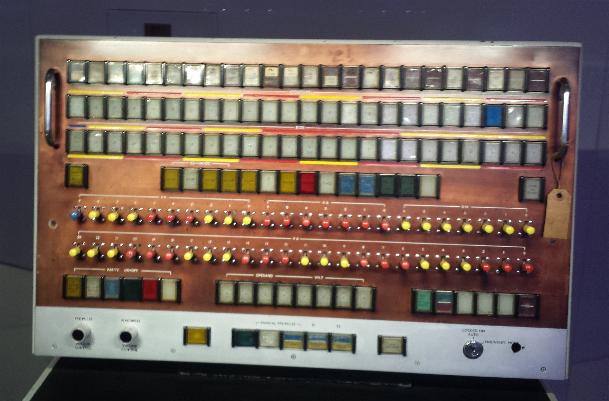

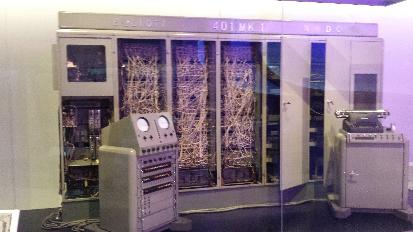

The Science Museum’s Winton Gallery

Kevin Murrell

Letter to the Editor : PLASYD

John Buckle

I was intrigued to see a PLASYD compiler has survived (Resurrection 75, page 5). Since I suspect only a handful of CCS members will have heard of the language, perhaps a brief history would be interesting. In 1967, ICT was planning a number of new software developments, including two FORTRAN compilers, and it was widely agreed that we needed some better development tools. I had been impressed by a paper describing Niklaus Wirth’s PL360 language for the IBM 360 and thought something similar for the 1900 would be useful. The software development unit at that time was open to innovations, and I was allowed to design such a language. A small team was established to write the compiler but I was not allowed to call the language PL1900 – hence the Programming LAnguage for SYstem Development. The restful nomenclature caught on and led to another tool called PSFUL and an operating system named CALM. Like PL360 PLASYD has most of the attributes of a high-level language, in my case, similar to my first love, Algol 60. It thus has declared, named and typed variables, loops, conditional statements and control statements. It also, of course, has assignment statements, but these are restricted to operations that can be easily and efficiently translated into 1900 machine code. Additionally, the programmer has direct access to the machine structure, for example by using one of the integer accumulators as a variable or as a modifier of an array. There was a GOTO instruction, pace Dijkstra, but its use was deprecated. PLASYD was used internally with some success for a number of projects and, although never officially supported externally, was allowed to escape to some privileged customers. It naturally died along with the 1900. If anyone is interested I have a typescript of the PLASYD Programmer’s Guide. It is over half an inch thick so would probably require professional facilities to scan or copy, but anyone is welcome to look at it. 
John Buckle can be contacted at buckle525@btinternet.com.

North West Group contact details

Chairman Tom Hinchliffe: Tel: 01663 765040.

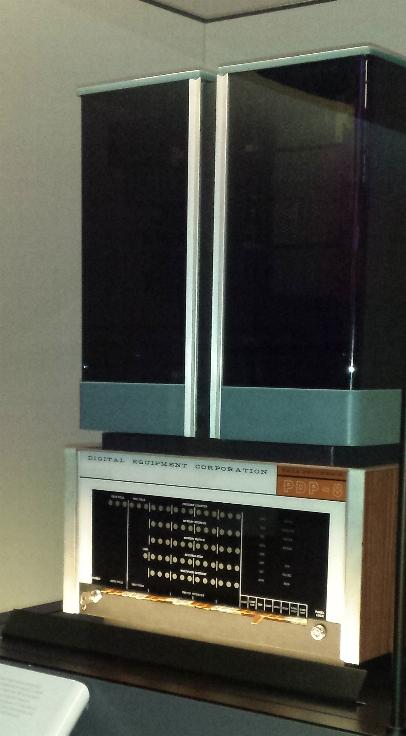

50 Years ago .... From the Pages of Computer Weekly

Brian Aldous

Orders over £3m won by ICT: A fortnight in which ICT has announced orders for over £3 million worth of equipment from the UK and from Eastern and Western Europe has culminated with a £580,000 order from the Scottish Gas Board for a 1905F installation. This follows the delivery of the first of five 1905s ordered by the Central Electricity Generating Board for £1.5 million. (CW024 p1)

PDP production line at Reading: The first stages of the establishment of full scale production of the PDP range of computers are now in progress at the new 7,000 sq ft factory of Digital Equipment Corp (UK) Ltd, at Reading. At present PDP 8 machines are assembled from components from the US. (CW024 p3)

Countrywide grid for Midland: All branches linked on-line to a computer complex by 1971 is the target set by the Midland Bank under a plan announced this week. It will be the largest on-line banking network in the world. (CW025 p1)

ADA control system for Navy’s missiles: The latest County Class guided missile destroyers, HMS Fife and HMS Glamorgan, have been fitted with Ferranti Action Data Automation system – ADA – which employs two Ferranti Poseidon computers to achieve optimum use of the armament systems. (CW026 p2)

Name and HQ change for EELM: A new name and new sales headquarters were the two major announcements by Lord Nelson of Stafford at yesterday’s annual meeting of English Electric Computers Ltd, the new title for English Electric Leo Marconi. (CW027 p1)

FACE aids gunners in the field: The first large scale battlefield application of computers is marked by a £2.25 million order for Field Artillery Computer Equipment, FACE, placed with Elliott-Automation. The order is for a first batch of 76 operational systems. (CW027 p8)

London traffic control scheme: Computer control of 300 traffic signals in Central London at a cost of £1.5 million is planned by the Greater London Council for 1970 as the first part of a three-phase scheme. (CW027 p8)

Hospital to pioneer total data system: A thousand-bed hospital at Copenhagen will pioneer integrated data processing in Europe when a computer system takes over medical and administrative work in the near future, despite reported opposition from some staff members. (CW028 p12)

Burroughs terminals at banks: The choice by both Barclays and the Midland Banks of Burroughs TC 500 terminal equipment represents a major triumph for that company and points to the possibility of at least some measure of standardisation in on-line banking routines in the UK. (CW029 p1)

First System 4 to go East: The first computer using integrated circuits to be sold to an Eastern bloc country, an English Electric Computers’ System 4/50, is to be installed at the Yugoslavian Ministry of Defence next spring. Further orders from behind the Iron Curtain are believed to be on the way. (CW030 p1)

Data service planned by GPO: A National computer service, backed by the government, could become a reality in a comparatively short time if the opportunities offered by the Post Office (Data Processing Service) Bill are fully grasped. (CW030 p1)

Hancock system a World first: A World first, the Hancock Electronic Telecontrol system installed at CAV’s injector factory at Sudbury, Suffolk, aids production control of nearly 200 machine tools by automatic data logging and component counting. (CW030 p4)

Flexible new range built by Siemens: A new family of computers for process control work, the 300 range, has been designed by Siemens AG in Germany, using monolithic integrated circuitry. This is the first range of machines based on micro-miniature circuits to be designed and built entirely by the company, although they have previous experience with these circuits in the American-designed 4004. (CW030 p12)

China buys major machine from ICT: An order for a £500,000 computer system for Communist China was announced in Hong Kong this week by Mr C. B. Oldham, general overseas manager of ICT. No comment was available from ICT in this country. (CW031 p1)

Traffic system in operation at Munich: The traffic control system for Munich was officially handed over to the Munich City Police on April 13. The system already controls about 20 major traffic zones in the city and is capable of expansion to deal with up to 60 zones each controlling from two to 15 intersections. (CW031 p8)

E-A traffic systems in Spain: Self-adaptive methods of control, in addition to normal program selection techniques, will be incorporated in traffic control systems that Elliott Automation are to supply for Madrid and Barcelona. (CW032 p1)

Number cruncher project by Elliott: Proposals for a large fast computer system which have been presented to the Ministry of Technology and the National Computing Centre, are being announced today by Elliott Automation. The proposal is for two machines, an NCR Elliott 4140, with a capacity of about seven IBM 7090s, and an NCR Elliott 4150 equivalent to about 15 Atlas computers, or 50 IBM 7090s. (CW033 p16)

Remote loading at oil depot: The first remote-controlled rail car filling system in Britain has gone into operation at the Shell refinery at Stanlow, Cheshire. The system was developed by Shell in collaboration with Avery Hardoll and Sperry Gyroscope. (CW034 p16)

Elliott’s all-British conversion system: Greater efficiency in the use of computers for scientific test analysis is expected to result from a new magnetic tape-to-tape conversion unit produced by Elliott Automation. This new, all-British unit is considerably less expensive and is claimed to be more accurate than any comparable equipment produced in the USA. (CW035 p1)

NCIC is nucleus of new DP Network: Nucleus of a new data processing-communications network for the “instant” exchange of police information on a nationwide level is the National Crime Information Centre, Washington. Located at FBI headquarters, NCIC uses high-speed files controlled by two IBM 360 computers. (CW035 p7)

US bureau firm moves into UK: One of the major independent US bureau organisations, the University Computing Co. of Dallas, Texas, has moved into the UK market through a merger with Computer Services (Birmingham) Ltd, and its associated Irish company, Computer Bureau (Shannon) Ltd. These two companies are now wholly owned subsidiaries of UCC. (CW036 p1)

Stage set for a Scottish computer grid: Although still very much in the future, there is among Scottish local authorities a growing awareness of the benefits of educational television and hence a growing amount of high class co-axial cable in Scotland of the kind most suited to accurate data transmission. (CW036 p10)

Contact details

Readers wishing to contact the Editor may do so by email to

Members who move house or change email address should notify Membership Secretary Dave Goodwin

(dave.goodwin@gmail.com)

of their new address or go to

Queries about all other CCS matters should be addressed to the Secretary, Roger Johnson at r.johnson@bcs.org.uk, or by post to 9 Chipstead Park Close, Sevenoaks, TN13 2SJ.

Forthcoming Events

London Seminar Programme

London meetings take place at the BCS in Southampton Street, Covent Garden starting at 14:30. Southampton Street is immediately south of (downhill from) Covent Garden market. The door can be found under an ornate Victorian clock. You are strongly advised to use the BCS event booking service to reserve a place at CCS London seminars. Web links can be found at www.computerconservationsociety.org/lecture.htm. For queries about London meetings please contact Roger Johnson at r.johnson@bcs.org.uk.

Manchester Seminar Programme

North West Group meetings take place in the conference room of the Royal Northern College of Music, Booth St East M13 9RD: 17:00 for 17:30. For queries about Manchester meetings please contact Gordon Adshead at gordon@adshead.com.

Details are subject to change. Members wishing to attend any meeting are advised to check the events page on the Society website at www.computerconservationsociety.org/lecture.htm.

Museums

MSI : Demonstrations of the replica Small-Scale Experimental Machine at the Museum of Science and Industry in Manchester are run every Tuesday, Wednesday, Thursday and Sunday between 12:00 and 14:00. Admission is free. See www.msimanchester.org.uk for more details.

Bletchley Park : daily. Exhibition of wartime code-breaking equipment and procedures, including the replica Bombe, plus tours of the wartime buildings. Go to www.bletchleypark.org.uk to check details of times, admission charges and special events.

The National Museum of Computing : Thursday, Saturday and Sunday from 13:00. Situated within Bletchley Park, the Museum covers the development of computing from the “rebuilt” Colossus codebreaking machine via the Harwell Dekatron (the world’s oldest working computer) to the present day. From ICL mainframes to hand-held computers. Note that there is a separate admission charge to TNMoC which is either standalone or can be combined with the charge for Bletchley Park. See www.tnmoc.org for more details.

Science Museum : There is an excellent display of computing and mathematics machines on the second floor. The new Information Age gallery explores “Six Networks which Changed the World” and includes a CDC 6600 computer and its Russian equivalent, the BESM-6 as well as Pilot ACE, arguably the world’s third oldest surviving computer. The new Mathematics Gallery has the Elliott 401 and the Julius Totaliser, both of which were the subject of CCS projects in years past, and much else besides. Other galleries include displays of ICT card-sorters and Cray supercomputers. Admission is free. See www.sciencemuseum.org.uk for more details.

Other Museums : At www.computerconservationsociety.org/museums.htm can be found brief descriptions of various UK computing museums which may be of interest to members.


Committee of the Society

Computer Conservation Society

Aims and objectives

The Computer Conservation Society (CCS) is a co-operative venture between BCS, The Chartered Institute for IT; the Science Museum of London; and the Museum of Science and Industry (MSI) in Manchester. The CCS was constituted in September 1989 as a Specialist Group of the British Computer Society (BCS). It thus is covered by the Royal Charter and charitable status of BCS. The aims of the CCS are to

Membership is open to anyone interested in computer conservation and the history of computing. The CCS is funded and supported by voluntary subscriptions from members, a grant from BCS, fees from corporate membership, donations, and by the free use of the facilities of our founding museums. Some charges may be made for publications and attendance at seminars and conferences. There are a number of active Projects on specific computer restorations and early computer technologies and software. Younger people are especially encouraged to take part in order to achieve skills transfer. The CCS also enjoys a close relationship with the National Museum of Computing.
