
Computer

RESURRECTION

The Journal of the Computer Conservation Society

ISSN 0958-7403

|

Number 79 |

Autumn 2017 |

Contents

| Society Activity | |

| News Round-up | |

| Making IT Work — A Report | Nicholas Enticknap |

| Lost Bits Regained? | Brian M. Russell |

| A Multiple Curta | Herbert Bruderer |

| A Life in Computing | Peter Tomlinson |

| Obituary : Hamish Carmichael | Dik Leatherdale |

| Book Review : The Story of the Computer | Dik Leatherdale |

| Book Review : The Turing Guide | Dan Hayton |

| 50 Years Ago .... From the Pages of Computer Weekly | Brian Aldous |

| Forthcoming Events | |

| Committee of the Society | |

| Aims and Objectives |

Society Activity

|

EDSAC Replica — Andrew Herbert

The main activity at present is the modification of the main control circuits to use an improved bistable design. This has been shown to work satisfactorily and activity is now focussed on ensuring all the signals generated by main control are at a sufficient level to trigger the functions they initiate in the arithmetic and input/output systems.

The final batch of metalwork for the remaining chassis to be constructed (printer driver, clock monitor unit) has been ordered and delivery is expected soon. The three printer chassis were designed by Martin Evans and will be constructed by Dave Milway; the clock monitor was designed and will be built by Chris Burton. We have also ordered the metalwork for the long delay lines used in the main store. Peter Linington continues his work to improve the reliability of these delay lines ahead of construction and early results are promising.

Les Ferguy, Peter Haworth and Don Kesterton are assisting Peter with the cabling from storage regeneration chassis for EDSAC registers to the short delay lines located in the large coffin at the rear of the machine. The design and layout of the operators’ desk has been finalised by Les Ferguy and is under construction.

A newly joined American volunteer, Nigel Atkins, who works at the Smithsonian Astronomical Laboratory, has started an investigation into the construction of the EDSAC photoelectric tape reader. Chris Burton has designed and constructed a suitable bell and circuit to be exercised by the Z instruction (“stop and ring the bell”). A large digital monitor has been set up at the front of the gallery by Bob Jones, with a touch panel user interface on the barrier rail which allows visitors to select from a variety of videos and slide shows about EDSAC and the reconstruction project.

There is some hope the machine will be executing some instructions from the engineers’ switches in the not too distant future. |

|

Turing Bombe — John Harper

In Resurrection 78 I reported that this July was the tenth anniversary of the official switch-on of our machine by HRH The Duke of Kent. To celebrate this we collected together all the records of our achievements over the last ten years and turned them into a pictorial slide show. We were fortunate to have been given a large flat screen television on which we now display the compilation in our area. We have surprised ourselves with how much has happened since July 2007. The display runs for about eight minutes and then repeats. It will be run at all times that the main machine is being demonstrated. |

|

IBM Group — Peter Short |

|

We were contacted in June by IBM Oslo to say that, as they are moving offices later in the year, they would like to donate a number of artefacts to the Hursley Museum. This turns out to be a treasure trove of time recording and punch card/unit record equipment, together with many other unique items. The IBM Norway manufacturing plant produced time recording equipment from 1952 until 1960, as well as typewriters from the late 1950s. At the moment we are trying to work out the funding arrangements for shipping. Highlights include a 1930s 416 tabulator and 550 interpreter, and 1950s 557 interpreter and 519 reproducing punch. There’s also a 5496, the 96 column card punch released with System/3 in the early 1970s, probably the last ever rehash of the punch card. We now also have a Sequent server rack. The Sequent is on display as an example of IBM acquiring companies for their technology. Sequent had developed advanced hardware and software to allow a large number of processors to operate as a single system, with quick scalability to manage unpredictable workloads. However the company was not successful in leveraging the technology. When IBM acquired Sequent in 1999, the company marketed Sequent products and subsequently used them in its own products. |

North West Group contact details

Chairman Tom Hinchliffe: Tel: 01663 765040.

|

|

Manchester Baby a.k.a. SSEM — Chris Burton

Members of the team of volunteers continue to talk to and demonstrate the machine to large numbers of visitors on four days per week. A trickle of new volunteers is taken on who are then trained in the various roles leading eventually to diagnosing and repairing faults. Relatively few faults occur, though when they do they are often difficult to fix until one of the top repairers is available. Fortunately, visitors seem to be impressed by the machine and the story of the original, even when there is a fault present.

For the last few years members of the team have participated in the annual MakeFest festival at the Museum, held this year on 19th/20th August. The “Baby” contribution was again co-ordinated by Brian Mulholland and supported by other team members and by Museum staff. It was a popular and successful weekend. Visiting children were invited to code their name, which they then saw scroll across the monitor display tube, followed by receiving a certificate containing a photo of their personalised display. Over the two days 187 certificates were handed out and carried away with great delight. |

IEEE Annals of the History of Computing

Concessionary Rates for CCS Members

By arrangement with the publishers, members of the CCS are eligible for substantially reduced subscription rates. An annual subscription currently costs £55.00 for four print-copy issues including postage within the UK. If you are interested in taking advantage of this arrangement please let me know. The first issue for new subscribers will be available in January 2018. Please register your interest by emailing Doron Swade. Expressing interest at this stage is not a commitment to subscribe. Registering an interest now simply allows me to send you further details closer to renewal time.

IEEE Annals of the History of Computing is the pre-eminent archival journal for the history of computing. Launched in 1979 it is a peer-reviewed quarterly publication featuring articles by computer experts, historians, and practitioners, and includes unique first-hand accounts by computer pioneers. |

|

Software — David Holdsworth

Kidsgrove Algol

There is a little demonstration (completely revised over the summer) at: kdf9.settle.dtdns.net/KDF9/kalgol/DavidHo/demo.zip. It compiles an Algol60 program and runs it load-and-go (something the real system never did). Unlike earlier demos, it is engineered much like the real system, with the bricks (overlays) on an emulated magnetic tape, and makes very few demands on the system. All you need is a C compiler. It has run on Windows10, Windows98SE, Raspberry Pi GNU/Linux, FreeBSD, Solaris, Ubuntu and MacOSX, i.e. widely differing versions of Windows and various UNIX-like systems, on Intel, ARM and SPARC architectures.

There have again been various improvements to David Huxtable’s brick 20 (the most indispensable missing component), and we are reasonably confident that it is close to the original. (You may recall that Brian Wichmann found a handwritten version in the Science Museum’s Blythe House.)

We have been presenting our system to Bill Findlay’s emulator ee9, which we also use for our Whetstone Algol facility. Just as Kidsgrove Algol proved quite a good test for real KDF9s, so also with ee9. In doing so we have turned up some dubious code in the original.

We do not have brick 84, the Usercode compiler for use with POST, but I have made a substitute out of the paper tape compiler, by writing the generated usercode on mag tape in paper tape code. The mag tape read instruction on KDF9 is identical to that for reading paper tape so, as long as I write the MT blocks to match the paper tape read instructions, it all works.

It was reported previously that Brian Wichmann discovered a listing of the Paper Tape Usercode Compiler in the Science Museum archives. Our initial confidence in the quality of Brian’s copy-typing proved ill-founded. He made so few errors that it all seemed perfect. Copy-typing a second version from the photos uncovered a few more errors. This tends to corroborate my view that duplicate independent versions are best for recovering software from printer listings. This said, most of the Kalgol bricks have been recovered by Terry Froggatt’s OCR, and dogged proof reading coupled with a dictionary of common OCR mis-reads. It remains to write a lot of this up.

Brian Wichmann still has his Algol60 test suite, and he is ready to try it with our resurrected Kidsgrove Compiler. |
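The case for duplicate independent transcriptions can be illustrated with a few lines of Python. This is only a sketch of the idea, not the tooling the team used: the function name `disagreements` and the two sample fragments are invented for the illustration. It compares two copy-typed versions line by line and reports where they differ, on the assumption that two typists rarely make the same mistake in the same place.

```python
from itertools import zip_longest

def disagreements(version_a, version_b):
    """Return (line_number, text_a, text_b) for every line where two
    independently typed transcriptions of a listing differ."""
    lines_a = version_a.splitlines()
    lines_b = version_b.splitlines()
    report = []
    pairs = zip_longest(lines_a, lines_b, fillvalue="")
    for number, (a, b) in enumerate(pairs, start=1):
        # Trailing spaces cannot be seen on a printer listing, so ignore them.
        if a.rstrip() != b.rstrip():
            report.append((number, a, b))
    return report

# Invented sample text: typist A types letter O for zero on line 2,
# typist B drops a semicolon on line 3.
typed_a = "begin integer i;\n  i := 1O;\n  print(i);\nend\n"
typed_b = "begin integer i;\n  i := 10;\n  print(i)\nend\n"

for number, a, b in disagreements(typed_a, typed_b):
    print(f"line {number}: {a!r} vs {b!r}")
```

Each flagged line still has to be checked against the photograph of the listing to decide which version is right, and an error made identically in both versions goes undetected, which is where proof reading and a dictionary of common mis-reads remain useful.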

|

Elliott 903 — Terry Froggatt

Various sci-fi aliens oft warn us that “resistance is useless/futile”, but readers of Resurrection 77 will know that it was a missing pull-up resistor on a board of the TNMoC 903 which made all of the difference to the 8K extension store.

Amongst the circuit boards in a 903 are 18 nominally identical A-FA boards. Each board holds one bit of each of the user-visible A & Q registers and of the registers used to access the store. The circuitry consists of a number of LSAs (Logic Sub-Assemblies) on daughter boards, and some discrete resistors.

Over the last decade I’ve worked on seven 903 CPUs (the one at TNMoC and their incomplete spare, one in Jim Austin’s collection, two of Don Hunter’s both now in Cambridge, and my own two). I’ve noticed that the number of discrete resistors on the fronts and backs of these boards varies, although within any one 903, the total number of resistors on each of the 18 boards is usually the same. The recent problem with the extension store prompted me to look closer, and to check the values of the resistors, not just the quantity. Given a total of 126 A-FA boards, typically with 15 resistors each, this was a task I’d been putting off for most of that decade.

Each A-FA board has a distinct serial number, but the boards in any given 903 do not have 18 consecutive numbers. In fact there is a considerable overlap between, for example, the TNMoC 903 and one of Don’s and one of mine. When sorted by serial number, some sense emerges. There have been four designs of the printed circuit (with non-overlapping serial numbers), progressively supporting an increasing number of resistors, fitted to the front of the board near the edge connector. Resistors not supported by the track design (whether added in manufacture or later) are tacked onto the rear of the board.

Each A-FA board provides signals to the optional engineering display unit, to show the register contents on neon lights.
R1 to R8 are 3.9KΩ resistors which isolate the registers from noise and cross-talk on the display unit cables. But R1 & R2, giving the result & carry from the adder, have been omitted from all of the boards except in the spare, oldest, CPU, because the display unit does not display them. This may have saved a few pence, but the 36 wires are nevertheless present in the cables with their expensive connectors.

R9 to R17 are 1.0KΩ or 2.2KΩ pull-up resistors to the +6 volt rail. I have six circuit diagrams which include the A-FA board. Three omit R17, but it has been added in red ink on two of them, and it is printed on one, which implies that this is the “latest” circuit. R10 is printed as 2.2KΩ in older circuit diagrams but is printed as 1.0KΩ in this latest circuit. The values for the other resistors agree across all six diagrams.

In the three newer 903s, the boards use the final fourth design and do have the resistors shown in the latest circuit. In Don’s older 903 there are signs that R10 has been changed from 2.2KΩ to 1.0KΩ, and on my older 903, which hails from Mobil’s Coryton oil refinery, 1.0KΩ resistors have been added to the rear of the boards, incorrectly paralleling the 2.2KΩ resistors on the front. Given that the serial numbers on these two 903s overlap, the changes must have been made on a CPU basis (possibly in the field) rather than to the A-FA boards as they were manufactured.

This leaves TNMoC’s two CPUs. In the main CPU, two boards were correct, but the other 16 had the old value of R10. Rather than attempting to unsolder the 2.2KΩ resistor from the front of these boards, I tacked a 1.8KΩ resistor on the rear, in parallel. The boards in the spare CPU not only had the old value of R10, they also all lacked R14 to R17. So I moved the 2.2KΩ R10 (which was on the rear) to R15 (which should be 2.2KΩ), and added four new resistors to each board.
After some 200 short lengths of sleeving had been cut and some 200 solder joints made, TNMoC now have a good stock of up-to-date A-FA boards and spares. |
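The choice of a 1.8KΩ resistor tacked across the existing 2.2KΩ R10 can be checked with the standard parallel-resistance formula. The snippet below is a minimal sketch of that arithmetic; the helper name `parallel` is made up for the illustration, and component tolerances are ignored.

```python
def parallel(*resistances_ohms):
    """Combined resistance, in ohms, of resistors wired in parallel."""
    return 1.0 / sum(1.0 / r for r in resistances_ohms)

# 1.8K tacked across the old 2.2K R10: very close to the required 1.0K.
tnmoc_fix = parallel(2200, 1800)

# 1.0K added across the old 2.2K (the Coryton machine): noticeably too low.
coryton = parallel(2200, 1000)

# Size of the survey: seven CPUs with 18 A-FA boards each.
boards = 7 * 18

print(f"2.2K || 1.8K = {tnmoc_fix:.1f} ohms")   # about 990 ohms
print(f"2.2K || 1.0K = {coryton:.1f} ohms")     # about 687.5 ohms
print(f"A-FA boards surveyed: {boards}")        # 126
```

The first combination lands within about 1% of the 1.0KΩ printed on the latest circuit, whereas paralleling 1.0KΩ with 2.2KΩ gives roughly two thirds of the intended value.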

|

ICL 2966 — Delwyn Holroyd

The Making IT Work conference was held in late May, with the workshop sessions taking place at TNMoC. One of the sessions was on the restoration and operation of the 2966. Delegates were given an overview of the various challenges associated with keeping a large machine running in a museum environment and particularly issues associated with disc peripherals. The use of emulation was discussed as a solution to the disc reliability problem.

The band rollers from the line printer are currently being re-covered with rubber. The line printer has been out of action for more than a year since it was discovered that the rollers had severely degraded. There is some uncertainty surrounding the original material specification and dimensions so first time success is not guaranteed. The band speed is servo controlled, so the precise dimension is not critical and we are hopeful that the new rollers will be close enough to work.

The previously reported -5.2V power supply failure on the OCP turned out to be an issue with the sense wiring rather than a real power supply problem. It is working again for now but the sense wiring and crimps may yet need more attention. |

|

ICT/ICL 1900 — Delwyn Holroyd, Brian Spoor, Bill Gallagher

We are still progressing on multiple fronts, juggling different sub-projects, but most effort since the last report has been on the 1901A sub-project, the others either having reached a natural break point or stalled requiring additional information that we have not been able to find.

MAXIMOP

More testing is still required, along with further documentation.

PF56/2812/7903

Stalled awaiting 7930 understanding (7903 mode) and implementation of DDE (2812 mode); understanding of status changes is lacking.

ICT 1830 General Purpose Visual Display Unit

Stalled due to lack of information regarding the display. Has anybody any information on this device, PLEASE?

ICL 1904S

The 1904S peripheral UIs have been completed and are generally working. Some work, with little progress, has been done on the 7930 scanner. A further beta release was planned, but put on hold while tidying the UIs.

ICL 1901A

The 1901A emulator is being revised to correct some hardware bugs found after studying the logics, bringing it into line with the peripheral UIs (as used on the 1904S) and adding other peripherals including an optional console typewriter; the hardware configuration is now defined in a configuration file, rather than fixed. This allows our copy of E1HS to run and E1DS to be loaded.

We can now compile both E1HS (with some source corrections) and the fixed portion of E1DS from reverse-engineered sources using either #NSBL or #APDB and convert the resulting binaries into checksummed ‘wush’ loadable (emulated) card decks or paper tapes.

Effort has been put into understanding the fixed portion of E1DS, which we hope will enable us to recreate enough of the missing overlays for E1DS to run programs on the 1901A. We are not aiming to implement either Automatic Operator or GEORGE1S, unless much more information becomes available, or in the unlikely case that a copy (card, paper tape or listing) of the original overlays is found. |

|

Harwell Dekatron/WITCH — Delwyn Holroyd

The machine has been in almost daily operation during the summer with few issues to report. A number of store anode resistors have been replaced, and several more operators have been trained up. |

News Round-Up

|

Congratulations to Andrew Herbert OBE who has been appointed to the chairmanship of the National Museum of Computing (TNMoC) at Bletchley Park. Andrew is a former chairman of Microsoft Research Europe Middle East and Africa and is presently project manager of the EDSAC Replica project at TNMoC. 101010101 Earlier this year an unidentified professor of cryptography saw a ‘typewriter’ for sale in a Romanian flea market for €100. Except it wasn’t a typewriter, it was an early Enigma (the one without a plugboard). He bought it and put it up for auction where it fetched €45,000. See tinyurl.com/y7lvy3n9. 101010101

101010101 In 2017 Glasgow University is celebrating the 60th anniversary of Scotland’s first university computer. Their web page tinyurl.com/yamonz9l tells us that their EE Deuce was installed in 1958. Confused? Me too! 101010101 Correction Corner: Peter Radford points out that Geoff Sharman’s article Evolution of Enterprise Computing in Resurrection 77 gave the wrong IBM model number for the first cash machine. It should have been 2984. 101010101 We have recently heard of the Sandford Mill Industrial Museum near Chelmsford which boasts a large collection of Marconi equipment dating back to the earliest days. Of particular interest is a Marconi Radar Locus 16 computer dating from the mid-1970s and used (to this day) for missile control by the Royal Navy. This is described as “a work in progress”. The team is also looking for a Myriad computer but has yet to find one. Contact Alan Hartley-Smith at alanhs@alanhs.plus.com if you know of one. 101010101 This summer marked the 50th anniversary of the world’s first cash dispensing machine which was inaugurated by the late actor Reg Varney at the Enfield branch of Barclays Bank in June 1967. Two different systems were introduced within a month of each other. The Barclays system used a machine-readable paper token which was exchanged for cash. It was developed by John Shepherd-Barron of the printing company De La Rue. However, he failed to take out a patent for security reasons. The Westminster Bank (now NatWest/RBS) system used a punched card-like token which, after use, was posted back to the user. It also incorporated the now familiar device of a Personal Identification Number (PIN), which the inventor James Goodfellow did patent, receiving the magnificent sum of £10 for his trouble. Both men were awarded the OBE, but had to wait nearly 40 years for official recognition. Reg Varney is now best remembered for his leading role in the television “comedy” On the Buses. More detail at tinyurl.com/yaz7a6gs. 
101010101 In Resurrection 78 we asked whether anybody could challenge the claim of the Royal Liberty School to have had the first school computer in the UK. Roger Wright tells us that Forest Grammar School in Winnersh, Berkshire got their ex-Nestlé Elliott 405 also in 1965 and that London and Manchester Insurance donated their Ferranti Pegasus to the South Shields Grammar Technical School in August 1966. So, not yet, but it’s close! 101010101 I recently came across tinyurl.com/y6wwl4fy, a web advertisement by IBM in which they extol the undoubted virtues of flash memory as a replacement for disc storage. Discs are denigrated as “complicated”, “clunky” and “ageing”. Remind me again. Who brought discs to market in the first place? Ah yes, the delicious irony! 101010101 Two consecutive stations on the Bergen to Oslo railway rejoice in the names of Ål and Gol. Make of that what you will. 101010101 Manchester University’s Prof. Jim Miles has recently been devoting some of his time to putting the archive of the School of Computer Science into order. In the process he came across a file marked “Alan Turing”. “When I first found it I initially thought: ‘That can’t be what I think it is,’ but a quick inspection showed it was a file of old letters and correspondence by Turing” he said. tinyurl.com/y7kkmj4j has more detail and the catalogue of 141 items of correspondence can be found at tinyurl.com/y7k3vanh. 101010101

101010101 As so often it is our sad duty to report the departure of colleagues and distinguished computer scientists. Our dear friend and former CCS Secretary, Hamish Carmichael passed away in July. We pay tribute to him below. Stalwart of our friends the LEO Society Peter Bird, author of LEO: The First Business Computer, has also died. His obituary is at tinyurl.com/ybf4uzna.

Finally, database pioneer Charles Bachman is no longer with us. Members may recall his fascinating 2008 presentation to the CCS, A Life with Databases. A Distinguished BCS Fellow, he was 92. 101010101 |

Making IT Work - A Report

Nicholas Enticknap

It is hard to believe that the CCS is “only” 28 years old. We started life in 1989 in the Old Canteen of the London Science Museum, a disused and neglected building gathering dust. Our precious machines such as the Pegasus and the Elliott 803 were housed there, and the early CCS committee meetings took place there. It seems an age ago: there was no Internet back then, and mobile phones scarcely existed either — the names of the committee members in the early issues of Resurrection were referenced by postal addresses and landline telephone numbers.

In those days computer conservation was a pioneering activity. There were no guidelines or academic precepts anywhere, and the first project leaders had to rely on their own experience and professional expertise to decide what to do and how to do it. The Science Museum was involved from the start and could provide space for us to work in, but its own computer exhibits of those days were static objects, not working machines. The CCS was breaking entirely new ground.

Now computer conservation is practised all over the world, and is an established activity that has come of age. As Doron Swade puts it, “The drive for such activity appears irrepressible”. Its UK centre is the National Museum of Computing at Bletchley Park, where the immaculately presented operational exhibits in a surgically clean building accompanied by detailed information boards contrast strongly with the “In Steam” days held in the near derelict Old Canteen.

In May The Computer Conservation Society and the National Museum of Computing jointly organised a two day conference designed to summarise the state of play in computer conservation, to take stock of where those 28 years of activity have taken us. 
The first day consisted of formal presentations at the BCS headquarters building in London addressing the questions of, basically, what we are doing and why we are doing it: how to set about computer conservation, how to overcome the difficulties that typically present themselves, and what we can expect to get out of it. The second day took place at Bletchley Park and was designed to put flesh on the bones of the theorising of the first day. There was a series of five workshops consisting of talks about five exhibits, followed by demonstrations of those machines and then question and answer sessions. The list of speakers illustrated how widespread computer conservation is today. They included Jochen Viehof and Johannes Blobel from Paderborn in Germany who have built a reconstruction of an ENIAC accumulator, Robert Garner from the California Computer History Museum who has led a reconstruction of a DEC PDP-1 and two IBM 1401s, and Nicholas Hekman from the Vintage IBM Computing Centre in New York state who has participated in the restoration of an IBM Series 360 line printer.

This globalisation is one of the major differences between then and now. Another is a widening of scope. In 1989 the CCS was trying to preserve and return to working order existing ancient computers such as the Pegasus that had survived. Today many projects involve or have involved the recreation of early machines that have long since ceased to exist, such as Colossus and the Manchester Baby, while conservation of original machines that have survived, such as the Harwell Dekatron, is the exception. Most such restoration projects today involve much more modern machines, such as the ICL 2966 and the Iris air traffic control system at the National Museum of Computing. This aspect was emphasised by Doron Swade in the first of the presentations at BCS HQ, which set the tone for what was to follow as it analysed what we are trying to do and what benefits we expect to gain from doing it. Swade drew distinctions between conserving, restoring, replicating, reconstructing and simulating computers, and admitted that even this was not a complete list of the types of project taking place; his own realisation of Babbage’s Difference Engine number 2 has to be described as a “construction” as “there is no original machine of which this is a repeat”. Leaving aside these semantic differences, what are computer conservation projects of all these different types trying to achieve? It might be expected that any target machine should be restored to its original state, or at least to a state it occupied at one part of its life (the EDSAC replica currently being built, for example, is a re-creation of the machine as it was in 1951, rather than in 1949 when it first started operation). Not so: apart from the fact that many of the machines are incomplete (the 2966 at Bletchley Park does not have its original printer, for example, though it does have one just like it), few of the machines that have been preserved or reconstructed have complete documentation surviving. 
Even where circuit diagrams and software exist, other necessary information may be missing. For instance, in the case of metallic objects, data on the thickness of the metals used is often missing and makes re-creating these parts “as new” problematical. Often, and particularly with earlier machines, there is very little surviving data whether in the form of drawings, notes or anything else. Doron Swade pointed out also that for the Colossus replica project undertaken by Tony Sale “documentary sources were somewhere between incomplete and non-existent”, and described Sale’s machine as “a remarkable achievement of detective work, research, stamina and ingenuity”.

Andrew Herbert was similarly scathing about what survives of EDSAC documentation — “incomplete, incorrect and sometimes misleading”. Herbert also pointed out that conservation can become an attempt to hit a moving target: “there is evidence of much re-design during commissioning”, and accordingly, “a great deal of forensic research and experimentation has gone into the reconstruction” of EDSAC. Should we then be making machines that merely look like the originals? This question of visual authenticity was touched on in several presentations. For example, the delay lines used in the replica EDSAC are not mercury lines as in the original, but they are housed in boxes (called coffins) designed to look exactly like the originals. Likewise the EDSAC team is using modern resistors and capacitors, as the quality of the original components of these types the team was able to obtain was poor; these modern items are used only on the underside and rear of each chassis, so the lack of visual authenticity is not considered a problem. Swade felt that in any case “visual facsimile is not a prerequisite for meaningful realisation”, and quotes the reconstruction of the 1941 Zuse Z3 by Raul Rojas as an example, in which components are laid out on anachronistic PCBs to convey the logical architecture of the design. Problems that arise include overcoming the lack of documentation. The importance of the memories of those people who worked on the machines in their prime was repeatedly stressed. This is fine for machines like the Iris air traffic control system, which was still operational as late as 2008, but for pioneering machines like Colossus and EDSAC all of the principal designers are now dead. Indeed for Colossus we have the ironic situation that the builder of the Bletchley Park replica, Tony Sale, is himself no longer with us. The importance of training people in the use of 1950s technology was also stressed. 
This is difficult as their mindsets and experiences are so different from those of the people who worked with the technology in its heyday. A glance round the conference attendees reinforced this point; the average age was frighteningly high.

It was repeatedly stressed how essential was the use of enthusiastic volunteers, but this can have its downside. Chris Burton pointed this out in describing the “Pegasus event” of 2009 and its aftermath. The CCS Pegasus was on display at the Science Museum from 2001, being both maintained and demonstrated to visitors by CCS volunteers. But in 2009 during such a demonstration, smoke emerged from the power supply unit and the machine immediately shut itself down. Although it was a relatively small incident, technically speaking, it caused the Science Museum management grave concern, not least because the machine was being handled by non-Science Museum staff. The Museum concluded “live demonstration of these early computers posed a possible risk to the public” and should be terminated. In 2015 the Pegasus was removed to the Museum’s storage facility at Wroughton, where it languishes unattended today, a sad outcome for the most successful of the CCS’s original conservation projects. Another point to emerge was: how long will a restoration or replication survive? The natural assumption is forever, but a moment’s reflection tells us this cannot be so. Usage will wear the machines out, and replacement parts will eventually become unobtainable. “The vision might be that the object survives for maybe a generation after the volunteer’s lifetime, and 25 to 50 years is acceptable”, suggested Chris Burton in his talk on the Manchester Baby replica. He pointed out that this machine has already achieved a 19 year life! Which makes emulation in software of original hardware an important capability. This serves two objectives. First, it saves wear and tear on original machines; thus disc access on the ICL 2966 is simulated to avoid wearing out the original EDS disc drives, which are prone to head crashes. Second, it allows study of the way the machines worked without needing to have the original or a replica machine at one’s disposal. 
How much further on are we as a result of this intensive two day exercise? Doron Swade concluded that an attempt to lay down a blueprint for how a computer conservation project should succeed was doomed to failure. “There is no single answer to the question of what constitutes responsible intervention in the restoration of machines to working order”. The conference and workshops that followed bore him out. Every project team had come across its own difficulties and solved them in its own way, and there was no discernible consensus about what the approach should be. So the conference did not produce a manual detailing how to go about computer conservation. It has produced proceedings which encompass the thinking of many of those who have successfully tackled such projects. But any computer conservation project will necessarily be dependent in the last analysis on the drive and enthusiasm of those that undertake it, and the rules that it follows will be decided by them. Nicholas Enticknap is the founding editor of Resurrection and was a senior journalist on the well-known Computer Weekly newspaper. |

Lost Bits Regained?

Brian M. Russell

In Resurrection 77 we posed the question “Did the Ferranti Atlas floating point arithmetic unit standardise its operands before applying the requested arithmetic function or did it risk losing accuracy if the numbers were not standardised?”. No Atlas expert has come forward, but the responses we have had all agree that the answer is that it did not. Brian Russell’s response below is particularly close to the horse’s mouth and, as a bonus, gives us an insight into some of the early history of the ICL 2900 Series. Thanks also to Michael J. Clarke and Peter Radford for their interesting input.

With no reply to ‘In Search of the Lost Bits’ (Resurrection 77), I submit what I learnt 40 years ago as a programmer writing tests for floating point. I had to get to grips with the detail of every last bit, every lost bit and other interesting quirks of real number arithmetic. Computers do not pre-normalise operands. As a new graduate, I joined ICL West Gorton as a programmer in Engineering and Diagnostic Software. Five months later, I found myself responsible for writing logical tests for the 12 floating point instructions in the New Range (2900) order code. Floating point for the New Range, as it was then specified, seems to have been substantially the same as on Atlas. We had an expert from Manchester University (the home of Atlas) who came and talked to us about the philosophy of floating point. We learnt that, for best accuracy over the widest range, the optimum choice of exponent base is ‘e’. Since an irrational base is inconvenient for engineering, most implementations use binary (base 2), hexadecimal (base 16) or, as on Atlas, octal (base 8). Half way through coding, word came that New Range floating point was to change to be IBM compatible.
The program structure was unchanged but all the test data had to be rewritten, after a three month wait to get the details. The new format was to be 1-bit sign, 7-bit true hexadecimal exponent biased by +64 and a 24-bit, 56-bit or 112-bit mantissa (fractional part) with the binary point to the left of the mantissa. In this format, the decimal number 3 is represented neither more nor less accurately than the number 2.999 999 94. In fact, these are two adjacent 32-bit numbers, both to an accuracy of around ±0.3 in the eighth decimal place. You cannot have anything in between. In the previous article, when the numbers 3 and 34 were added to give either 37 or 36, it was said that: “We’re dealing with small integers here so we must regard the answer 36 as ‘wrong.’” No! If you want to deal with small integers and get exact results, then use integer arithmetic. By using real (floating point) arithmetic you are saying that the numbers 3 and 34 represent real quantities to within an accuracy of about ±half-a-digit in the least significant bit of your data format. Adding standardised (Atlas/MU terminology) or normalised (IBM/ICL terminology) numbers gets you an answer of approximately the same accuracy as your operands. With non-normalised operands you lose accuracy; the more non-normal the more loss of accuracy. In particular, if adding a non-normalised zero (zero mantissa with non-zero exponent) to another number, if the higher exponent belongs to the zero then bits will be lost from the other. If the exponent difference is large enough, the other number could be shifted away completely and give a true zero result. Although this runs counter to the mathematical ‘zero is the identity for addition’, it is a fact of life in real arithmetic and warrants an explicit warning in the specification. The New Range specification rather euphemistically said: “The results of all floating-point operations are normalised.
Non-normalised numbers are accepted for all floating-point operations, and will give correct results, albeit with less precision than might have been the case with normalised operands.” My test programs included non-normalised operands to check these gave correct, but less precise, answers and that these answers were correctly normalised. The justification for not normalising before adding is, as the previous article said, that this loses performance and “given that in the nature of things most, even if not all operands would have been standardised anyway, could this slowing of the machine be justified?” Where could non-normalised numbers come from? In any well-written program, numbers will either be in-code literals, which would be normalised by the programmer (or the compiler on his or her behalf), or will be external data input from tape, card or keyboard. If the latter, the program would be presented with a character string in store to be processed by the instruction sequence:

TCH

PK

CBIN

FLT

Table Check (TCH) ensures all characters are decimal numeric. Pack (PK) moves the least significant four bits of each character into the accumulator. Convert to Binary (CBIN) forms a signed integer and Float (FLT) converts to a real number and incorporates the appropriate exponent. This yields a normalised floating-point number which will remain normalised through all subsequent instructions. If data comes from some other source, such that the programmer suspects that data might arrive in non-normalised form, he or she can begin the computational sequence by adding zero, (RAD/0). The literal 0 will be expanded to all zeros, which is the normalised form of true zero, and the Real Add instruction will have no effect beyond normalising the accumulator and clearing OV (overflow cannot occur, though underflow might in which case an interrupt will occur unless masked). Hence, there is no need for a machine instruction to indulge in pre-normalisation. To maintain adequate precision, machine implementation uses a guard digit. This does not require any extra hardware since a 24-bit mantissa will fit in a 32-bit adder with four bits of guard digit and four bits available for carry. The wording in PSD 2.5.1 is: “Arithmetic results are truncated, i.e. rounded towards zero. Precision is achieved in most cases by the use of ‘intermediate fractions’ which are allowed one or more hexadecimal digits than appear in the result fraction. This is referred to as a ‘Guard Digit’. When normalised operands are used, a single digit is sufficient to ensure that the error in the result of a single arithmetic operation does not exceed unity in the least significant digit of the normalised result. Variations in implementation may produce different results within the above error for the divide function.” This last bit, about “Variations in implementation” was introduced in 1976 for the MDU (fast Multiply Divide Unit) on 2980 and 2976. 
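
The alignment, guard digit and post-normalisation behaviour described above can be illustrated with a small model. What follows is only a sketch under stated assumptions, not the 2900 microcode: it handles positive short-format numbers only, ignores exponent overflow and underflow, and all the names (`real_add`, `value`, the constants) are invented for the illustration. A number is held as an (exponent, fraction) pair, with the exponent in excess-64 form and a 24-bit fraction.

```python
from fractions import Fraction

BIAS = 64        # exponent is held in excess-64 form
FRAC_BITS = 24   # short format: six hexadecimal digits of fraction
GUARD_BITS = 4   # one hexadecimal guard digit (an assumption of this model)


def value(number):
    """Exact value of a positive (exponent, fraction) pair."""
    exp, frac = number
    return Fraction(frac, 1 << FRAC_BITS) * Fraction(16) ** (exp - BIAS)


def real_add(a, b):
    """Add two positive numbers: align, add, post-normalise, truncate."""
    (ea, fa), (eb, fb) = a, b
    if ea < eb:                          # make `a` the larger exponent
        (ea, fa), (eb, fb) = (eb, fb), (ea, fa)
    shift = 4 * (ea - eb)                # alignment shift, in bits
    # Digits of the smaller operand pushed beyond the guard digit are lost.
    total = (fa << GUARD_BITS) + ((fb << GUARD_BITS) >> shift)
    exp = ea
    if total == 0:
        return (0, 0)                    # true zero: every bit clear
    if total >> (FRAC_BITS + GUARD_BITS):        # carry out of the top digit
        total >>= 4
        exp += 1
    while total >> (FRAC_BITS + GUARD_BITS - 4) == 0:
        total <<= 4                      # post-normalise one hex digit
        exp -= 1
    return (exp, total >> GUARD_BITS)    # truncate the guard digit


TRUE_ZERO = (0, 0)
THREE = (65, 0x300000)          # 0.3 (hex) * 16**1
THIRTY_FOUR = (66, 0x220000)    # 0.22 (hex) * 16**2
PI_ISH = (65, 0x3243F6)         # all six fraction digits in use

if __name__ == "__main__":
    print(value(real_add(THREE, THIRTY_FOUR)))     # 37
    print(real_add((67, 0x003000), TRUE_ZERO))     # non-normalised 3 comes back normalised
    print(real_add(PI_ISH, (68, 0)))               # low digits lost to a non-normalised zero
    print(real_add(THREE, (72, 0)))                # shifted away completely
```

In this model, adding true zero leaves a normalised operand untouched and normalises a non-normalised one, which is the effect attributed to RAD/0. Adding a zero fraction with a larger exponent, by contrast, discards low-order digits of the other operand, and with a large enough exponent difference returns true zero.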
Unlike the IBM 360 series, and the ICL 1900 and 2900 series, this hardware would not necessarily produce results identical to previous models in the range. For floating point division, the result would be rounded rather than truncated. For some combinations of divisor and dividend, the quotient could be 1 higher in the least significant bit of the mantissa (and in some very rare cases this could cause overflow which would not otherwise have happened). The result produced is always within the accuracy required by the specification, could actually be more accurate in the sense of Real Arithmetic, but it would not be possible to say whether the result is the same or is 1 higher. For users (or test programmers) who desperately needed to have results consistent with the older range specification, provision was made to turn off division in the MDU and use the older, slower, microprogram. My last job in Software Division, before moving to the Advanced Development Centre, was to specify a test for the MDU; the test to be called MDUT (usually mispronounced as ‘Mud Hut’). The MDU is essentially a 16-bit × 64-bit multiplier. It executes instructions for Integer Multiplication, Decimal Multiplication, Real (floating point) Multiplication, Multiply B, Dope Vector Multiply and Real Division. Performing division in a multiplier requires some ingenuity; first find the reciprocal of the divisor then multiply this by the dividend. Finding the reciprocal is also quite crafty; take a guess at the inverse, multiply by the divisor, see how close this is to unity and make a better guess — each iteration doubles the number of valid bits. To get a head start, make the first guess by looking up a pre-computed table held in RAM. Use a 256-line × 32-bit store indexed by the most significant eight bits of the binary normalised divisor (ignoring the most significant bit since this is always a 1 after binary normalisation and is therefore not significant). 
64-bit Floating Point Division takes eight beats in the MDU compared to some 58 to 72 beats by microcode. The MDU does not do Integer Division or Decimal Division since the concept of a whole number integer or decimal number which is the reciprocal of another integer or decimal does not make any sort of sense. However, like Atlas with its 262 additional pseudo-hardware instructions, the MDU could be used for additional performance-improving functions such as the microcoded square-root routine ... but that is another story.
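
The reciprocal method described above (a table look-up for the first guess, then repeated refinement by multiplication) can be sketched as follows. The refinement step used here, x ← x(2 − dx), is the classical Newton-Raphson form, which matches the description of doubling the valid bits on each pass; that the MDU used exactly this form is an assumption. The table below holds Python floats rather than 32-bit words, and the names `SEED_TABLE`, `reciprocal` and `divide` are invented for the illustration.

```python
# 256 seeds, indexed by the eight fraction bits that follow the leading 1
# of a divisor binary-normalised into the range [0.5, 1). Each seed is the
# reciprocal of the midpoint of its interval (a choice made for this sketch).
SEED_TABLE = [1.0 / (0.5 + (i + 0.5) / 512.0) for i in range(256)]


def reciprocal(d, steps=3):
    """Approximate 1/d for 0.5 <= d < 1 by table look-up plus refinement."""
    if not 0.5 <= d < 1.0:
        raise ValueError("divisor must be binary normalised into [0.5, 1)")
    x = SEED_TABLE[int((d - 0.5) * 512)]   # first guess, good to about 9 bits
    for _ in range(steps):
        x = x * (2.0 - d * x)              # each pass squares the relative error
    return x


def divide(dividend, divisor):
    """Division done as multiplication by the reciprocal of the divisor."""
    return dividend * reciprocal(divisor)


if __name__ == "__main__":
    for steps in range(4):
        # How far d * x is from unity after each refinement pass.
        print(steps, abs(1.0 - 0.75 * reciprocal(0.75, steps)))
    print(divide(3.0, 0.75))
```

Because each pass squares the error, a seed good to roughly 9 bits reaches about 18, 36 and then 72 bits in three passes, so in this sketch three passes are already beyond the precision of a Python float.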


Contact details

Readers wishing to contact the Editor may do so by email to

Members who move house or change email address should notify Membership Secretary Dave Goodwin (dave.goodwin@gmail.com) of their new address or go to

Queries about all other CCS matters should be addressed to the Secretary, Roger Johnson at r.johnson@bcs.org.uk, or by post to 9 Chipstead Park Close, Sevenoaks, TN13 2SJ. |

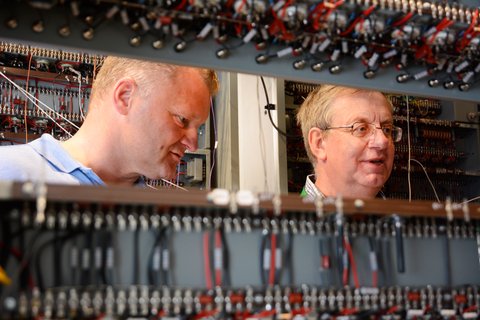

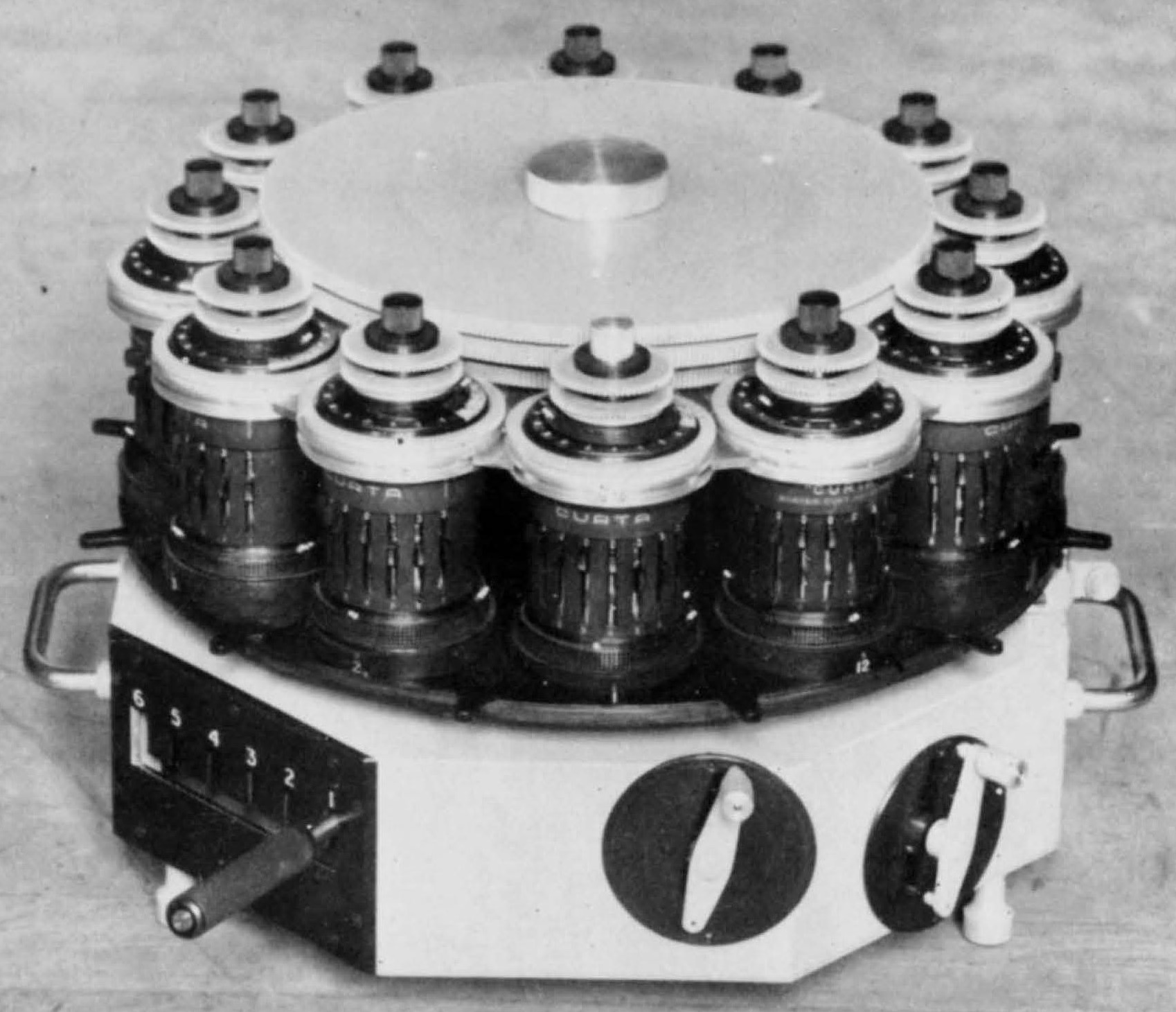

A Multiple Curta

Herbert Bruderer

In Resurrection 75 Herbert Bruderer introduced us to the double Curta.

He has recently discovered a record of an even more impressive device using 12 single Curtas.

Built by the Department of Chemistry at the University of Birmingham in 1953, it is believed to have been used in matrix calculations. A patent was applied for, but does not seem to have been registered. Its fate is unknown but any information would be gratefully received by Dr Bruderer at bruderer@retired.ethz.ch. [ed]

A Life in Computing

Peter Tomlinson

I left school in December 1961 with a place secured for the following autumn to read Physics at Oxford, and the funding (thank you, State Scholarship and a College bursary) to make taking up that place possible. So what should I do for the eight months before becoming the first and only in my family to set foot in an Oxford College? My father knew someone in senior management at Ferranti West Gorton (soon to be part of ICT). So it was as student lab technician at West Gorton that I went for eight months, working in John Allenby’s peripherals group. Atlas/Orion peripherals, plus set-up equipment for the drum store of the then nearly complete Manchester Atlas, were the stock in trade. But, towards the end of that summer, early digital integrated circuits started to appear on the horizon... Three years and a Physics degree later, I went back to work at West Gorton. By then the ICT 1900 series mainframe systems were well established. They were built with discrete component plug-in printed circuit boards. I soon found out that there was an embryo project for a replacement for the 1904, thanks to the development of small integrated circuit modules. So I was able to join the team developing the mid-range 1904A. Dual-In-line 14-pin Integrated Circuits (DILICs) from Texas Instruments were to be used. Not very high-density circuits: on one chip there might be four logic gates or two flip-flops. Another fundamental decision had been taken: we would for the first time simulate the entire core CPU logic in order to prove that the 1904A system would work when built. Logic network simulation software (the SIMBOL project) was being developed for the Manchester Atlas with government funding from the Advanced Computer Techniques Project. So there was created a work routine that lasted for many months until detail design of the core logic of the 4A’s CPU was complete and we had completely codified it in SIMBOL and input the data into the Atlas.
Design work was indeed a production line.

Designers hand drew logic diagrams of fragments that would fit into one or other of the few available types of 14 pin IC, I coded those in SIMBOL logic, a punch operator punched them onto paper tape, then most evenings after work one or other of us would hand carry the day’s tape to the Atlas installation in central Manchester. Next morning (provided that the Atlas had done the business overnight, which was not always the case) one or other of us would collect the printout and take it back to West Gorton for study. Why the uncertainty about Atlas? The mean time between transient failures for the Atlas was about 40 minutes, which was about the time for compiling and running our simulation. Indeed, as the SIMBOL model of the core of the 4A got bigger, there was increasing risk that crashes of the Atlas would scupper the project, but the Atlas operators were a determined team, often willing to re-run our daily update instead of closing down and going home. Of course, those delivery and collection trips could not today be done: we used our own cars for a business purpose, without being insured for the task. To prove that the 1904A would work, it was decided that we would do what you do with any computer to see if it is working correctly: ask it to run a test program. So a loader was written. Then we could do with the Atlas what one does to prove that a 1904 built with transistors is working correctly. Into the Atlas was loaded the basic 1904 test program on paper tape. The Atlas became for a few minutes the slowest ever 1900 Series machine (30 simulated instructions executed per second). The test of the simulated machine worked correctly! Many years later I have donated an archive copy of the 1904A design document to TNMoC at Bletchley Park... Of course, I should not have had that copy... 
But I had decided to explore other fields, and also ICT was merged into ICL, so, after fixing a bug in the 1906A memory management unit, I left for a short further stint in academia, followed by a longer one in university service computing and associated minicomputer purchasing, and then I took the plunge into business. In various ways those later years occupied me and my business colleagues in doing useful things, and paid the staff, but did not make me rich. Two further items stand out from the 1990s to the present day:

A very sensible civil servant was working in the government department tasked with developing a smart ID card. He kept in touch with the development of smart technology, and came to the conclusion that the then current technology of smart cards was not up to the job of securing and evidencing one’s identity. In those days, senior civil servants had small personal budgets, and so it came to pass that he and colleagues pooled their funds so that he contracted with me to write the 1999 Framework for Smart Card Use in Government. That document is now in the National Archives. (It did help in my relationship with civil servants that my father was for many years a reluctant civil servant, conscripted during WW II into the Ministry of Food rather than anything military, found after the war that his old career path had vanished, and was able to stay on in the Ministry of Food and then MAFF: Ag, Fish and Food). At around the same time as the ID Card project (the turn of the Millennium), I had become involved with a group that soon formed ITSO Ltd: methods for using smart cards for ticketing and journey management in public transport. The best bit was working in stages on developments from which emerged the ENCTS card that powers the English National Concessionary Travel Scheme (and equivalents in Wales and Scotland). It is pleasing to use something for which I was one of the team ensuring that ENCTS would work as required. I’m a wrinklie now, with a wrinklie bus pass. |

Obituary : Hamish Carmichael

Dik Leatherdale

It is with a deep sadness that the Computer Conservation Society has received news of the passing of Hamish Carmichael. Hamish came into the IT industry in the late 1950s when he joined Powers Samas not long before it merged with the British Tabulating Machine Company to form ICT. After serving for many years in “Corporate Systems”, ICT/ICL’s internal IT division, he turned his attention to ICL’s well-regarded Content Addressable FileStore (CAFS) product. These days Microsoft employ people called “evangelists”. Their job is to enthuse people about this or that Microsoft product. That’s what Hamish did for the last decade or so of his ICL career. He often began his presentations with “My name is Hamish Carmichael and I’m a CAFS enthusiast”. The success of CAFS was due in no small measure to Hamish’s evangelism. After retirement, he threw in his lot with the CCS, serving for a decade as its secretary and volunteering at the London Science Museum to catalogue their extensive collection of ICL documents to which he added numerous items donated by his wide circle of ICL and ex-ICL colleagues. He also served unsung as the proof reader for Resurrection. But perhaps his proudest achievement was his editorship of two volumes of ICL anecdotes An ICL Anthology and Another ICL Anthology. A few years ago, at the usual Christmas CCS film afternoon, there was a showing of an ICT publicity film from the mid-1960s in which Hamish had been unaccountably cast in the rôle of ignorant customer playing against a professional actor explaining some of the joys of the 1900 Series. As the film ended, audience shouts of “Speech!” brought Hamish to his feet and, off the cuff he proceeded to keep us all in stitches for 10 minutes with witty tales of how the film was made. Hamish was a lovely man, a real gentleman who you couldn’t help but like. It wasn’t just a privilege to have known him, it was a pleasure, a joy. |

Book Review : The Story of the Computer

Dik Leatherdale

Subtitled A Technical and Business History (a nod to Martin Campbell-Kelly?) Stephen Marshall’s opus weighs in at 592 pages — a big book for a big subject. As you might expect from the title it mainly concerns itself with hardware though in the last few chapters software becomes more important. In a series of themed chapters, it progresses from Napier’s Bones to today’s PC. It does not extend to devices such as mobile ’phones in which you might find a processor but confines itself to the conventional idea of a computer. It might otherwise be an even bigger book. Unreasonably big perhaps. I started by consulting the excellent index and looking up the three machines about which I know most — the Ferranti Atlas, the CDC 6600 and the ICL 2900. The first two are given due consideration and weight. Of the last there is no mention, but as something of a dead end in the story that is not perhaps, altogether surprising. Although computing history is a largely American affair, the UK contribution in the first 20 years is given due prominence though I was disappointed that Sinclair and Acorn/ARM merit only passing mentions. The former is perhaps another dead end, but ARM cannot so easily be written off, even though its influence extends outside the book’s limits. Beyond the UK/US, the work of Konrad Zuse is considered but little else. It is sobering to be reminded that the computer upon which this review is being composed is fundamentally no different from the systems developed 40 years ago by Xerox at Palo Alto. The PARC story is fascinating and much better written than in Dealers of Lightning which I have yet to finish and probably never will. Like most readers of Resurrection I reckon to know a fair amount of the history. But I learned much from this book. It’s well-structured and well-written. So, should we recommend it? My review copy was borrowed from the author/publisher. I didn’t send it back. I sent a cheque. ’Nuff said? |

Book Review : The Turing Guide

Dan Hayton

I’m not sure if I should review books. I look at the production values as well as the text and, even with modern printing techniques, some of the photographs are at least “muddy”. Also, in the introduction to Chapter 24 there is an error in the first footnote reference, which was not set in superscript. Having set out my nit-picking approach, the book itself covers the wide range of Turing’s interests and adds to the layperson’s view I had of them and the activities of the Government Code and Cypher School. The 33 separate authors contributed to the eight sections of the book covering biography, his early work, code breaking, early computer development, AI and neuroscience, biology and mathematics. A final section sums up Turing’s Legacy and adds a speculation on the nature of the Universe. Some of the sections seem to be as speculative as the Finale but I’m sceptical of the view of the human brain as a computer. Combining the contributions of many authors from various sources does lead to quite a few “See Chapter nn” entries taking you back and forward through the text. Fortunately, the book is rounded out with Notes on the Contributors, Further Reading, Notes and References, Chapter Notes and an Index which helps explain much. Jack Copeland’s appearance as author or co-author of 16 of the 42 papers makes it more like “Jack Copeland and friends do Turing” but I found it informative.

50 Years ago .... From the Pages of Computer Weekly

Brian Aldous

All-British Punch Unit Introduced: A new all-British keyboard punch verifier is to be put on the market following an agreement between Datek Systems Ltd and Ultronic Data Systems, the business machines subsidiary of Ultra Electronics. Ultronic are to sell and service the equipment which will be made by Datek. (CW51 p.1) 4-K Machine Compiler from ICT: Users of 4K ICT 1901 computers will shortly be able to compile small-scale programs written in ASA basic FORTRAN or IFIP ALGOL. Compilers have been developed which are able to cope with programs of up to 70 or 80 instructions on 1900 machines of a minimum configuration of 4K, 24-bit words of core store, paper tape input and a line printer. (CW52 p.1) Bankers Order £1m System: An ICT 1906E computer system, worth over £1m, has been ordered by the Committee of London Clearing Bankers for their Inter-Bank Computer Bureau planned to come into operation in March next year in Lombard Street. (CW52 p.1) Thin Film Memory Module Developed: The successful development of a new thin film memory module for military computer applications has been announced in the USA by the Federal Systems Division of Univac. The system is claimed to be competitive in cost and superior in performance to conventional core memories in the speed range of 200 to 400 nanosecond cycle time. (CW52 p.9) US Airline buys Elliott 4100: A London International Computer Centre equipped with a £130,000 NCR Elliott 4100 computer is to be set up by TransWorld Airways to handle the payroll for their London, Paris and Rome stations. As well as payroll, the computer’s initial applications will include the provision of passenger and freight sales statistics and analyses, expenses and general ledger analyses and the performance of ticket and airwaybill stock control.
(CW52 p.16) First NRDC Aid for a New Application: The £100,000 computer system which Cunard are to install in their new liner, the Q-4, will be based on a Ferranti Argus 400 computer and will have a range of functions far greater than that of any other existing merchant-ship installation. Cunard have co-operated with the British Ship Research Association, The National Research Development Corporation, Ferranti and the ship builders in the two-year preliminary investigation and research stages. (CW52 p.16) £150,000 Oilfield Program: As part of a five-year oilfield study conducted on behalf of the Iranian Oil Operating companies, CEIR have developed a complex computer model for forecasting the performance of Iranian oilfields. Development costs for the project amount to about £150,000 and the programming effort involved 16 man-years of work. (CW53 p.1) Post Office Prepares for the NDPS: One of the final actions of the last session of Parliament was to pass the Bill allowing the Post Office to establish a National Data Processing Service. Until that Bill became law the department was prohibited from setting up such a service, but a study of data processing developments within the Post Office shows that it was necessary to the reorganisation carried out prior to the Post Office becoming a state corporation. If the Bill had not existed it would have had to have been introduced very quickly. (CW53 p.12) Production Snags Delay System 4: Production problems with peripherals of English Electric’s System 4 computer range have caused delays of up to three months in delivery. Announcing this the company’s marketing director, Mr Kenneth Barge, said the delays would be quickly overcome and that by the end of the year deliveries would be taking place at the rate of one a week. 
(CW53 p.20) Automatic Program Testing with PATSY: A suite of programs for automatic testing of programs on the 1900 series computers with a minimum of 8K 24-bit words of core store has been developed by ICT. Called PATSY, the package allows batches of programs written in PLAN, COBOL or NICOL to be amended, compiled and run with test data and requires the minimum of operator intervention. Source programs are batched on a magnetic tape file and dealt with in sequence in a continuous compilation and testing procedure. Error details are printed out for the programmers’ attention. (CW54 p.3) Health Centre 360/75 System Aids Research: Medical research data from over 300 projects will be processed on an IBM 360/75 system being established at the Centre for Health Sciences at the University of California, Los Angeles. Visual display terminals, such as the IBM 2250, linking remote locations to the system, will provide information in graphic form. The multi-million dollar facility was made possible by a grant from the National Institutes of Health and the 360/75 is replacing the centre’s original IBM 7040 and 7094 computers. (CW54 p.11) £1m Contract for Air Traffic Control Centre: Experimental data processing equipment to be tested at Euro-control’s experimental air traffic control centre at Bretigny, near Paris, will be based on a Marconi Myriad II computer with an associated French machine from CII, the Plan Calcul organisation. A £1 million contract was signed in Brussels last week. (CW54 p.20) NDPS Offered Air Customs Real Time Job: The National Data Processing Service, which was set up under the Post Office (Data Processing Service) Act, has been invited to set up and manage the £2 million real-time computer system proposed by Customs and Excise and the airlines to handle the control of imports at London Airport, Heathrow. 
(CW54 p.20) London University to have CDC 6600: The computing power of London University is to be increased by a factor of at least three by addition of a CDC 6600 installation. The new machine is scheduled for delivery at the end of 1968 and it is estimated that, together with the machines already available, this will satisfy the university’s computing needs until the early 1970s. (CW55 p.1) NCR Introduce the 5900: A low cost bridge between the accounting machine and the general purpose digital computer has been developed by NCR, using components of their now well-established 500 series computers. The 5900 is, like the 500, a visible record computer using magnetic striped ledger cards for permanent data storage, and can be operated by accounting machine operators with little re-training. (CW55 p.2) First System 4 Disc Supervisor: At the same time as English Electric Computers announced the availability of the first disc operating system for System 4, two of the company’s systems programming executives were delivering a paper on the technology of supervisor construction to an ACM-sponsored symposium in the USA. Mr DHR Huxtable and Mr MT Warwick presented a paper entitled Dynamic Supervisors — their Design and Construction in which were described the principles and the techniques employed in the writing of the J-disc operating system for System 4/50 and 4/70 machines which was announced last week. (CW56 p.16) ICT Move into Giant Machine Market: A giant computer, the 1906A, with more than twice the power of Atlas, has been announced by ICT. A prototype has been in operation at the West Gorton plant for some months, and a production prototype is due to be switched on towards the end of 1968. (CW57 p.1) Big Machine Study by EE-EA: The most important task for the joint product planning team of English Electric and Elliott-Automation computer specialists, which is announced today, will be the steering of a project for very large computers. 
The team, headed by Mr Andrew St Johnston, joint managing director of Elliott-Automation Computers, will also be responsible for the introduction of new products and the integration of the current range. (CW58 p.1) Speeding Tempo of Military Operations: To many people the mention of military applications of computers normally conjures up pictures of electronic machines controlling weapons or planning strategic battle manoeuvres. Such eye-catching applications do exist - the British Army has announced that it will use the FACE system (Field Artillery Computing Equipment) for controlling the firing of guns on the battlefield, but they represent only a small proportion of the total use that the army makes of these machines. (CW58 p.8) Low Cost Challenge of new I/C PDP-8: A very competitive newcomer to the ranks of small computers was announced this week when Digital Equipment Corporation (UK) announced the integrated circuit version of the PDP-8, the PDP-8/I, which will sell in this country at a price of only £5,625 for the rack-mounted version. The new machine is fully compatible with both the hardware and software of the PDP-8 and the PDP-8/S. (CW59 p.1) ATLAS for London Bridge Programs: Load-distribution calculations for the superstructure of the new London Bridge have been performed using a suite of computer programs developed by International Computing Services Ltd, ICT’s bureau subsidiary, and run on the Atlas computer in Manchester. The 107-foot wide bridge will be constructed from pre-cast concrete sections of about 10 feet in length, and will have a three-span cantilevered construction with a suspended centre span. It will carry six lanes of traffic and two pedestrian footways, and an elevated walkway will be added at a later date. (CW60 p.20) Heart Patients to go On-Line: An on-line real time system to monitor patients suffering from heart ailments is to be set up at the Royal Victoria Hospital, Belfast, using an IBM 1800 computer.
It is claimed that this is the first such system in the British Isles. (CW61 p.16) Mass Memory by Laser Techniques: A potential key to the growing need for cheap mass memory systems has been developed by scientists at the Honeywell Research Centre, in Minneapolis. The technique makes use of the polarisation properties of the laser, and the stray magnetic-optic effects of a film of manganese and bismuth. (CW61 p.16) Computer Ships for use in Apollo Moon Probes: Five US Navy ships, each carrying computing and message relaying equipment, are to be used as communications outposts during the Apollo moon probes. The equipment will be the same as that used at the majority of the land-based Manned Space Flight Tracking Network stations and includes a data processing system based on the Univac 1230 computer. Three of the ships are to be used during the earth orbital and trans-lunar injection phases of the Apollo flight and the other two will provide coverage during the re-entry period of the spacecraft. (CW62 p.10) Production Control on Kidsgrove KDF9: An advanced control system, devised by English Electric’s bureau division at Kidsgrove, and the aircraft equipment division at Netherton, is being used in the production control of aircraft constant speed drives. These hydraulic drives, which are coupled to an aircraft’s engines to convert the varying power into constant speed to drive electrical generators, contain up to 500 parts, some machined to tight tolerances on advanced N/C machines. (CW62 p.11) Elliott 803 Aids Sheep Breeding: In an effort to improve the breed of Eire’s largest single sheep species — the Galways — the Eire Agricultural Institute are using an Elliott 803 computer, located at their Rural Economy Division in Dublin. (CW63 p.1) Strand Palace DEBBIE: Guests’ accounts at London’s 800-bedroom Strand Palace Hotel are to be handled by a dual PDP-8 system which is due to be installed early next year and come into operation in the autumn.
Known as DEBBIE — Duplex Electronic Billing, Book-keeping and Information Equipment — the £50,000 system will be able to provide a guest with a detailed account within 15 seconds. (CW63 p.1) PCM Comes into Operation: On Tuesday, the Post Office moved from the experimental to the operational application of Pulse Code Modulation (PCM), when PCM telephone circuits came into use between Sunbury-on-Thames and Faraday Building in central London. PCM is a technique allowing up to 24 telephone conversations to be carried over two pairs of wires instead of two conversations as at present. (CW63 p.20) |

CCS Website Information

The Society has its own website, which is located at www.computerconservationsociety.org. It contains news items, details of forthcoming events, and also electronic copies of all past issues of Resurrection, in both HTML and PDF formats, which can be downloaded for printing. Emulators for historic machines, together with associated software and related documents, all of which may be downloaded, can be found at www.computerconservationsociety.org/software/software-index.htm. |

Forthcoming Events

London Seminar Programme

London meetings take place at the BCS in Southampton Street, Covent Garden starting at 14:30. Southampton Street is immediately south of (downhill from) Covent Garden market. The door can be found under an ornate Victorian clock. You are strongly advised to use the BCS event booking service to reserve a place at CCS London seminars. Web links can be found at www.computerconservationsociety.org/lecture.htm. For queries about London meetings please contact Roger Johnson at r.johnson@bcs.org.uk.

Manchester Seminar Programme

North West Group meetings take place in the conference room of the Royal Northern College of Music, Booth St East M13 9RD: 17:00 for 17:30. For queries about Manchester meetings please contact Gordon Adshead at gordon@adshead.com. Details are subject to change. Members wishing to attend any meeting are advised to check the events page on the Society website at www.computerconservationsociety.org/lecture.htm.

Museums

MSI : Demonstrations of the replica Small-Scale Experimental Machine at the Museum of Science and Industry in Manchester are run every Tuesday, Wednesday, Thursday and Sunday between 12:00 and 14:00. Admission is free. See www.msimanchester.org.uk for more details.

Bletchley Park : daily. Exhibition of wartime code-breaking equipment and procedures, including the replica Bombe, plus tours of the wartime buildings. Go to www.bletchleypark.org.uk to check details of times, admission charges and special events.

The National Museum of Computing : Thursday, Saturday and Sunday from 13:00. Situated within Bletchley Park, the Museum covers the development of computing from the “rebuilt” Colossus codebreaking machine via the Harwell Dekatron (the world’s oldest working computer) to the present day, from ICL mainframes to hand-held computers. Note that there is a separate admission charge to TNMoC which is either standalone or can be combined with the charge for Bletchley Park. See www.tnmoc.org for more details.

Science Museum : There is an excellent display of computing and mathematics machines on the second floor. The new Information Age gallery explores “Six Networks which Changed the World” and includes a CDC 6600 computer and its Russian equivalent, the BESM-6, as well as Pilot ACE, arguably the world’s third oldest surviving computer. The new Mathematics Gallery has the Elliott 401 and the Julius Totaliser, both of which were the subject of CCS projects in years past, and much else besides. Other galleries include displays of ICT card-sorters and Cray supercomputers. Admission is free. See www.sciencemuseum.org.uk for more details.

Other Museums : Brief descriptions of various UK computing museums which may be of interest to members can be found at www.computerconservationsociety.org/museums.htm. |

Committee of the Society

|

Computer Conservation Society

Aims and objectives

The Computer Conservation Society (CCS) is a co-operative venture between BCS, The Chartered Institute for IT; the Science Museum of London; and the Museum of Science and Industry (MSI) in Manchester. The CCS was constituted in September 1989 as a Specialist Group of the British Computer Society (BCS). It thus is covered by the Royal Charter and charitable status of BCS. The aims of the CCS are to

Membership is open to anyone interested in computer conservation and the history of computing. The CCS is funded and supported by voluntary subscriptions from members, a grant from BCS, fees from corporate membership, donations, and by the free use of the facilities of our founding museums. Some charges may be made for publications and attendance at seminars and conferences. There are a number of active Projects on specific computer restorations and early computer technologies and software. Younger people are especially encouraged to take part in order to achieve skills transfer. The CCS also enjoys a close relationship with the National Museum of Computing.

|