
Computer

RESURRECTION

The Journal of the Computer Conservation Society

ISSN 0958-7403

Number 84

Winter 2018/9

Contents

| Article | Author |
|---|---|
| Society Activity | |
| News Round-up | |
| Tony Sale Award 2018 | Martin Campbell-Kelly |
| ML/I – Son of GPM? | Bob Eager |
| Elliott 905 ML/I | Andrew Herbert |
| ICL 1900 Extended Floating Point Remembered | Michael J. Clarke |
| A Life in Computing | Roger Collier |
| 50 Years Ago .... From the Pages of Computer Weekly | Brian Aldous |
| Forthcoming Events | |
| Committee of the Society | |
| Aims and Objectives | |

Society Activity


IBM Museum – Peter Short

Current Activities

The week of events in September to mark the 60th anniversary of IBM at Hursley began with the Heritage Open Day on Sunday 16th. Something over 200 people had registered to attend, but it felt like many more were circulating around the museum. The following weekend included an open day for employees, families, retirees and probably anyone else who turned up. This was a huge success: the museum was heaving the whole time, with every room packed with people. The curators were so busy answering questions that we don’t have any photographs to show for it. We do, however, have a lot of positive feedback in the visitors’ book.

As part of the 60th anniversary celebrations the lab also held a Festival of Innovation earlier in the week. This was in two parts. One part featured many business partners and guests showcasing the latest technology, and gave many of those attending the chance to visit the museum and see what has already been achieved. It was opened by Kate Russell from the BBC’s technology programme Click, who later tweeted: “Anyone who loves computers and history HAS to go visit the museum at @hursley_park .. thank you so much for the tour xx”

Schools in the region were also invited, and took part in a problem-solving project involving several areas of the museum. The day was a resounding success for the pupils and their teachers, and it generated a great deal of interest in future school outings to explore the museum further, in particular its operational artefacts, an area on which we are placing ever greater focus.

Discoveries

Those of you who have visited Hursley and been around the museum back office may remember the large walk-in safe. This would have been positioned against the wall of the earlier House before the 1903 extensions were built. A second safe of the same size sits on top of it, with access from a conference room on the floor above. Above that, in the same room, is a further, smaller safe, and for as long as I’ve been volunteering at Hursley we’ve wanted to see what is in it. Not only did we not know where to find the key, but the safe itself sits high up and is difficult to reach. A while ago scaffolding appeared in the room for some maintenance work, and my colleague Nick managed to locate a key. At last came the great reveal: over 300 cardboard boxes, all numbered, containing ALD (Automated Logic Diagram) printouts, other print runs and punched cards. With no obvious explanation of what the contents related to, or why they were there, we removed a small number for further examination. A few attempts at working out what the project was proved fruitless. Last week, I opened another of the boxes to find a printout whose heading referred to MT 2040. Machine Type 2040 was the S/360 Model 40 CPU. We therefore believe we have found the complete S/360-40 logic diagrams, but we still have no idea why they were secreted there.

Computer Museum NAM-IP

Earlier this year, Gilbert Natan contacted us from the Computer Museum NAM-IP in Namur, Belgium, looking for help with an exhibition planned for this November. We were able to provide a large number of scanned photos and some technical information. The exhibition has now opened, and looks very interesting. The S/370 in the exhibition is a Model 138, developed in Hursley. Have a look at www.nam-ip.be for more information.

Donations

We continue to receive donations of hardware, documentation and other artefacts. The latest arrivals include a PC-AT and some older non-IBM kit, including Commodore and Acorn micros, for which we have found new homes with enthusiasts in Hursley. On the customer engineering front, some ESCON tools are on their way to us, including a laser light source and a light meter for measuring propagation/attenuation in fibre cables. During the Open Day, a former colleague from my early days in Hursley offered us a 4691 ‘Moonshine’ leisure/pub terminal. This was based on the PC-XT, with a waterproof membrane keyboard. It needs a good clean after 30 years in his garage, and he has promised to do that before we receive it. My first job when I moved to Hursley from Greenock was competitive analysis for this machine!


EDSAC Replica – Andrew Herbert

We continue to make progress with the commissioning of main control. Our focus remains on the correct functioning of the order fetch and decode cycle. This has been made to run repeatedly for extended periods, but looking closely at the logic analyser traces we still see some anomalies, and we are tracing back to determine what is causing them. Progress has been helped by the acquisition of a discounted demonstration model of the four-channel GW Instek MSO-2204EA digital oscilloscope, which can monitor more signals in greater depth. As we won’t be able to work on the machine during the roof repairs at TNMoC, we plan to use the winter months working away from the machine: building and commissioning the clock monitor and the main store delay lines, and constructing the initial orders logic. We may also look at the final design for the transfer unit, having realised that it needs to allow for longer delays than originally planned. We are working towards being able to demonstrate simple programs running in time for the 70th anniversary of the official opening of the original EDSAC in June 1949.


Elliott 903 – Terry Froggatt

In the previous issue of Resurrection, I reported that we were having problems with the TNMoC 903. During October, I substituted the 18 bit-slice register cards from my own 903 into it, but there was a power failure at TNMoC on the day of my visit. Subsequently we found that the TNMoC 903 was no better for the swap, but the removed cards did work OK in my 903. We were also suspicious of noise on the -6 volt power rail, but this has not been seen again. The TNMoC Large Systems Gallery is expected to be closed for three months over the winter while the roof is refurbished, so we are going to take advantage of this downtime by moving the whole 903 CPU rack, PSU, control panel and some cables to my home, where I can test everything thoroughly, card by card, in my own 903s.

Andrew Herbert adds: The 903 at The Centre for Computing History in Cambridge is now restored to working condition, having been out of action over the summer. A fault was found in the paper tape reader, and a spare reader is being used as a replacement while the faulty one is investigated. There was also a suspected seating problem with the PCBs in the processor rack.


Analytical Engine – Doron Swade

The creation of the cross-referenced database for the set of some 20 Scribbling Books, the manuscript notebooks in which Babbage recorded his workings and thoughts on his engine designs, is almost complete. Tim Robinson, who has been compiling the database, is up to the last year of Babbage’s life (1871) and expects to finish within months. The process has taken nearly three years.

The motive for this undertaking was that, before we could commit to building anything, we needed to be sure that we had reviewed everything Babbage had to say on a particular topic. This task was complicated by Babbage’s practice of returning to the same design issues time and time again over a period of decades. Consequently, related material is scattered unsystematically through the archive of some 7,000 manuscript sheets.

While Tim Robinson’s emphasis so far has been data capture rather than interpretation, several general preliminary findings are already invaluable to the overall enterprise of constructing an Analytical Engine. One is confirmation that not only are the designs incomplete with respect to details of control and overall systems integration (this was anticipated), but that for several critical features Babbage worked out viable alternatives and left the final selection open (method of multiplication, digit precision and method of carriage, for example). Another is that Babbage remained creatively active until the end, with at least one instance of his most sophisticated design being modelled in the period immediately before his death in 1871.

The completion of the database will be a landmark in the developmental trajectory of this project. The next steps are, firstly, a sweep through a relatively small but potentially critical set of ‘mystery’ drawings that have not been catalogued or scanned. This is a manageable clean-up job, undertaken for completeness and in the hope that some final gaps might be filled and some remaining blind references traced. Secondly, we plan to model and build a mechanism (the advanced anticipating carriage mechanism) to assess its logical and physical feasibility, and to use this to develop generic modelling and evaluation techniques that can be extended to each of the core functions.


Our Computer Heritage – Simon Lavington

Since the last Report, new information has been gathered about the deliveries of the following computers:

For three of the above machines, updates for the relevant deliveries pages of the OCH website have been sent to Birkbeck College (the host server) for actioning. At the same time, a consequential update has been submitted for the references page of the Ferranti Mark I/Mark I* computers. New versions of the above four files have not yet appeared on the website. By chance, today new delivery information has been received about deliveries of:

I am still in the process of digesting the new EMIDEC data. When checking has been completed, two updated EMIDEC pages will be submitted to Birkbeck for uploading to the OCH website.


ICL 2966 – Delwyn Holroyd

Several repairs were completed over the summer, and there have been no issues with the system over the last couple of months. The tape decks have been kept very busy reading some of the many tapes rescued from the basement at Bracknell earlier this year. We are hopeful there may be some gems lurking. The system will be shut down and inaccessible until the end of February 2019 due to roof replacement works in Block H.


Harwell Dekatron/WITCH – Delwyn Holroyd

Operation has settled down again since the installation of the prototype solid-state trigger tube circuits a few months ago. We have not yet had time to prototype a circuit based on the GTE175M trigger tubes, and this will now not take place until next year. The First Generation Gallery will be closed and inaccessible until the end of February 2019 due to roof replacement works in Block H.


Software – David Holdsworth

LEO Heritage Lottery Grant

I have exchanged emails with Peter Byford, who has confirmed that he intends our LEO III preserved software to form part of the project.

Kidsgrove Algol

Some time ago we received a scan of an end-user program which included the source text of the Algol library routines. Our current system uses a very minimal library written this century, so it was decided to try to resurrect the genuine library routines. Once again OCR by Terry Froggatt proved invaluable, and led to the unsurprising discovery that we do not quite have a complete listing. However, the missing components are documented in the manual, and it may prove possible to recreate them.

KDF9 Assembly Languages

The text of the web pages still needs serious revision, but the underlying execution of the preserved software works.

ee9 emulator

Bill Findlay has released version 3.1 of his ee9 emulator. It offers improved emulation of KDF9 director mode, and more verisimilitude in the emulation of paper tape characters.


SSEM – Chris Burton

The reproduction Manchester Baby computer continues to provide interest to scores of visitors four days a week, thanks to the enthusiastic and knowledgeable team of volunteers. On an average day, 60 or more adults and 30 children pass through and enjoy hearing about the history of computing in Manchester and the world. Conversations often lead to reminiscences about the visitors’ own involvement in the early IT world. The machine remains reasonably reliable, though the odd glitch keeps everyone on their toes, and more serious problems require calling in the level-four cavalry. A bout of power system faults over the year left us without spare power supply units, so a concentrated burst of tutorial and repair effort took place to get back to having two spare units available. For when these old BT Monarch telephone exchange power units become too difficult to repair, we have a strategy in hand of fitting modern compact power modules inside the existing cases.


Bombe Rebuild – John Harper

In my last report I said that we had sadly lost John Borthwick, who died on 14th August 2018. John was Secretary and a Bombe Trustee. I suggested that I would include a eulogy, but with so many people wishing to say kind words it would be impractical to include it here; it can instead be found at www.computerconservationsociety.org/news/bombe/john borthwick.pdf. John had so many interests, and so many people held him in high regard. He was respected and well loved, and we greatly miss him from the project.

On September 21st 2018 we ran a Bombe roadshow in conjunction with the IFIP World Computer Congress being held in Poznan, Poland. Our event attracted an audience of over 100 on site and was also webcast live. An edited webcast is viewable at wcc2018.org/Enigma-live. It was part of a Congress stream on the History of Computing in Eastern Europe. The day’s original schedule began with an introduction from Roger Johnson, who planned the day. This was followed by the sending of an Enigma message and accompanying crib, and then by another Enigma message. While the Bombe team were finding the key of the day, there were lectures from Dermot Turing on the work at Bletchley, followed by a talk by Dr Marek Grajek on the Polish codebreakers, who had been trained at Poznan University on clandestine advanced mathematics courses. They are now rightly celebrated in Poland as national heroes.

The clutch problem mentioned in the last report made the challenge from Poznan more difficult than it might otherwise have been. Because the problem caused the Bombe to run more slowly than normal, the first message and crib had to be sent ahead of the day. This was explained to the audience, but the rest of the day ran as usual, with regular reports from the Bombe team via two-way video links as the key finding proceeded. “Job up” was called at around 13:00. After the challenge was over we were able to get the Bombe going as originally intended by careful adjustment, but new parts are currently being made which will need to be fitted if long-term reliability is to be achieved.

We now have an Enigma machine back as part of our full demonstration. This is an almost perfect build of a WWII version, albeit made only recently; in all respects it could be of WWII build. It has the full five wheels and allows us to work with any wheel order. This machine was purchased by a very generous gentleman from TNMoC, who has made it available to us to demonstrate. It is already a major new attraction.


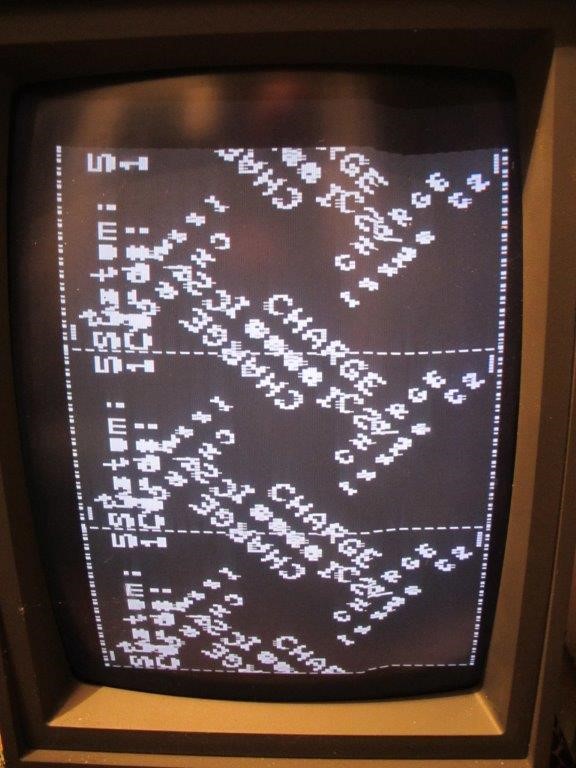

ICT/ICL 1900 – Delwyn Holroyd, Brian Spoor, Bill Gallagher

ICL 1904S/ICT 1905 – Public Release

The public release foretold in the previous update didn’t happen: those ‘couple of minor snags’ turned out to be not quite so minor, but the code review as a whole was successful. We are still planning a public release before the end of 2018, so please watch icl1900.co.uk (or @ICL1900) for further details.

1905

We have successfully implemented E6RM for the 1905, which has identified a few bugs in the emulator, including in previously unexercised features such as Monitor Modes. These bugs have now been corrected, and an earlier (smaller) version of #FLIT (a hardware instruction test/verification program) now runs successfully. With E6RM available, we have also been able to add the disc (EDS8) and slow drum (196x) subsystems. According to the book, these were available under E4BM, but the surviving binary copy that we have does not include these packages.

E6RM

The source version that we have for the E6RM Executive is much later than any that would ever have been tested on an unsuffixed machine (1904/1905/1909), although it was still available for those machines. Not surprisingly, we discovered a few bugs, all of which were trivial to fix, and we now have it running successfully on the 1905 emulator.

Bracknell Tapes

Good progress is being made reading these tapes. So far the obvious highlight has been the Maximop issue tape. Some of the tapes contain dumps of various disc cartridges; these will require a lot of detective work to figure out whether they contain anything interesting.

CCS Website Information

The Society has its own website, located at www.computerconservationsociety.org. It contains news items, details of forthcoming events, and electronic copies of all past issues of Resurrection, in both HTML and PDF formats, which can be downloaded for printing. At www.computerconservationsociety.org/software/software-index.htm you can find emulators for historic machines, together with associated software and related documents, all of which may be downloaded.

News Round-Up


The Science Museum in London writes that the parts of its reserve collection held at Blythe House in Kensington will be transferred to a new facility on its existing offline storage site at Wroughton, near Swindon. Much digitisation (and online availability) of the collection is promised, together with improved access to the reserve collection in its new home once the transfer is completed in 2023.

101010101

Our friends at the LEO Society are custodians of a number of artefacts and a great deal of written material related to LEO Computers Ltd. Like the Computer Conservation Society, they have no premises in which the material can be stored and displayed, and some of it is “at risk” as the individual keepers get older. In concert with the Centre for Computing History in Cambridge, they have applied for and received a grant of £101,000 to develop plans for a facility in which their archive can be secured. If these plans are successful, a full development grant of £265,000 may follow. We understand that digitisation of the written artefacts is intended to be a major part of the exercise. Anybody with LEO artefacts not already known to the LEO Society is asked to get in touch with Hilary Caminer at Secretary@leo-computers.org.uk. Our hearty congratulations to both parties; we wish them well in moving forward to realise their vision in full.

101010101

The ever-industrious Herbert Bruderer writes: I have just published the results of my investigations into the dating of the world-famous Millionaire calculating machine. The English version can be downloaded at www.research-collection.ethz.ch/handle/20.500.11850/296752, and is also available from Communications of the ACM (cacm.acm.org/blogs/blog-cacm/231865-mystery-dating-of-the-world-famous-millionaire-calculating-machine-solved/fulltext). I believe that several machines should be preserved in the UK, e.g. at TNMoC. If you know of other surviving Millionaire machines, please let me know.

Tony Sale Award 2018

Martin Campbell-Kelly

There were five entries for the 2018 Award, any one of which would have been a worthy winner. The geographical diversity of the submissions reflected the extent to which computer conservation has become an international interest: entries came from the UK, Argentina and the Netherlands, with two submissions from the United States.

Interestingly, two of the entries were software-based, with no physical hardware involved. The first of these was Martin Gillow’s Virtual Colossus, a meticulous simulation of the Colossus and the Lorenz SZ40/42 cipher machine with beautifully rendered graphics. The judges were impressed that the simulation runs within a standard web browser, giving it potentially international reach. The second “virtual” entry was the SIMH simulation project, submitted on behalf of Bob Supnik, who originated the system. This US-based project is a framework for developing functional simulators for computer architectures, together with a growing collection of simulators for historic machines. SIMH is now an open source project with numerous participants. Among the many machines simulated are the historic MIT TX-0, the 1950s IBM 650, mainframes from several manufacturers, minicomputers from DEC and other makers, and personal computers.

An entry from Argentina’s Museo de Informática ICATEC, Buenos Aires, was for a replica of the country’s first scientific mainframe, a Ferranti Mercury known locally as Clementina, installed at the University of Buenos Aires in 1960. The original no longer exists, but the project team obtained detailed measurements and specifications from an extant Mercury held in store by the National Museum of Scotland. The original machine was some 18 metres in length, and the full-sized replica is now on permanent display.

A Dutch entry from the Electrologica Foundation was for the restoration of a 1960s Electrologica X8. This machine was sometimes known as “Dijkstra’s Computer” because it was the computer on which Edsger Dijkstra conducted his early programming research and for which he developed the highly influential THE multiprogramming operating system. The computer is now a permanent exhibit in the Rijksmuseum Boerhaave, where it was inaugurated in March 2018 with a major symposium on computer heritage.

The judges were struck by the fact that most entries had a strong local connection, in either the location of production or use, which provided a social and economic context. This was particularly the case with the winning entry, A Trio of Link Pilot Makers, submitted by the Center for Technology and Innovation, Binghamton, New York. Binghamton is the home of Link Aviation, founded in 1935. The American Society of Mechanical Engineers has designated the location an Engineering Landmark as the birthplace of flight simulation. The Center has restored to working order three flight simulators from three different generations of technology: a mechanical system from 1942, a solid-state analogue system from the 1960s, and a digital model from the 1980s. All three systems are on display and usable by the general public, under supervision, at the Center’s TechWorks! facility.


Contact details

Readers wishing to contact the Editor may do so by email to

Members who move house or change email address should go to

Queries about all other CCS matters should be addressed to the Secretary, Rachel Burnett, at rb@burnett.uk.net, or by post to 80 Broom Park, Teddington, TW11 9RR.

ML/I – Son of GPM?

Bob Eager

The recent article on the General Purpose Macrogenerator (GPM) was the inspiration for what follows. While the successor m4 was mentioned, another important development in macro processors was not covered, and it was decided to rectify this. The macro processor in question is ML/I, which has been around since 1966 and is still in use by some dedicated users. It is also interesting to examine its link with some early attempts at software portability. Those wishing to find out more can visit the ML/I website at http://www.ml1.org.uk, where they will find code, documentation, papers, and more.

Introduction

ML/I was a close successor to GPM, although its purpose was more general: it was partly intended as a base for developing portable software. It was written by Peter Brown as part of his Ph.D. research at Cambridge. He always had a somewhat juvenile sense of humour, and decided to position his work as pre-eminent by name alone, as well as having a dig at IBM and PL/I. He therefore called it ML/I – Macro Language I. Part of the dissertation later appeared in a textbook written by Peter, and since ML/I was designed with portability in mind, it was quickly implemented on a number of different systems.

Implementation

Before getting into the nitty-gritty of ML/I itself, it is worth looking at how it was originally implemented. Peter’s plan was that ML/I should be written in a language that could be translated easily (using ML/I, or by some other mechanical means) into a suitable language on the target machine. He decided to use a language designed specifically for expressing the algorithms used by ML/I, which he called a “Descriptive Language Implemented by Macro Processor”, or DLIMP. He named the DLIMP used for ML/I “L”, for Language. L looks much like some other languages of the time, and this is a representative fragment:

```
//SEARCH CHAIN OF NAMES//
[BSNAME] CALL MDFIND
[BSN1] SET CHANPT = IND(HTABPT)PT
[BSHASH] IF CHANPT = NULLPT THEN GO TO BSSWIT
CALL CMPARE(CHANPT+OF(LPT))PT EXIT BSH1
CALL TEBEST
[BSH1] SET CHANPT = IND(CHANPT)PT
GO TO BSHASH
[BSSWIT] TEST BESTPL GOING MNOUT,CALLO,WARNO,CALLO,SKIPPO,DELO
```
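The fragment walks one chain of a hashed table of construction names, comparing each entry with the current input and keeping the best candidate, before switching on the kind of construction found. As a rough modern analogue only, here is a minimal Python sketch. It is not a translation of the L code. The names `Entry`, `NameTable` and `search` are hypothetical, names are modelled as tuples of atoms, the hash uses only the first atom, and TEBEST is assumed to keep the longest matching name; the real ML/I data structures differ.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass
class Entry:
    """One construction on a hash chain (hypothetical layout)."""
    name: Tuple[str, ...]           # name as a sequence of atoms, e.g. ("END", "OF")
    kind: str                       # e.g. "macro", "skip", "insert", "warning"
    next: Optional["Entry"] = None  # chain pointer, cf. IND(CHANPT)


class NameTable:
    """Hash table of construction names with chained buckets."""

    def __init__(self, size: int = 31) -> None:
        self.heads: List[Optional[Entry]] = [None] * size

    def _bucket(self, atom: str) -> int:
        # Hash on the first atom only, so every name starting with it shares a chain.
        return sum(map(ord, atom)) % len(self.heads)

    def add(self, name: Sequence[str], kind: str) -> None:
        i = self._bucket(name[0])
        self.heads[i] = Entry(tuple(name), kind, self.heads[i])  # push onto chain

    def search(self, atoms: Sequence[str]) -> Optional[Entry]:
        """Return the longest stored name that prefixes `atoms`, or None."""
        if not atoms:
            return None
        best: Optional[Entry] = None
        chan = self.heads[self._bucket(atoms[0])]  # SET CHANPT = head of chain
        while chan is not None:                    # IF CHANPT = NULLPT ... BSSWIT
            n = len(chan.name)
            if tuple(atoms[:n]) == chan.name:      # CALL CMPARE (mismatch: skip)
                if best is None or n > len(best.name):
                    best = chan                    # CALL TEBEST (assumed rule)
            chan = chan.next                       # SET CHANPT = IND(CHANPT)PT
        return best


if __name__ == "__main__":
    table = NameTable()
    table.add(["END"], "macro")
    table.add(["END", "OF"], "skip")
    found = table.search(["END", "OF", "FILE"])
    print(found.name if found else None)  # ('END', 'OF')
```

The final line of the L fragment (TEST BESTPL GOING ...) would correspond here to branching on `found.kind` once the search returns.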

With ML/I written in L, the first implementation (on the PDP-7) was produced by hand-mapping the L code into PDP-7 assembly language. This eventually yielded a working version of ML/I, which was then used to map L for the Cambridge Titan, the ICL 1900, the Burroughs B6500 and the ICL 4130 (and possibly some other systems, including the CDC 6000). However, it was quickly realised that writing macros to map L for a given target machine was quite hard, and this problem was exacerbated by the need to ‘push’ the system from another machine running ML/I or an equally powerful equivalent; such a machine might be geographically distant. Macro assemblers were not capable of handling the relatively high-level syntax of L, so it was decided to map L into an abstract assembly language at a much lower level than L; of course, this was done using ML/I itself. The new language was called LOWL. It was then fairly easy (with only a small loss of performance) to write macros, for either ML/I or a local macro assembler, that mapped LOWL into the assembly language of the target machine. LOWL was designed to be a lowest common denominator: the abstract machine it used had just three registers, A (arithmetic), B (index) and C (character), and all of these could be implemented using a single register if desired. If a macro assembler was used, the initial version of ML/I could then be used to generate an optimised version of itself (the author later used this technique for MS-DOS, via Microsoft MASM).

The author ‘discovered’ ML/I in 1971, and quickly set about implementing it on a Honeywell DDP-516. Despite his lack of experience, this was accomplished quite quickly. He has since carried out at least seven more LOWL implementations with little trouble. Having more time on his hands than was good for him, he then decided (in about 1980) that the L source could be hand-mapped to BCPL without too much trouble. This actually took rather more effort than envisaged, owing to the tortuous nature of the algorithms used, but it did eventually result in a solid implementation that exactly replicated the original code. This implementation was used on only one system before the attractions of a C version became apparent, and it was a relatively simple task (as one might expect) to convert the BCPL version almost directly into portable C. That remains the primary source version to this day, and even that dates back to 1984.

It is worth noting that the only significant portability problem arose in 1984, when the Olivetti L1 implementation was produced. ML/I relies on pointers and integers being the same length, and the L1 had 16-bit integers and 32-bit pointers. This was solved reasonably easily by using long integers, and when the same issue arose on a 64-bit Intel system recently, it was trivial to fix simply by setting the right option in a header file. The only other issue encountered was the compiler over-optimising and removing vital code; it seems that ML/I’s logic confused the flow analysis!

Documentation

ML/I was fully documented by its User’s Manual, accompanied by an Appendix for each implementation (to date, there are 28 such Appendices). The User’s Manual was actually part of the original dissertation, and is a model of clarity as well as exhibiting a splendid lack of ambiguity; however, it is hardly a beginner’s document. Peter Brown therefore wrote the Simple Introductory Guide to ML/I, but this too defeated many readers. Eventually, the author wrote an ML/I tutorial, intended to get users up to speed and to a point where they could absorb the Simple Introductory Guide.

Distinguishing features

The obvious question is: “What makes ML/I different from GPM and other macro processors?” Although ML/I slightly resembles GPM, there are some radical differences.
The first is that macro calls need no leading symbol to identify them; all input is potentially a macro call. This means that ML/I can be used to process any arbitrary stream of text. The second is that ML/I treats everything as a macro call, even material generated as the result of an ongoing call, so it is possible to nest macro calls to an arbitrary depth, limited only by the storage available. This approach is very powerful, but also potentially dangerous, and it is easy to become mired in a twisty series of macro calls, almost all alike. Should the user wish to retain a leading symbol for macro calls, a way is provided to define one or more warning markers, which can be just a single character (e.g. $). Once this has been done, ML/I switches into warning mode, where it behaves (for this purpose) much more like GPM.

There are no predefined symbols (such as $, < or ~) in ML/I. This means that it is possible to feed almost any file of text to ML/I, and it will be faithfully replicated as output. In other words, there are no restrictions on the kind of text that can be processed, given care when writing definitions of macros and other constructions. The only exception is the presence of the names of the operation macros, which will be discussed in the next section. ML/I also contains a rich set of basic constructions (see below), and other operations (such as macro-time variables, conditionals and functions).

Using ML/I

The most important thing to realise is that everything is treated as a macro call. New constructions are defined by calling predefined macros called operation macros; there are usually twenty of these, and their names start with MC. Fourteen operation macros are used to change the processing environment (i.e. to define or delete constructions), and two more are macro functions used to return useful information. The remainder are used for various special purposes. Apart from the macro functions, all operation macros produce no output when called, their effect typically being to change the environment in some way.

ML/I supports five different kinds of construction: macros, skips, inserts, warning markers and stop markers. The last two are used relatively rarely, so they will not be discussed further; the reader is referred to the documentation for details.

Macros are fairly obvious: they are constructions that, when encountered in the input, result in some action and possibly some output. A macro name can be a single character, a word, or a sequence of these, including optional and/or mandatory spaces. The name (or name delimiter) is followed by an arbitrary number of secondary delimiters (again of any form), terminating in a closing delimiter. A name delimiter can stand on its own, without any following delimiters, if desired. The text between successive delimiters forms the arguments of the call. To define a macro, the operation macro MCDEF is used, e.g.:

```
MCDEF PIG AS DOG
```

After this, each subsequent occurrence of PIG in the input is replaced by DOG.

Skips can be considered as a degenerate form of macro, where the same delimiter rules apply. However, they are a ‘safe space’ where macro calls are generally not recognised. The delimiters and/or the ‘arguments’ (intervening text) can be copied to the output, or deleted, as required. The most common use of skips is as so-called literal brackets, where evaluation of the replacement text of a macro is deferred from definition time to the time of actual call. Such brackets must not appear in the output, must copy across (without further evaluation) the text they enclose, and must be correctly matched if nested. Definition of these, with a nod to GPM, might be:

```
MCSKIP MT,<>
```

Here, the M option ensures that nested < > pairs are matched, so that the skip ends only at the > that balances the opening <, while the T option copies the intervening text to the output (to copy the delimiters as well, the D option is used). It is trivial to use the skip name itself in text, by surrounding it with another skip using just the T option (there is no limit to the number of different skips that can be defined). A skip with neither option is an easy way of including comments:

MCSKIP @ WITH @ NL

@@ This is a comment

Note the use of the NL keyword to denote a newline, because a real newline terminates the call to MCSKIP. Inserts provide a way to insert certain elements into the output (e.g. arguments, macro variables, etc.), and are introduced once again by a name delimiter; they have exactly one additional (closing) delimiter. The closing delimiter is necessary because the intervening insert specification can be quite complex, not a single digit as in GPM. A typical insert definition (again, with a nod to GPM) might be:

MCINS ~.

The insert name is ~, and the closing delimiter is ‘.’.

Macro variables

ML/I supports macro variables. These are mostly integer variables, although the C version of ML/I also allows limited character string variables. Permanent variables have global scope and extent, and it is possible to request as many of these as required (subject to storage limits). They are named P1, P2, ..., and their values are inserted by using an insert, e.g. ~P2.

Temporary variables (T1, T2, ...) exist for each invocation of a macro; by default, macros have three temporary variables for each call in progress, although more can be requested. The first three are initialised to useful values, such as the number of arguments to the macro call.

Character variables (C1, C2, ...) provide an efficient method of remembering short character strings. They are global in scope and extent, and it is possible to request any number of them, each with a specified maximum length.

System variables (S1, S2, ...) provide information about the system on which ML/I is running, and provide facilities such as input and output stream selection. The number of these is fixed.

Arguments

A macro call may have any number of arguments, including zero; null arguments are permissible. Arguments are retrieved by inserting them, usually with the insert flag A; for example, to insert argument 2: ~A2. There are other flag characters to preserve surrounding whitespace and to prevent the argument being evaluated for macro calls.

Conditions

Conditions are implemented using the operation macro MCGO, which provides a simple conditional go-to (MCGO also provides an unconditional go-to). The destination is a label, placed using an insert and the letter L followed by a number; this has a null replacement text. Examples might be:

MCGO L3
MCGO L2 UNLESS T1 EN 2

Here, the EN means ‘equals numerically’; other comparisons (e.g. GR for numerical ‘greater than’, or = for textual equality) are possible.
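To make the insert notation concrete, the following Python sketch resolves a small subset of insert specifications (the text between ~ and .): the A flag for arguments, the D flag for delimiters, and bare expressions over P and T variables using + and -. It is an illustration under stated assumptions, not ML/I's own algorithm: the function names are hypothetical, and numbering delimiters so that delimiter n is the one following argument n is an assumption chosen to agree with the SET example later in this article.

```python
import re
from typing import Dict, List

# One operand: a permanent or temporary variable (P1, T2, ...) or an integer.
_OPERAND = r"(?:[PT][0-9]+|[0-9]+)"
_EXPR = re.compile(rf"{_OPERAND}(?:[+-]{_OPERAND})*")
_TOKEN = re.compile(rf"{_OPERAND}|[+-]")


def eval_expr(expr: str, env: Dict[str, int]) -> int:
    """Evaluate a tiny macro-time expression such as 'T1-1' or 'P2+3'."""
    if not _EXPR.fullmatch(expr):
        raise ValueError(f"unsupported expression: {expr!r}")
    total, sign = 0, 1
    for tok in _TOKEN.findall(expr):
        if tok in ("+", "-"):
            sign = 1 if tok == "+" else -1
        else:
            total += sign * (env[tok] if tok[0] in "PT" else int(tok))
    return total


def _numbered(expr: str, env: Dict[str, int], items: List[str]) -> str:
    """Pick item number <expr>, counting from 1."""
    n = eval_expr(expr, env)
    if not 1 <= n <= len(items):
        raise IndexError(f"no item number {n}")
    return items[n - 1]


def resolve_insert(spec: str, args: List[str], delims: List[str],
                   env: Dict[str, int]) -> str:
    """Resolve an insert body: A<expr>, D<expr>, or a bare expression."""
    if spec.startswith("A"):
        return _numbered(spec[1:], env, args)
    if spec.startswith("D"):
        return _numbered(spec[1:], env, delims)
    return str(eval_expr(spec, env))
```

With the call SET A = B + C - D and T1 set to 3, the specification AT1 yields C and DT1-1 yields +, which is how an indexed insert lets a macro walk through its arguments in a loop.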
Flexible delimiter structure

One major feature of ML/I is the ability to have arbitrary numbers of arguments to a given macro, and to have them separated by choices of delimiter. A simple example might be a macro SET, taking a first argument of a variable name, followed by two more arguments separated by either + or -, finishing with a newline. This might be defined as:

MCDEF SET = OPT + OR - ALL NL AS ..

and then one could use SET A = B + C or SET A = B - C as desired.

This can be extended using nodes, which allow the delimiter structure to loop back on itself; the context indicates unambiguously whether the node is being ‘placed’ or ‘gone to’. Nodes are the letter N followed by a number. The following would enable an arbitrary number of occurrences of + and/or -; at call time the arguments would be processed by a loop over a temporary variable, using the value of T1, which is preset to the number of arguments:

MCDEF SET = N1 OPT + N1 OR - N1 OR NL ALL AS ..

If the user needs to set up a macro whose delimiters clash with one of the keywords used (such as OR), it is a simple matter to make a temporary change by using the MCALTER operation macro.

A real example

The following will provide a real example of how ML/I could be used to map the SET statement previously mentioned into ICL 2900 assembly language:

MCINS ~.

MCSKIP MT,<>
MCDEF SET = N1 OPT + N1 OR - N1 OR NL ALL
AS < LSS ~A2.
MCSET T1 = 3
~L4.MCGO L2 IF ~DT1-1. = +
MCGO L5 UNLESS ~DT1-1. = -
SUB ~AT1.
MCGO L3
~L2.
ADD ~AT1.
~L3.MCSET T1 = T1 + 1
MCGO L4
~L5.
ST ~A1.
>
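For comparison, the expansion performed by the ML/I SET definition can be restated in Python. This sketch uses a regular expression (a convenience assumption, not ML/I's delimiter matching) to split the statement into the target, the operands and the + or - delimiters between them, then emits the same ICL 2900 instructions: LSS for the first operand, ADD or SUB for each later one, and ST for the target. The function name expand_set is hypothetical.

```python
import re
from typing import List

_SET = re.compile(r"\s*SET\s+(\w+)\s*=\s*(.+?)\s*")


def expand_set(statement: str) -> List[str]:
    """Translate 'SET x = a + b - c ...' into ICL 2900-style instructions."""
    match = _SET.fullmatch(statement)
    if match is None:
        raise ValueError(f"not a SET statement: {statement!r}")
    target, expression = match.groups()
    # Operands and +/- delimiters alternate, much as ~An. and ~Dn. do in the macro.
    parts = re.split(r"\s*([+-])\s*", expression)
    operands, operators = parts[0::2], parts[1::2]
    if any(not operand for operand in operands):
        raise ValueError(f"missing operand in {expression!r}")
    code = [f"LSS {operands[0]}"]  # load the first operand
    for op, operand in zip(operators, operands[1:]):
        code.append(f"{'ADD' if op == '+' else 'SUB'} {operand}")
    code.append(f"ST {target}")  # store the result
    return code
```

Like the ML/I version, it accepts any number of + and - operands; unlike it, it is a separate program rather than a construction recognised wherever SET appears in the input text.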

The ML/I definition above introduces macro-time assignment and expressions, indexing of variable names, labels, and macro-time conditionals. The input:

SET A = B + C - D

would generate:

LSS B
ADD C
SUB D
ST A

Comparison with GPM

ML/I was clearly modelled on GPM, but was intended for purposes (e.g. extending existing languages) where more power and freedom were required. This is reflected in the lack of a leading symbol for macro calls, and the use of a newline as the closing delimiter of operation macro calls (semicolon was used originally, but it was found to be cumbersome; consider the necessity for dnl in m4). This extends to the freedom of choice provided when it comes to skip and insert names. There are also many more direct facilities for macro-time calculations and decisions. Lastly, ML/I has clearly separated output and error streams, as well as multiple input and output streams on many implementations.

Applications

ML/I has been used for many things. These include the extension of existing programming languages, translation between programming languages (including dialects) and general text processing. The author has used it to simplify the preparation of firewall rules. One interesting application was the extraction of crew names from several hundred heterogeneous, badly written web pages originally written for a USAF memorial website; this task had defeated many other people and languages.

Preservation status

Despite its age, ML/I is still in active use. Source code is portable and readily available via the ML/I website in all of the languages mentioned. Documentation is also available, in PDF and in HTML. Incidentally, a number of other pieces of software written in LOWL also survive, and can be found on the website. They include SCAN (a BASIC-like text scanning language), ALGEBRA (an algebraic investigation program), and UNRAVEL (a store dump analyser).
Conclusion

ML/I survives as a useful program, over 50 years since it first saw the light of day; it was really quite advanced for its time. It was originally considered large and slow; by today’s standards, it is small and fast (it has been squeezed onto an 8K-word PDP-8). It is very portable, both in the C version and (to a slightly lesser extent) in the LOWL version. It supports multiple I/O streams, making it easy to write text handling utilities using very simple control scripts. It is available to play with, or even to do real work!

Bob Eager retired in 2015 after more than 37 years teaching computer science at the University of Kent. He can be reached at bob@eager.cx .


Elliott 905 ML/I

Andrew Herbert

Bob Eager has described the ML/I macro generator in a companion article above. I found an Elliott 905 implementation of ML/I included in a collection of digitised Elliott paper tapes Terry Froggatt sent me when I began to write an Elliott 900 simulator and wanted some representative programs to try it out. The tape holds source code for MASIR, the Elliott macro-assembler, and makes use of some Elliott 905-specific instructions. The header shows it was authored by Harold Thimbleby when a student at Queen Elizabeth College, University of London. Today Harold is a professor of computer science at the University of Swansea, and Queen Elizabeth College has long since been absorbed into Imperial College without trace.

Reconstructing the Elliott 905 Implementation

Initially I ignored the ML/I tape as I didn’t have a copy of MASIR, and, by the time we had found MASIR, I had forgotten about ML/I. Later, after my investigation of versions of Strachey’s General Purpose Macrogenerator (GPM) for the Elliott 900 series, Terry reminded me of ML/I, but the absence of any test data or documentation provided an excuse for further procrastination. Finally, searching for ML/I on the Internet, I came across the website maintained by Bob Eager (www.ml1.org.uk) that holds source code and documentation for ML/I together with information about ports to various (now mostly historical) computers. There was no mention of an Elliott 905 port, although there was, unsurprisingly, an Elliott 4100 version, as Kent had a 4100 machine in the 1970s. Finding Bob’s site provided the information needed to assemble the MASIR source and test it. The only significant difficulty was that Harold had written the 905 version to the interface of the Elliott Rados [Random Access Disc Operating System], for which I had no documentation. However, changing conditional assembly options in Harold’s source produced a standalone paper tape binary which would work with a handcrafted paper tape “executive”.
Having got the program running I emailed Terry and Harold to share the good news. This had two useful results: Terry found the original correspondence from the time when Harold had contributed his implementation to the Elliott Computer Users Association in 1976, and Harold dug out a box containing more Elliott tapes, his project report and various other interesting documents. As Bob describes in his article, ML/I was distributed as source code for LOWL, a machine-independent assembly code which, it was assumed, could be readily translated to a native assembly code for the target machine. Harold found that the MASIR macro assembler lacked some of the facilities assumed by LOWL (e.g., the ability to construct the address of a label as a value, and various forms of string processing), so he resolved to use GPM for the translation step and wrote his own implementation in Elliott SIR (a non-macro assembler). Like others, he transliterated the CPL from Strachey’s Computer Journal paper. Harold’s project report describes a tool chain containing:

(Step 3 generated MASIR macro calls for linking to the executive, hence the use of MASIR rather than SIR in step 4.) Sadly programs 1), 2) and 3) were lost, but Harold’s tapes included an example of output from the pre-processor from which they could be reconstructed, and, by a similar process of reverse engineering, the GPM macros for LOWL instructions and directives and the hash table utility could be reconstructed from the MASIR source. I also wrote a tool to generate a hash table of built-in identifiers: the ML/I documentation suggests this be done by hand, but that seemed too lazy an option. The recovery was done as a background project over a number of months and now I have the full tool chain working. I have verified that the various sources and intermediates in Terry and Harold’s collections match those produced by my version of the tools. I’ve also retrieved the source for the last release of ML/I (issued in 1986, ten years after Harold’s 1976 version) and translated, assembled and tested it successfully using the same process. Peter Brown, the designer of ML/I, wrote a few other applications in LOWL: ALGEBRA, a package for exploring logics; SCAN, a text analysis tool; and UNRAVEL, a store dump analysis package. Having found the source of ALGEBRA among Harold’s tapes, I have run that through the tool chain successfully too. The others require some additional work in writing machine-dependent support code, so remain a project for another day.

Technically, reverse engineering Harold’s tools was straightforward, with one exception, which remains an issue today whenever one language is embedded in another. LOWL has a construct MESS ‘text’ meaning generate code to output “text” to the output stream. The LOWL alphabet of course includes the characters that GPM reserves as its own so-called “string quotes”.
Thus the innocuous LOWL MESS "<" becomes :MESS,<"<">; in GPM (here, replacing GPM’s original § with a colon), and sends GPM off on a wild goose chase looking for the missing matching > that GPM thinks is needed to close a nested, quoted text. One solution is to have GPM turn off macro-processing when scanning LOWL strings, but this is not general. (It’s what Thimbleby did, however.) Martin Richards’ version of GPM (BGPM) has built-in macros that generate the GPM left and right quote symbols, but this would require the pre-processor to parse LOWL strings and make appropriate substitutions.

Reflections

Having explored both GPM and ML/I, it is interesting to reflect on the differences between the two systems and why macro processors are less popular these days.

GPM versus ML/I

I found GPM easier to learn and remember than ML/I: GPM is a smaller language but struggles with string processing. Its sparse syntax can make for impenetrable code: the original GPM had no notion of whitespace as such, so, unlike ML/I, its definitions rapidly became opaque because they could not be written with clear spacing, let alone indentation. This is fixed in Martin Richards’ BCPL version of GPM (BGPM) through the addition of a special newline symbol that is filtered out on input. ML/I is richer and more capable of pattern matching and editing, but personally I prefer to use a string processing language like Snobol (or Unix regular expression tools) for such tasks. It may be, of course, that familiarity with GPM and Snobol is clouding my judgement.

MASIR as a Macro Assembler

MASIR needs improvement – it lacks facilities to perform arithmetic on labels, to calculate the address of a label (unless it is global) or to lay down long text strings. The macro facilities, however, are fit for purpose. Terry has a source for MASIR, so perhaps on another rainy day...
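The string-quote clash in the MESS example can be reproduced with a few lines of Python. The hypothetical function open_quote_depth counts GPM string quotes (here < and >, as in the example) still open at the end of a piece of text; optionally it ignores everything between double quotes, roughly the workaround of not recognising GPM quotes inside LOWL strings. It is a sketch under simplifying assumptions, not a GPM implementation, and it shares that workaround's lack of generality: a LOWL string that itself contained a double quote would confuse it.

```python
from typing import Optional


def open_quote_depth(text: str, lq: str = "<", rq: str = ">",
                     string_delim: Optional[str] = None) -> int:
    """Return how many GPM string quotes remain open at the end of text.

    If string_delim is given, characters between a pair of string_delim
    characters (a LOWL string literal) are not inspected at all.
    """
    depth = 0
    in_string = False
    for ch in text:
        if string_delim is not None and ch == string_delim:
            in_string = not in_string
            continue
        if in_string:
            continue  # quote characters inside a LOWL string are just data
        if ch == lq:
            depth += 1
        elif ch == rq and depth > 0:
            depth -= 1
    return depth
```

For the translated call :MESS,<"<">; the plain count finishes with one quote still open, which is the missing > that GPM goes looking for; skipping the LOWL string brings the count back to zero.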
Macro Generators Today

Macro generators today come in two guises: in typesetting systems such as LaTeX, popular with academics, and in macro assemblers. Embedded macro generators are rarely found in modern programming languages. Standalone macro generators are little used, although the Unix toolset includes one – m4. As general-purpose tools, standalone macrogenerators have been almost displaced by “regular expression” based tools such as grep, sed, tr and awk, which are powerful enough to meet many pattern matching and editing needs. Most modern programming languages include regular expression based pattern matching and editing, either as a language facility or via library routines. These facilities allow the development of custom tools as user programs. This, coupled with the ability to run user programs interactively in modern operating systems, reduces the need to provide a built-in text processing utility at the command level.

I would suggest that macro generators embedded in programming languages died because (i) constant definitions have become first-class citizens, (ii) languages have become more platform independent, reducing the need for conditional compilation, and (iii) other uses of macros were eclipsed by object orientation.

All of the above said, a macrogenerator like ML/I would be very helpful if one wished to write a Microsoft Word .docx to HTML converter, a task too complex for the simpler regular expression editing tools and therefore requiring some programming effort. The primitives of ML/I and its derivatives provide an excellent vehicle for such a task.

Acknowledgement

I would like to thank Harold Thimbleby for reading through the first draft of this article and for his helpful comments and suggestions. Software and documents relating to this article can be retrieved from www.computerconservationsociety.org/software/elliott903/more903 .

ICL 1900 Extended Floating Point Remembered

Michael J. Clarke

Warning! This is a horror story! It recounts my experience with the 1900 extended precision floating point – the hardware, and the software extracodes in the E6G3/4 executive (these were available as an option when the executive was compiled for your machine). The item in Resurrection 82 about the inherent inaccuracies of floating point arithmetic brought back memories of six months (in 1976) spent working double shifts at Bracknell (9-4 and midnight into the small hours and beyond) on the 1906S, getting the hardware and software to deliver the same answers to maths processes using the same input numbers!

First I had better explain what extended floating point was. The 48-bit floating point units (FPUs) in the West Gorton 1900 series machines (1907, 1905/7E/F and 1903/4/6A/S) were quite common; where the floating point hardware was not installed, software extracodes in the executive took its place. The extended FPU (ExFPU) was only fitted as an option to the 1906A/S and had an extra 48 bits, 96 bits in all, 33 bits being added to the length of the mantissa and the remaining 15 bits to the exponent. This meant that the largest number that could be held had a mind-boggling number of zeros (2^29 - 1), and fractional numbers could be held with incredible accuracy. A few 1906A/S machines were fitted with this extended floating point hardware, with a companion set of extracodes in the executive if requested. This usually meant two separate executive programs were provided. I am not sure how many, or even whether all, of the 1906A/S machines had this extended FPU system.

One day the users of the Tax Office 1906S system in Hastings, which had (and used extensively) an extended FPU, came up with the brilliant idea of comparing the data produced by a large financial model, as run using the ExFPU hardware and re-run with the same input data with the hardware switched off.
A simple switch on the machine’s control panel indicated the presence or absence of the ExFPU hardware and could force the object program to use the executive extracodes instead of the hardware unit. This large financial model took over four hours to run on a dedicated machine using the software extracodes – they never did say how long it took to run with the ExFPU hardware. I suspect it would be just a few minutes! The switch in the extended floating point hardware module which disabled the hardware could in theory be used on-the-fly, provided the right executive program was pre-loaded. As far as I was aware NOBODY ever tested this functionality.

The results of the Tax Office’s two runs of the model using the same input data bore no similarities! All the results were positive when run on the hardware. Many were wildly different, and some were even negative, when using the executive extended precision extracodes. I was still employed as the senior programmer on the George III/IV (E6G3/4) executive working out of Bracknell at that time, so finding out what was wrong and fixing it fell to me. The built-in software was invoked directly by the floating point instructions, transparently to the user program. ICL Bracknell had a 1906S fitted with an ExFPU, but getting dedicated access to it was difficult. Hence my midnight second shifts.

The first error, in the extended subtract extracode routine, was causing the negative results; it was easy to identify and was fixed with a simple executive mend. This mend was applied to the Hastings machine’s executive and a second run of the model was arranged using the executive extended extracodes with the same input data as before. This took place the next weekend at Hastings and did indeed deliver results that were all positive, BUT these results still bore little resemblance to those produced by the hardware unit.
There was some suggestion that ICL should pay for the four hours of machine time that the test used. My investigation was not helped by the Tax Office people. They just demanded that ICL should fix the software. I was not even given the source code used in the model. But ICL took the position “No code for the model, no charges for user testing of attempts to fix the problem”. The Tax Office authorities were stubborn and refused to release the source code; indeed, they would not say what was used to compile their model: FORTRAN, COBOL, Algol... information withheld. They would not provide a core dump of the program. They had provided about ten pages of heavily redacted numerical data, showing the input numbers and the large discrepancies in the results. So I had to devise my own process to run in both modes, and then a method to compare the results.

I had access to the source code of the engineers’ hardware test program FLIT. It was written in West Gorton, but its author was not recorded. I think it was originally written for the Manchester Atlas. I modified this program to accept a fixed integer as a seed rather than use a different one derived from the system clock at run time. I added a routine for it to write the function, operands, expected and obtained results to an exchangeable disc for every floating point function being tested. The program took 45 minutes to fill an EDS 60. This process was run with the ExFPU hardware turned on. The special executive that included the extended precision floating point extracodes was then loaded and the process repeated, giving it the same seed as used in the hardware run, with the results written to another EDS 60. The executive normally used at Bracknell did not have the executive extracodes compiled in, hence my need for dedicated access. Run time was three hours plus. I then wrote a simple program to read and compare the contents of the two discs.
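The essence of the modified FLIT procedure can be sketched in Python: a fixed seed so that two runs see identical operands, a record of function, operands and result for every test, and a separate compare step. Everything here is hypothetical: the operation tables stand in for the hardware unit and the software extracodes, Python's random.Random stands in for FLIT's own generator, and the faulty subtract is an invented example, not the actual 1900 bug.

```python
import operator
import random
from typing import Callable, Dict, List, Tuple

Op = Callable[[float, float], float]
Record = Tuple[str, float, float, float]  # (function, operand a, operand b, result)


def run_tests(seed: int, ops: Dict[str, Op], n: int = 1000) -> List[Record]:
    """Generate n test records from a fixed seed rather than the clock."""
    rng = random.Random(seed)  # same seed -> same sequence of operands
    names = sorted(ops)
    records = []
    for _ in range(n):
        name = rng.choice(names)
        a, b = rng.uniform(-1e6, 1e6), rng.uniform(-1e6, 1e6)
        records.append((name, a, b, ops[name](a, b)))
    return records


def compare(run1: List[Record], run2: List[Record]) -> List[int]:
    """Return the indices of records whose contents disagree."""
    if len(run1) != len(run2):
        raise ValueError("runs have different lengths")
    return [i for i, (r1, r2) in enumerate(zip(run1, run2)) if r1 != r2]


# Hypothetical stand-ins for the hardware unit and a faulty software routine.
HARDWARE: Dict[str, Op] = {"add": operator.add, "sub": operator.sub,
                           "mul": operator.mul}
FAULTY_SOFTWARE: Dict[str, Op] = {**HARDWARE, "sub": lambda a, b: b - a}
```

Comparing two runs of the same implementation with the same seed should always give an empty list; as recounted below, when that check failed at Bracknell, the fault lay outside the arithmetic routines themselves.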
The compare process spewed out lots of errors on its first run – reams and reams of lineprinter paper. There was so much that I had to abandon it. Different results by the thousands! It took quite a while to process this error information! I eventually decided that a complete rewrite was needed. I rewrote the ExFPU software as a standalone set of extracodes, avoiding the hard-to-read and hard-to-debug routines that had been added to the normal FPU extracodes as INCLUDE sub-processes. I added annotations as I worked out what each instruction function was doing, and worked outwards from the first error reported: I fixed each error found in the existing code with a quick-fix mend, and then incorporated that mend into my new code. After many evenings running the modified software extracodes and then the comparison software, I still could not get the results to agree. The results were giving different discrepancies depending on the integer seed that was used. Please note that every change I made required two new executive compilations, one with the new mend and one with that mend incorporated into the new ExFPU modules. The 1906S had to be stopped and the new executive loaded for each test, and the official executive reloaded when I had finished testing, often after 4am!

One evening I accidentally ran the comparison program using two discs produced by the software extracode process using the same integer seed. There were errors; the results did not agree! More reams of lineprinter paper were produced. One week later (after many frustrating hours running and re-running this process and not getting the same results twice), I discovered a fault in the basic executive: it stored floating point numbers on executive entry and then restored a slightly different number on the return from executive to the user’s program. This only happened when an interrupt occurred just after an ExFPU instruction had been obeyed using the executive extracode.
My version of FLIT only used ExFPU software, so it never found the discrepancy. I suspect that if left to run for a long, long time, FLIT would have found it. That error was quickly fixed, and I re-ran the process twice and compared results. All good! I compared against the hardware-produced results. All good again! In hindsight I should have repeated this process using many different seeds; I re-ran it with just one seed, with high expectations, and got answers that agreed. I submitted my newly compiled executive to the Tax Office for testing. Alas, although the results were similar with the hardware ExFPU on or off, they were not quite right, and the worst news was that while most of the results almost agreed there were two glaring discrepancies. Even worse news was that a comparison of re-runs, both with the hardware ExFPU turned on and using the same input data, gave differing results! Quite large differences!

Then there was another brilliant idea from the Tax Office staff. I think I had told them of my unfortunate experience when running my comparison program on data produced by the extracodes. This comparison of two runs of the model with the same input data, both using the ExFPU hardware, gave slightly differing results, with just one or two glaring differences. They changed the data and re-ran the model a few times and got different results almost every time! It seemed that the Tax Office had been using data that was suspect for many years! The programmer at the Tax Office let slip that his financial model was used when testing the effects of changes to the country’s income tax rates and thresholds! Pressure was brought to bear upon ICL and I got better access to the Bracknell 1906S. Back to the drawing board and yet another modification to the FLIT program. More late night test runs! I could not get my software to fail; every run produced results that were identical with the ExFPU hardware on or off.
I tried all sorts of seed data; the results always agreed. Then one evening the system operators of the Bracknell machine had held back a two-hour compilation run of the ICL COBOL source code. They thought that they could run this process alongside my floating point tests. I agreed to it. Yes, you guessed it. The ExFPU hardware gave random differing results. The software was giving random different results. Two design faults in the 1906S interrupt handling process were found and identified: they concerned the way the hardware handled overflow and underflow when an unrelated hardware interrupt from a random peripheral occurred while either condition was active. A very rare event! A peripheral interrupt caused the executive to dump the contents of the floating point unit so that it could be restored once the interrupt had been handled. But sometimes the system was corrupting the least significant part of the mantissa. This was only happening when underflow or overflow had just been set by the interrupted ExFPU instruction.

A little explanation here about these quite rare conditions. Overflow occurred when the number generated by the last obeyed FPU instruction had too large an exponent. Programmers could adjust the accuracy of their data by applying artificial ‘power of’ scaling factors to their results. Underflow was an entirely different kettle of fish! The hardware and software gave several different options about how underflow conditions were to be handled. These all relied upon a fictional floating point number known as floating point zero. You cannot represent zero as a normalised floating point number; it cannot naturally exist, so if an underflow condition was created the hardware/software had to be told, and the programmer then had to decide how to deal with this microscopically small number that was still present in the ExFPU and FPU registers. Underflow occurs when the exponent becomes too negative and the mantissa still cannot be normalised. Perhaps more information is required.
In normal use a floating-point number is represented by ½ plus lots of ever smaller fractions, right down to the smallest fraction the system can hold. FPU maths upon these numbers can and does produce numbers longer than can be held, so rounding is then invoked. Again, the type of rounding can be chosen by the programmer at compile time! The rounding process will always try to produce a number whose most significant digit is a 1 if the number is positive, indicating +½, or a 0 if the number is negative, indicating almost -½. NB: -½ cannot accurately be contained. The number 1 in FPU representation is ½ times 2 to the power of 1. Zero is a nonsense number! A number that cannot be normalised, because its exponent is already as negative as the representation can hold (whatever the exponent’s minimum size), sets underflow; the programmer can then declare it to be floating point zero, and the hardware and software can then use this number.

The interrupt problem was quickly confirmed by using numbers that always caused either of these conditions and then running other work alongside the test program. The error was identified and fixed by modifying the hardware and also making a software change. These errors only applied to extended floating point hardware machines, although the changes to the executive applied to both the extended and the normal floating point extracode executive entry and exit routines. No one dared to inform users of the normal single precision executive extracodes that they sometimes got their numbers wrong! Thankfully there were very few users of that functionality of the E6G3/4 executive extracode option! The Tax Office was quite relieved to be able to run and re-run its financial model and get the same results with the same data input, regardless of whether the ExFPU hardware was switched on or off. I had found and fixed seven errors in the extended extracode software, two errors in the normal single precision executive software and two design errors in the 1906S hardware.
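The normalisation rule described above can be illustrated with Python's math.frexp, which splits a float into a mantissa of magnitude between ½ and 1 and a power of two. The sketch assumes a two's-complement mantissa, so negative values are normalised into [-1, -½) and -½ itself is held as -1 × 2^(e-1); the exponent limit e_min is an illustrative value, not the documented 1900 or ExFPU range, and zero is simply reported as having no normalised form, in the spirit of "floating point zero".

```python
import math
from typing import Tuple


def normalise(x: float, e_min: int = -256) -> Tuple[float, int, bool]:
    """Return (mantissa, exponent, underflow) with x == mantissa * 2**exponent.

    Positive mantissas lie in [0.5, 1) and negative ones in [-1, -0.5),
    assuming a two's-complement mantissa. e_min is an illustrative limit.
    """
    if x == 0.0:
        # Zero has no normalised form: flag it for "floating point zero".
        return 0.0, e_min, True
    m, e = math.frexp(x)  # 0.5 <= |m| < 1
    if m == -0.5:
        m, e = -1.0, e - 1  # -1/2 is not normalised; hold it as -1 * 2**(e-1)
    return m, e, e < e_min  # exponent too negative -> underflow
```

For instance, normalise(1.0) returns (0.5, 1, False), i.e. ½ times 2 to the power of 1, while a value as small as 2^-300 is flagged as underflow under the assumed limit.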
It seems that the Tax Office had been using suspect data for a number of years; no wonder changes to the taxation system often had unforeseen consequences!

A Life in Computing

Roger Collier

Memories of the English Electric KDP10

I joined English Electric in 1960 after getting a University College London maths degree that included a year of Birkbeck College’s computer science programme. Jobs were assured for anyone who’d ever written a program. I joined EE because they paid me £50 a year more than the next best offer. EE’s London computer group office was in Kingsway, with the sales and systems staff squeezed into a couple of offices behind the DEUCE service bureau. I became the third member of the KDP10 South of England systems team. The KDP10 was essentially a re-badged version of the American RCA 501, one of the first large-scale (in those days) fully transistorized commercial machines.

Training was minimal and mostly informal. I was given a programming manual to study, followed by occasional helpful conversations with the two much more senior and knowledgeable members of the systems staff. After the convolutions necessary for optimal programming of Birkbeck’s MAC drum memory machine (calculate the time to execute each instruction in terms of partial revolutions, locate the next instruction accordingly), the KDP10 was refreshingly straightforward. Instructions were all ten octal characters, formatted as OO AAA RR BBB (Operation; From Address; Registers; To Address). Unusually, in what was still primarily the punched card era, all fields could be variable length, with separation symbols, good for reducing internal and external storage use (so long as the separators weren’t also used for fixed-length items). Consistent with the orientation to commercial applications, decimal arithmetic instructions were included, as well as binary. Computation could be simultaneous with input and output (if correctly programmed). Software was almost non-existent.
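The storage argument for variable-length fields can be illustrated with a short Python sketch comparing a record whose fields are each followed by a separation symbol with the same record padded to fixed field widths. The separator character, field widths and function names are all hypothetical, not KDP10 conventions; the check in pack_variable reflects the general caveat that a separation symbol must not also turn up inside the data.

```python
from typing import List

SEP = "\x1f"  # hypothetical field separator; not the KDP10's actual symbol


def pack_variable(fields: List[str]) -> str:
    """Variable-length record: each field is followed by a separator."""
    if any(SEP in f for f in fields):
        raise ValueError("field contains the separator symbol")
    return "".join(f + SEP for f in fields)


def unpack_variable(record: str) -> List[str]:
    """Split a variable-length record back into its fields."""
    return record.split(SEP)[:-1]  # drop the empty piece after the last separator


def pack_fixed(fields: List[str], widths: List[int]) -> str:
    """Fixed-length record: each field padded with spaces to its width."""
    if len(fields) != len(widths) or any(len(f) > w for f, w in zip(fields, widths)):
        raise ValueError("fields do not fit the record layout")
    return "".join(f.ljust(w) for f, w in zip(fields, widths))
```

For a record of SMITH, 12 HIGH ST and 42, the variable-length form takes 20 characters, against 56 for a padded layout with widths of 20, 30 and 6.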
A bootstrap routine initiated from the console loaded individual programs, while a sort program was available that took advantage of the tape drives’ ability to read in both forward and reverse directions. There was little communication with the American company, and RCA innovations such as an assembler language (mysteriously, to British English speakers, called EZ-code) and COBOL were spurned. In theory, a KDP10 could be configured as a very large machine (for the day): design and addressability allowed up to sixty-four 33KB magnetic tape drives and up to 256K of core storage. Additional growth (and simultaneity of processing) was possible by adding standalone high-speed printers. For my first two years with EE, my job was to assist the (mostly) non-computer-literate sales staff. When a sale seemed possible, I configured a system to meet the potential customer’s needs. This meant determining the number of tape drives (at least four, to facilitate sorting) and estimating core storage requirements (rarely more than 48K, considered adequate for any commercial process). Rough formulae gave estimated run times for sorts (how many? what file size?) and other processes (computational complexity? possible overlap of computation and I/O?). None of this work bore fruit until 1962, when EE sold two KDP10s (!), one to a bank in Argentina, and one to Schweppes. The first required me to spend a month in Buenos Aires helping to train bank staff; the second took me to the Schweppes office at Marble Arch. The Schweppes system was intended to streamline order processing for both Schweppes and its various subsidiary companies. Orders would be taken over the phone and entered into Burroughs machines with paper tape output. The paper tapes would be input into the KDP10, and each item priced to create invoices and monthly statements. Sales reports would be a by-product, with future planned development of an order pulling system for the warehouses.
I was assigned to assist with the installation but, since no-one at Schweppes had any computer experience, soon found myself de facto head of the programming team. I may have been a competent coder, but I had no background in managing a project, essentially no supervision, no deadlines, and little idea of whether the programs we were coding would run efficiently on the machine I had so blithely configured. Over the next several months, the Schweppes team and I slowly turned the rough outline design into a series of programs, all still just a series of octal coding sheets. With no machine yet installed, we had no sense of run times, only that our code should fit into the available memory. Finally, the big day arrived. The Schweppes KDP10, with five tape drives (one dedicated to the printer), 32K of memory, paper tape input, an offline printer, and a wonderfully impressive five-feet-wide multi-coloured-lighted console, was installed at Marble Arch. Full-scale testing could begin. The limitations–and some advantages–of the KDP10 now began to be apparent. Even though EE had provided two on-site maintenance engineers, breakdowns were frequent, both of the transistorized logic boards and of mechanical devices like the printer and tape drives. More critically, every time a programming error (like arithmetic applied to a non-numeric field) was encountered, the computer halted and it was up to the operator to record the address location of the error from the binary lights on the console. (The combination of inexperienced programmers, octal machine code, and paper tape punch transcription created a phenomenal number of errors.) More helpfully, the console allowed programs to be executed one instruction at a time, with the binary lights showing each instruction and associated address contents. It was excruciatingly time-consuming, but we became expert at reading binary, and testing gradually proceeded, still with just a few test records at a time. 
The console–a source of wonder to passers-by stopping to gaze into the street-level windows of the computer room–also began to give us some clues about run times. If the console lights flashed in a blur, a program was executing at a reasonable speed. On the other hand, the key product pricing program caused the lights to cease blinking for several seconds at a time, something we should have been seriously concerned about. It was another year before the Schweppes order/invoicing system was operational. Confident (wrongly) that our testing was close to completion, I had moved on from EE to be a consultant, working with Univac and Honeywell machines, while (I later learned) EE had been forced to upgrade the Schweppes configuration and to rewrite many of the programs, all at no cost, in order to fit each day’s run times into no more than a day. The KDP10 was a learning experience for me and for EE. Including its slightly upgraded clone, the KDF8, only a dozen machines were sold. EE’s decision not to import any part of the system led to delays in system introduction and the loss of the marketing advantage of having an operational machine–a rarity in those days. At the same time, EE’s engineering orientation resulted in far more corporate enthusiasm for the development of the KDF9, leaving the KDP10 as something of an orphan. Even so, it’s hard to imagine the KDF9’s relative success could have been achieved without the manufacturing experience with the transistorised KDP10 or the availability of the peripheral devices that accompanied it. If others also have memories of the KDP10, they can contact the author at rcollier@rockisland.com. |


When I asked for contributions to A Life in Computing, readers were generous. It has taken me a couple of years to work through the initial offerings, but now stock is running low. More interesting and entertaining material like this would be much appreciated. Please send your jottings to the editor at dik@leatherdale.net when you have a spare moment.

50 Years Ago .... From the Pages of Computer Weekly
Brian Aldous

Terminals On-Line via PDP-8 Link: Progress on the IBM 360/65 based at Harwell Users Workshop has now reached the point where some 64 terminals are connected on-line via a DEC PDP-8 used as a message concentrator. Eventually some 160 terminals will be connected via an AEI Con Pac 4060, and it is planned then that the PDP-8 link will be used for links with other experiments. (CW117 p3)

ICL Programs for N/C Applications: A new series of programs for numerical control applications has been announced by ICL. The significant features of the programs are the extensive use made of macros and the reduction in clerical effort involved. (CW117 p5)

World's Biggest Group: The largest automation group in the world has been formed within the GEC empire by a combination of the facilities of GEC-AEI, English Electric and Elliott-Automation in a new company to be known as GEC-Elliott-Automation Ltd. (CW118/119 p1)

N/C Methods for Design of Ship Hulls: A system for the design and production of ship hulls by numerical control methods was presented to members of the British shipping industry in London last week. The system, designed and used by Kockums, the Swedish shipyard, was presented by Isis Computer Services, whose business is concerned mainly with the shipping industry. (CW118/119 p10)

Controlling 2,000 miles of oil pipeline in Canada: Twenty-four PDP-8/S computers and an IBM 360/40 are being used to control 2,000 miles of oil pipeline in Canada. The pipeline is owned by the Interprovincial Pipe Line Co. of Edmonton, Alberta. (CW120 p7)

FBI Encoding of Fingerprint Identification: The first stage in developing a system for encoding fingerprint identification for the US Federal Bureau of Investigation has recently been completed by Mr Joseph Wegstein, staff research scientist of the US National Bureau of Standards Center for Computer Sciences and Technology. He designed the procedure using a computer to produce compact descriptions based on the minutiae of the fingerprint impression. (CW120 p8)

Significant Trends Outlined at OCR Conference: Current trends in optical character recognition in the USA were the subject of a three-day conference held in Hollywood Beach, Florida, just prior to the Fall Joint Conference in San Francisco, where one afternoon session was devoted to a new branch of OCR: HNR, handwritten numeral recognition. (CW121 p13)

Multi-access System 4/50 for Hospitals: A multi-access hospital information system based on an ICL System 4/50 is to be installed at Stoke-on-Trent in April 1970. The system will serve the two main Stoke hospitals, North Staffs Royal Infirmary and City and General Hospital, with a total of 1,300 beds. It will also serve the central out-patients department in Stoke, which handles the majority of the out-patients for over 16 hospitals in the area. (CW121 p20)

Leeds Group Develops Multi-Access: An entirely new multi-access system for the KDF9 has been developed at Leeds University computing laboratory. The system is considered to be more efficient than the EGDON 3 system, which was developed at the Culham laboratory of UKAEA with the collaboration of programmers from Leeds as well as other KDF9 users. (CW122 p1)

High Speed Page Reading System Launched: A high speed page reading system that can cope with conventional typefaces or fonts has been put on to the UK market by the newly formed company, Real Time Systems Ltd. (CW122 p3)

NPL buys KDF9 from the NCC: To meet the growing demand for computer time, the National Physical Laboratory is to purchase a second KDF9. This machine will come from the National Computing Centre, which is planning to replace its present KDF9/1904 system in October this year with an ICL 1905F. (CW123 p1)

New System for BEA's 1903 at Heathrow: Savings of £240,000 over the next three years from extending the 'safe lives' of many aircraft components are expected by BEA as a result of a new computer application. The airline has introduced a system known as Component Time Control System onto its ICL 1903 at its Engineering Base at Heathrow. It will monitor and control over 300,000 aircraft parts. (CW123 p3)

Important influence of LSI is stressed: One of the most important influences upon the development of hardware would be the increasing use of large scale integrated (LSI) circuit components. This point was made by Professor Maurice Wilkes when he spoke to a meeting of the Central London branch of the BCS at Imperial College. (CW125 p3)

ICL takes the lead in UK as market goes on booming: The third quarter of 1968 was a record one for the British computer industry, according to figures issued by the Ministry of Technology last week. A survey carried out by the Diebold organisation of New York also showed that the number of installations in the UK grew faster in the first half of the year than in any other European country. (CW125 p5)

Ticket Booking Plan Introduced by IDH: An agreement has been signed between Computicket Corporation of California, a subsidiary of the Computer Sciences Corporation, and the IPC subsidiary, International Data Highways. It will be used to initiate a computerised seat booking system in London for theatres and other entertainments. (CW125 p24)

London 6600 on Schedule from US: The second CDC 6600 to be installed in the UK arrived at London University from the States last week, and now becomes the most powerful computer in a British university. The new machine will be connected by on-line data links to eight of the university's colleges, three of which, Queen Mary, Imperial and King's, will have interconnected computers. (CW126 p1)

ATLAS Introduce Vehicle Routing Package: A vehicle routing package, ROUTEMASTER, written to run on Atlas, is the latest to be added to the repertoire of applications offered by the Atlas Computing Service of London University. The new package is designed to calculate an efficient solution to a variety of transport conditions, involving vehicles operating from a specific depot calling daily on a large number of customers. (CW126 p3)

DEC's Latest Addition to PDP Range: From Digital Equipment Co Ltd comes news of the latest addition to their computer range, the PDP-12. Designed as a successor to the LINC-8, the PDP-12 is a low-cost laboratory type computer which is expected to find a market in areas such as bio-medicine, oceanography, chemistry, physics, education and industrial applications. (CW126 p9)

Boadicea Know-How to aid World Airlines: Experience gained on BOAC's massive communications project, Boadicea, is to be made available to other airlines on a consultancy basis by a new company to be called International Aviation Computer Services Ltd. The company will have as its chairman Peter Hermon, BOAC's chief of information handling, and is being formed as a subsidiary company of International Aeradio Ltd. (CW127 p1)

PEL Launch P350 Series of Mini Office Machines: Breaking into the market for small office computers is Philips Electrologica Ltd, British subsidiary of Philips Industries, which has launched in the UK a family of office computers which will be known as the P350 series. First of the series to become available in this country will be the P352, a 16K (characters) machine, with paper tape and punched card input and output facilities, and printing at a rate of up to 40 ch/s. (CW128 p22)

Forthcoming Events
London Seminar Programme

London meetings take place at the BCS in Southampton Street, Covent Garden, starting at 14:30. Southampton Street is immediately south of (downhill from) Covent Garden market. The door can be found under an ornate Victorian clock. You are strongly advised to use the BCS event booking service to reserve a place at CCS London seminars. Web links can be found at www.computerconservationsociety.org/lecture.htm. For queries about London meetings please contact Roger Johnson at r.johnson@bcs.org.uk.
Manchester Seminar Programme